Anthropic

Integration with Anthropic is implemented as a separate AI TOOLS block that is placed between the Data Source and the Data Destination. It lets you build a request from Data Source values and pass Anthropic's response to the fields of the Data Destination, so you can automatically receive data from Anthropic and transfer it to the services and systems you use.

The function allows you to send a request to Anthropic and pass the result of the request to the Data Destination.

Navigation:

Connecting Google Sheets as a Data Source:

1. What data can you get from Google Sheets?

2. How to connect your Google Sheets account to ApiX-Drive?

3. Select the table and sheet from which rows will be unloaded.

4. An example of data that will be transferred from Google Sheets.

Connecting Anthropic:

1. What data can be obtained from Anthropic?

2. How to connect your Anthropic account to ApiX-Drive?

3. How to configure data search in Anthropic in the selected action?

Claude Sonnet 4.6

Claude Opus 4.6

Claude Sonnet 4

Claude Haiku 4.5

4. An example of data that will be transferred from Anthropic.

Setting up row updates in Google Sheets:

1. What will the Google Sheets integration do?

2. How to connect your Google Sheets account to ApiX-Drive?

3. How can I configure the selected action to transfer data to Google Sheets?

4. Example of data that will be sent to your Google Sheets.

5. Auto-update and communication interval.

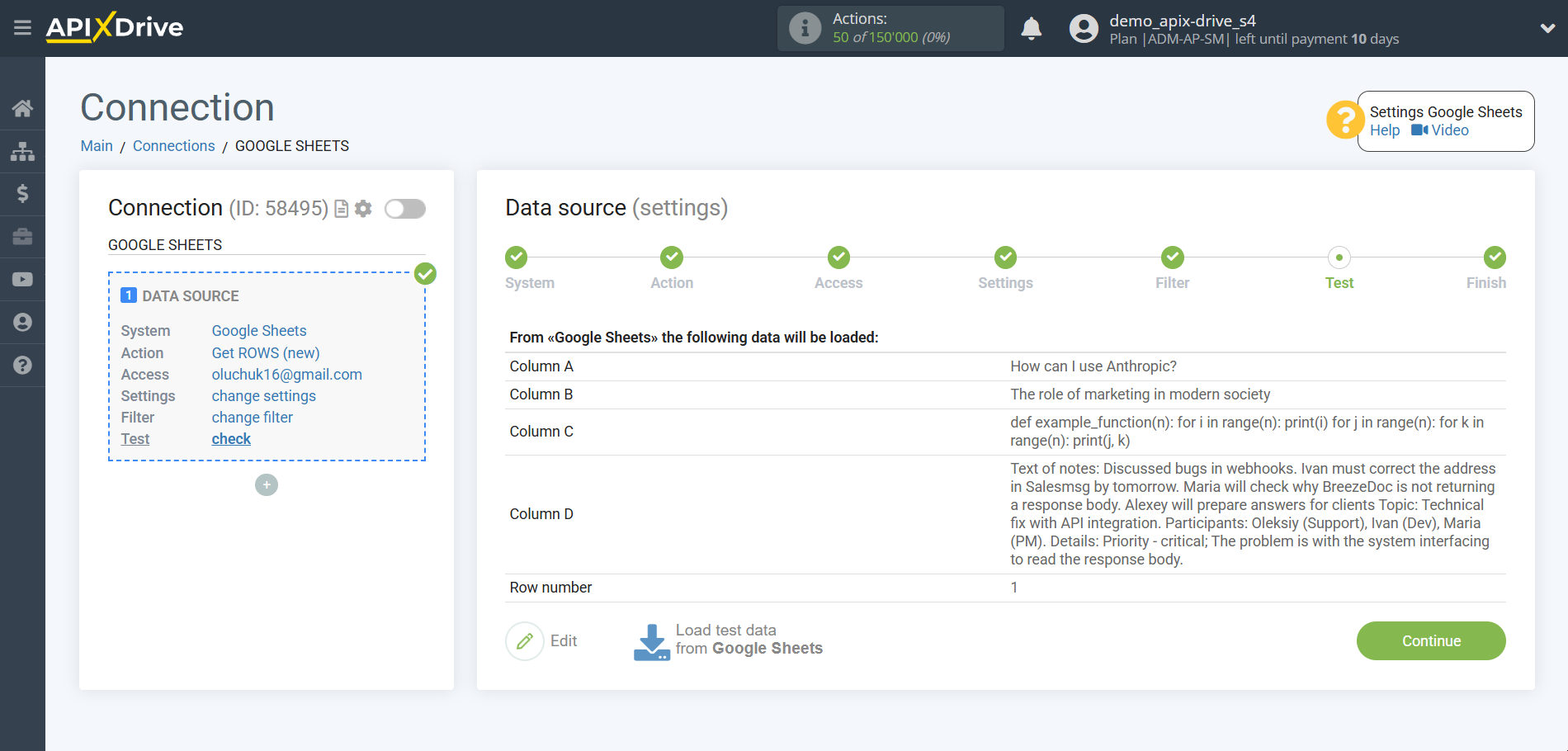

Setting up Data SOURCE: Google Sheets

Let's look at how the function of requesting data from Anthropic and sending the result to Google Sheets works.

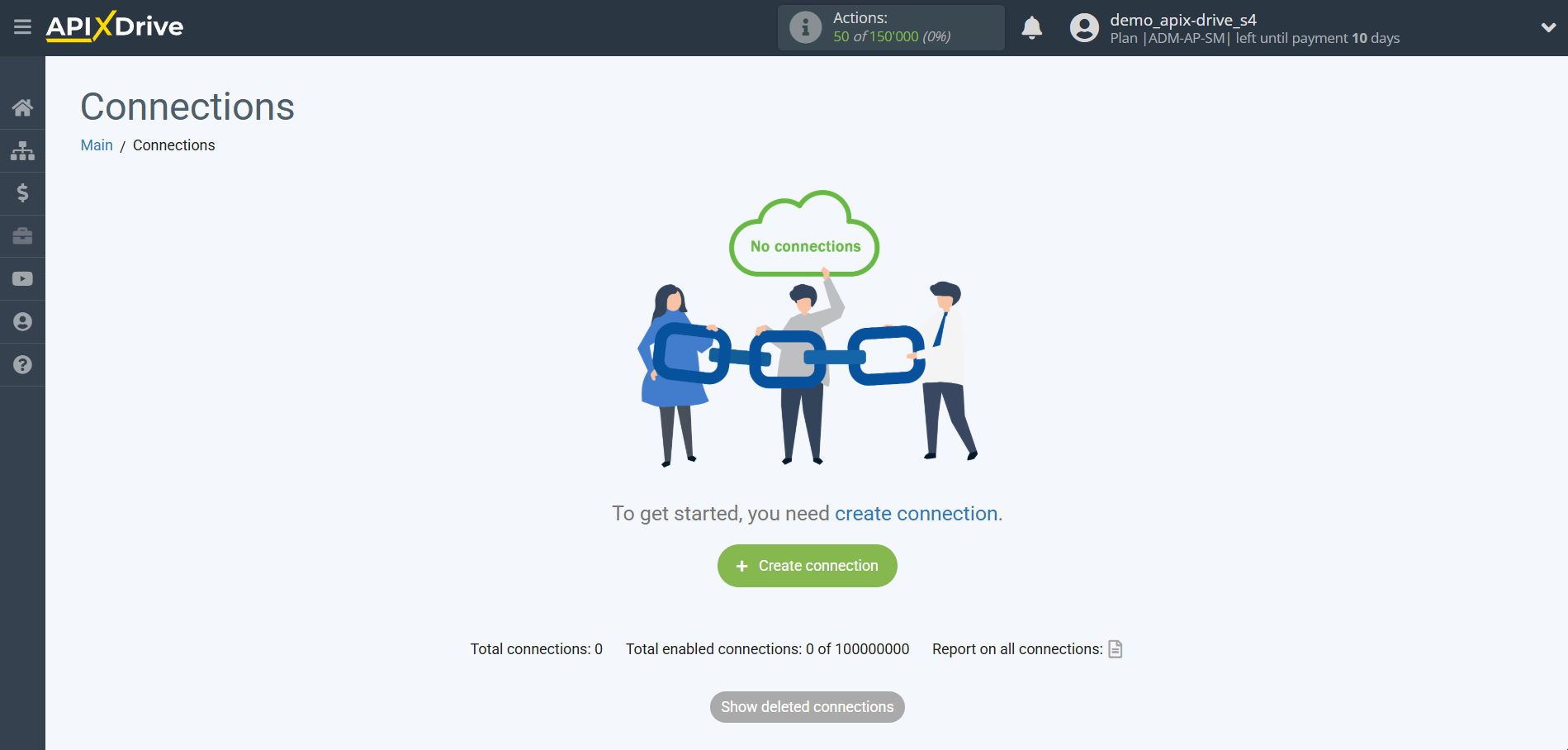

First, you need to create a new connection.

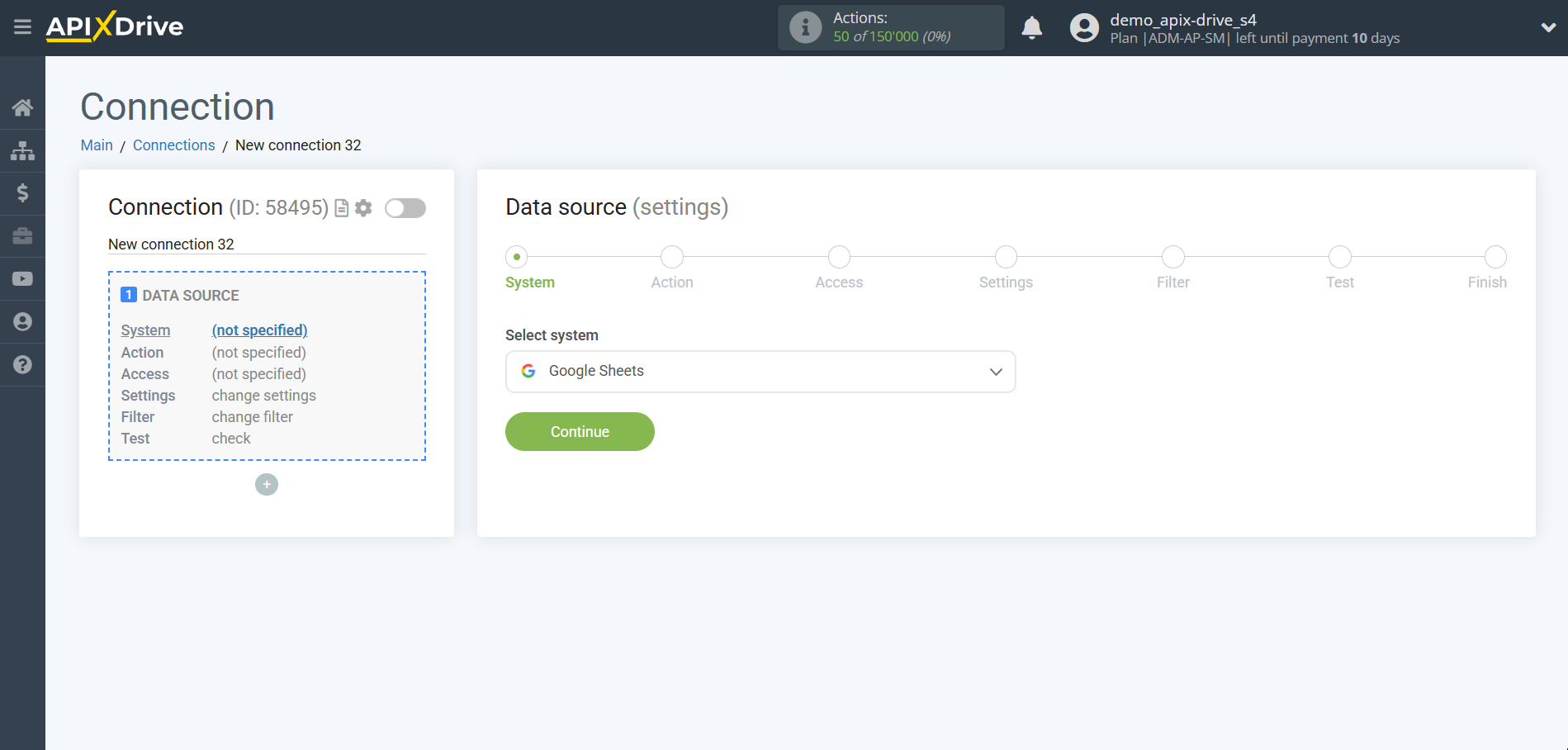

Select the Data Source system. In this case, you must specify Google Sheets.

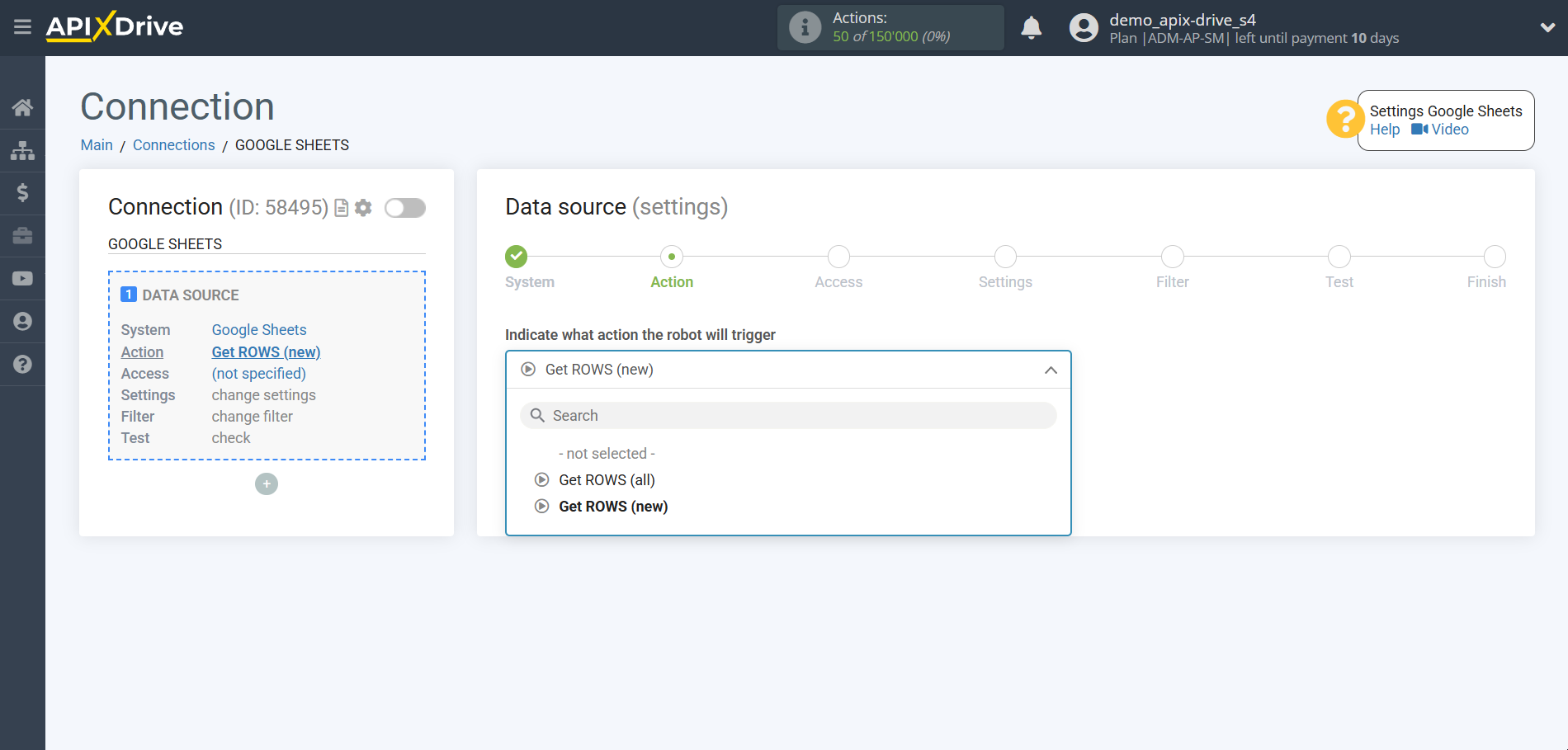

Next, you need to specify the action "Get ROWS (new)".

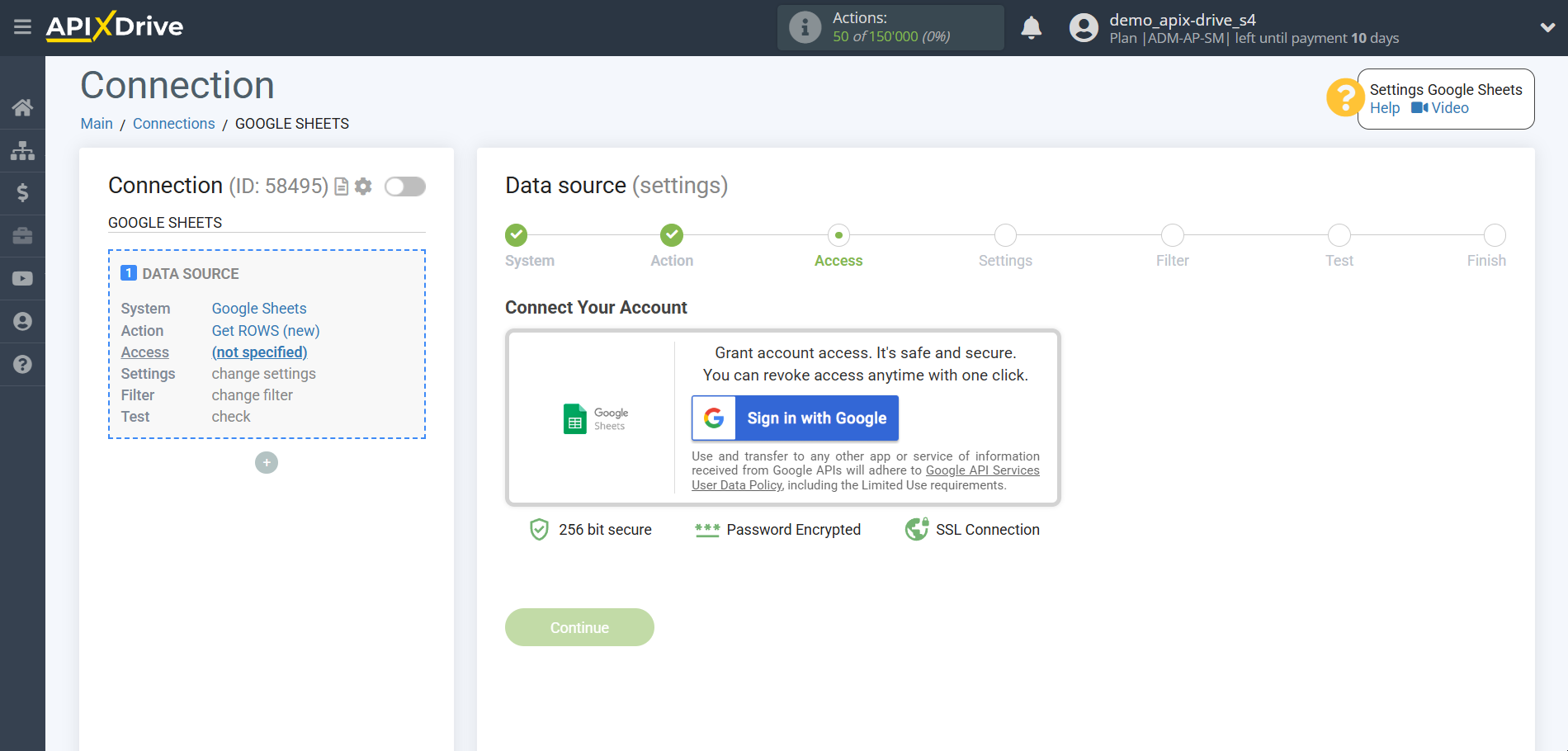

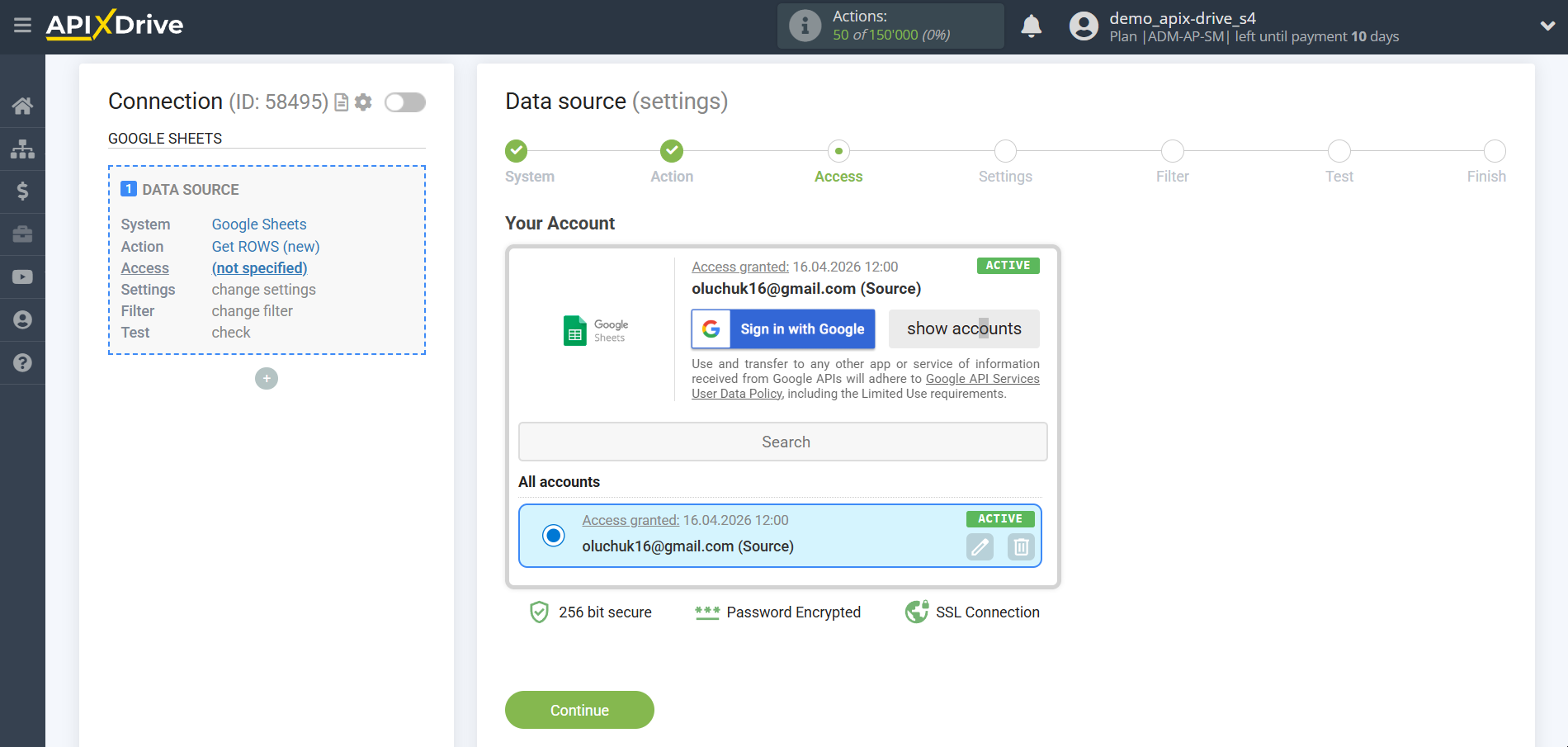

The next step is to select the Google Sheets account from which the data will be retrieved.

If there are no logins connected to the system, click "Connect account".

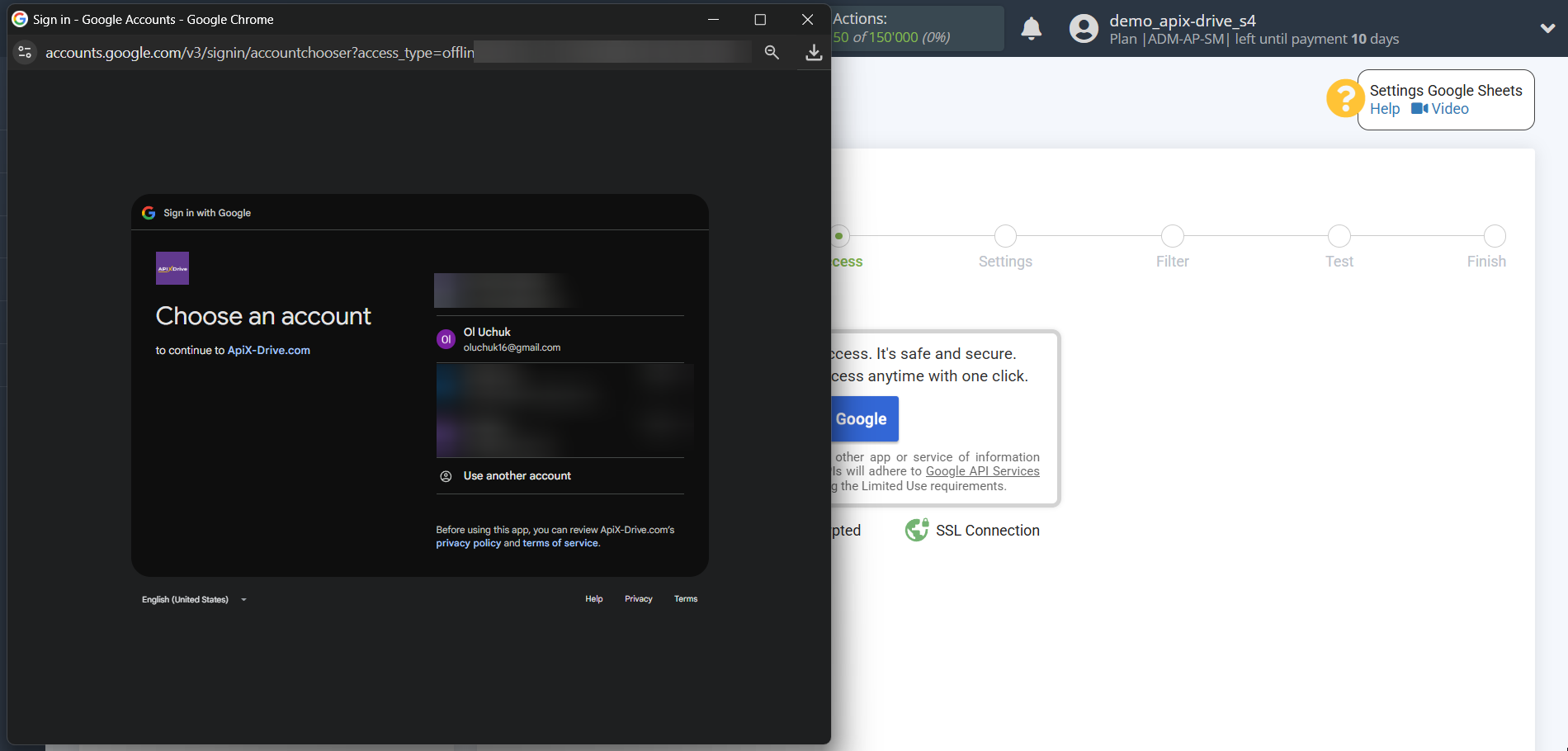

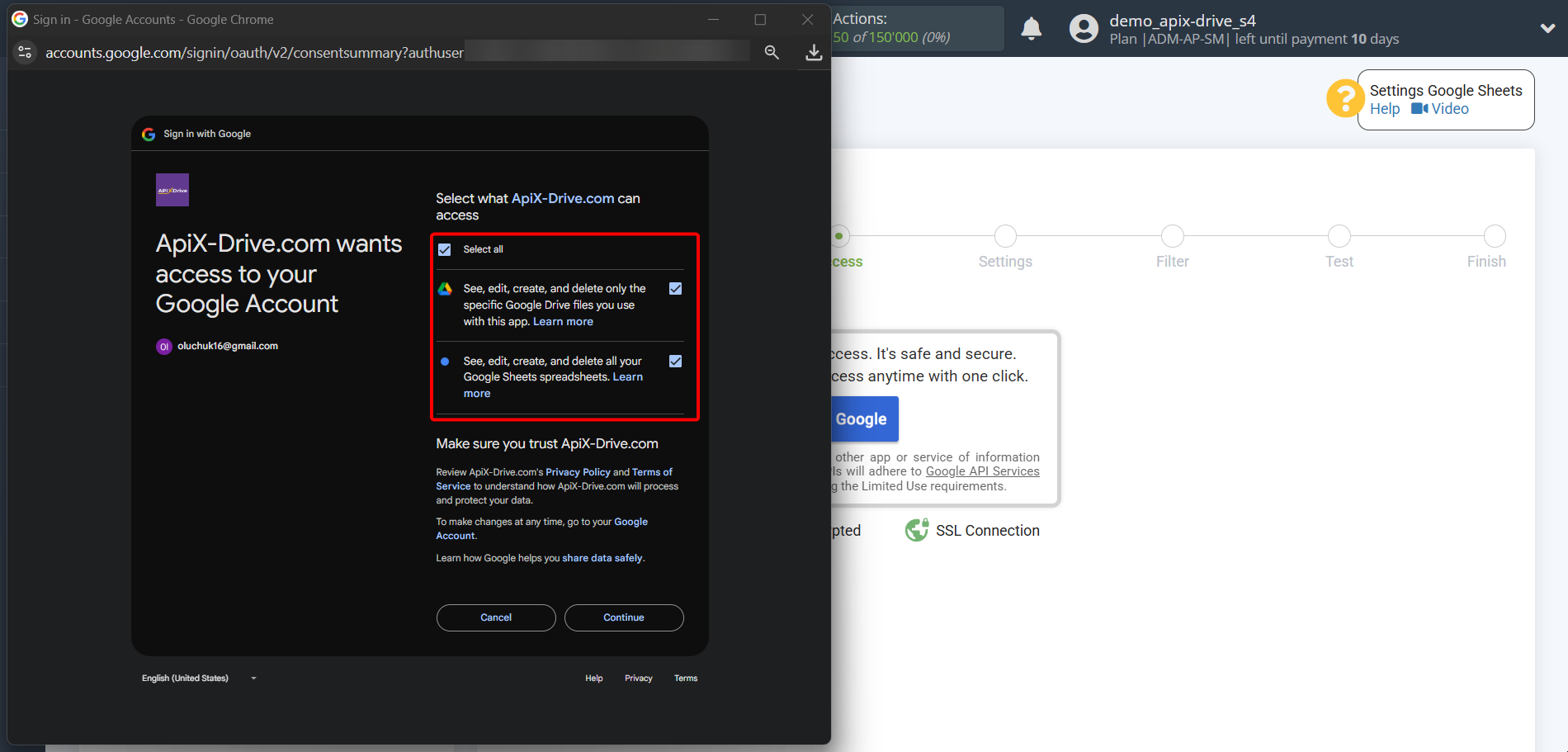

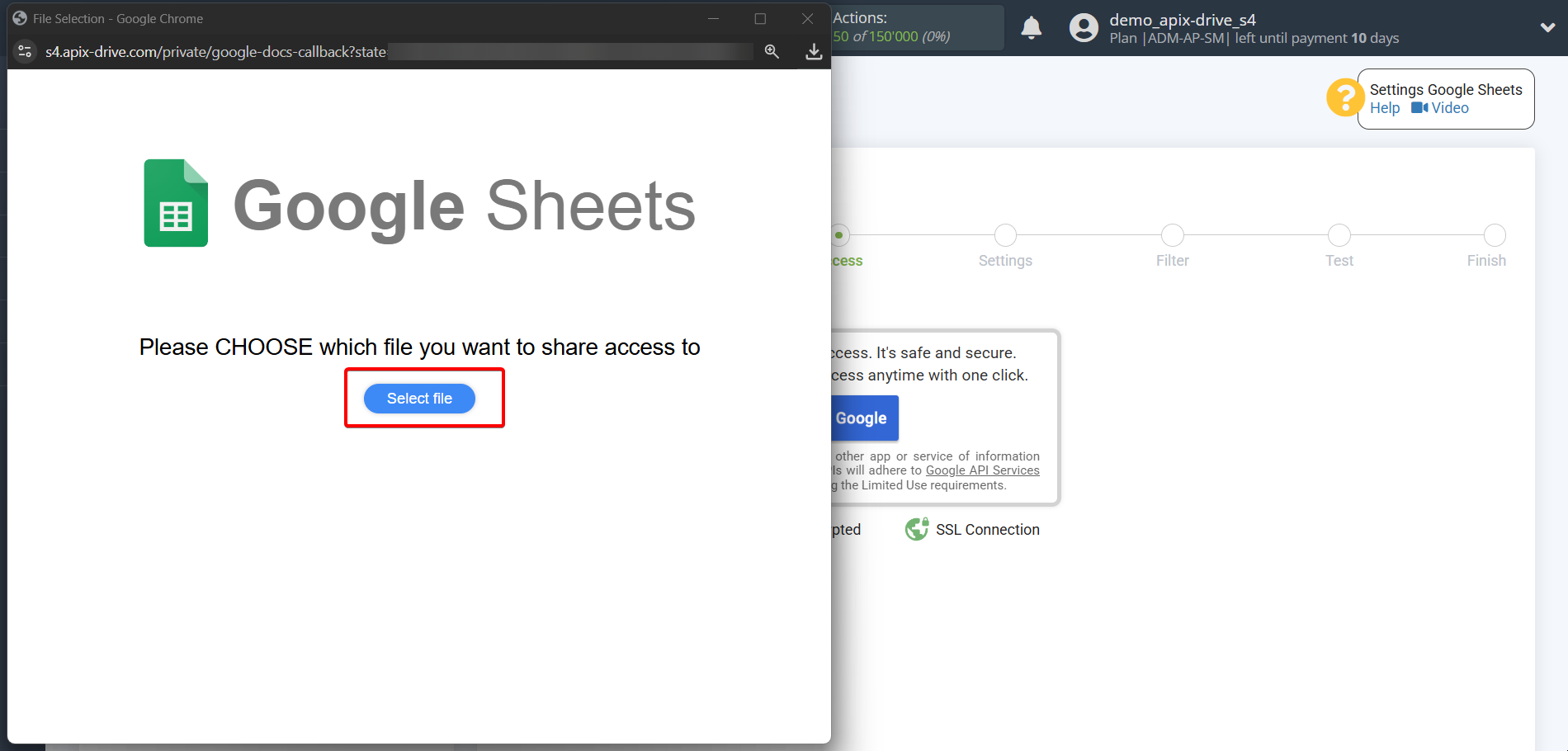

Select which account you want to connect to ApiX-Drive and provide all permissions to work with this account.

When the connected account appears in the "active accounts" list, select it for further work.

Attention! If your account is on the "inactive accounts" list, check your access to this login!

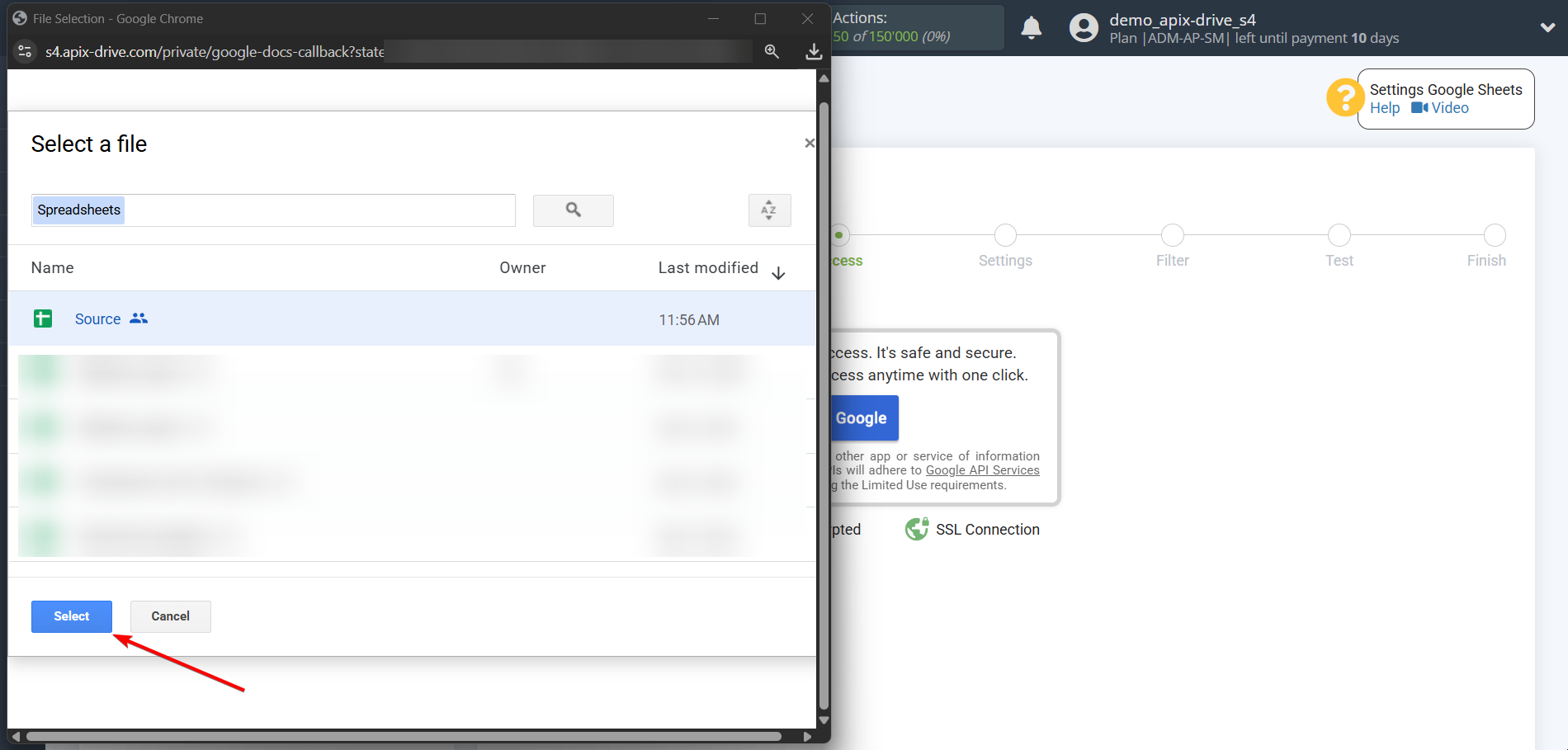

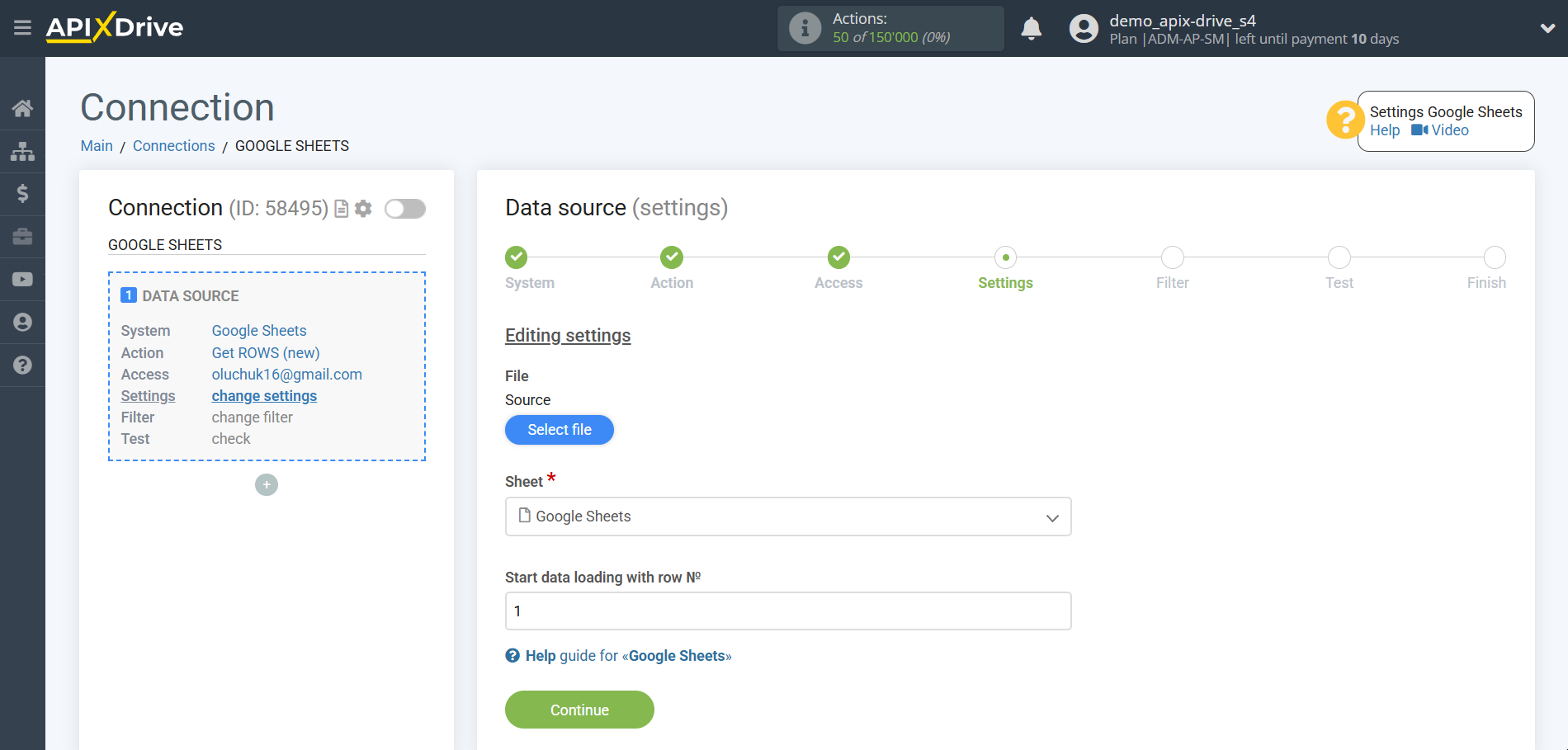

Select the Google Sheets table and sheet where the data you need is located.

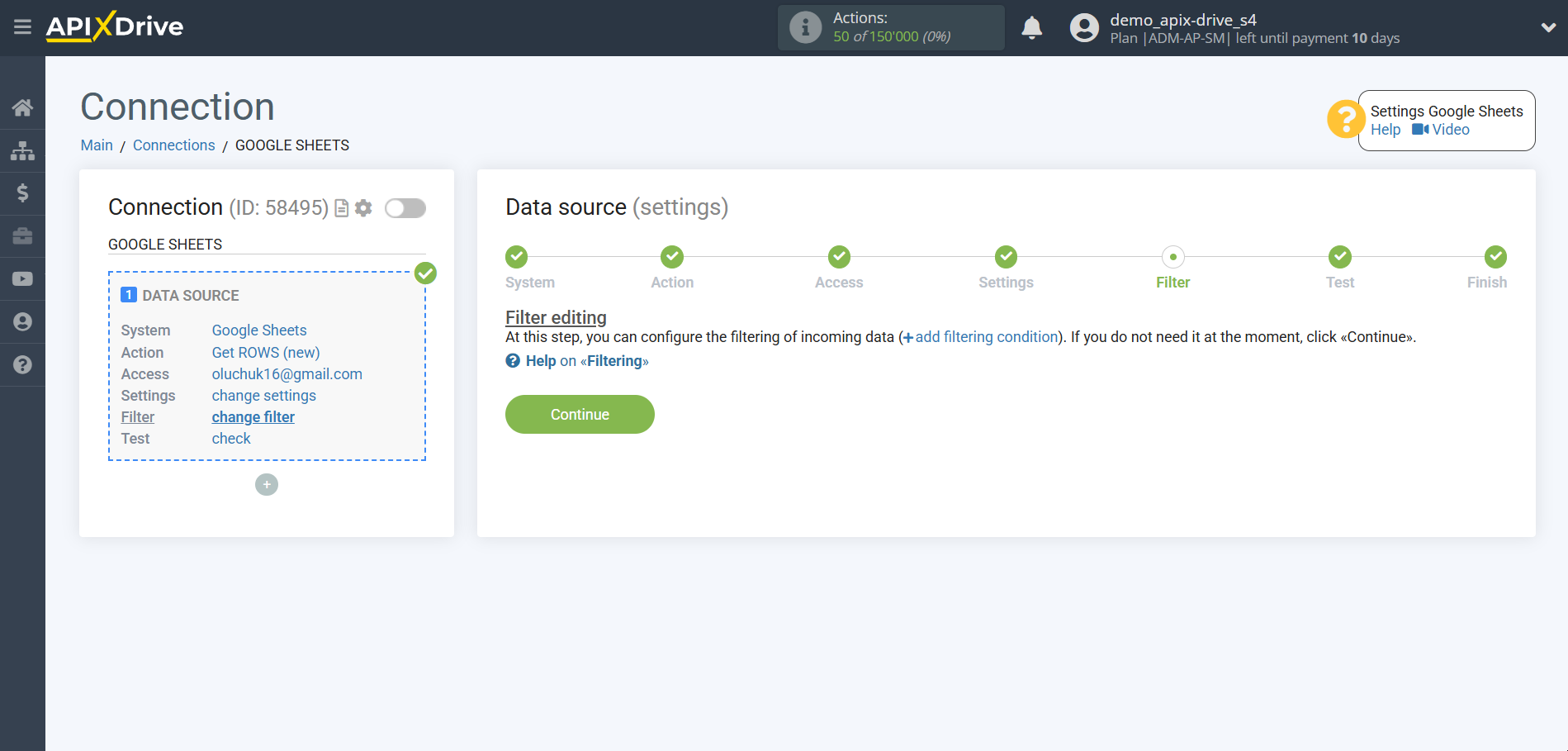

If necessary, you can set up a Data Filter, or click "Continue" to skip this step.

To find out how to set up the Data Filter, follow the link: https://apix-drive.com/en/help/data-filter

Now you can see the test data for one of the rows in your Google Sheets.

If you want to update the test data - click "Load test data from Google Sheets".

If you want to change the settings - click "Edit" and you will go back one step.

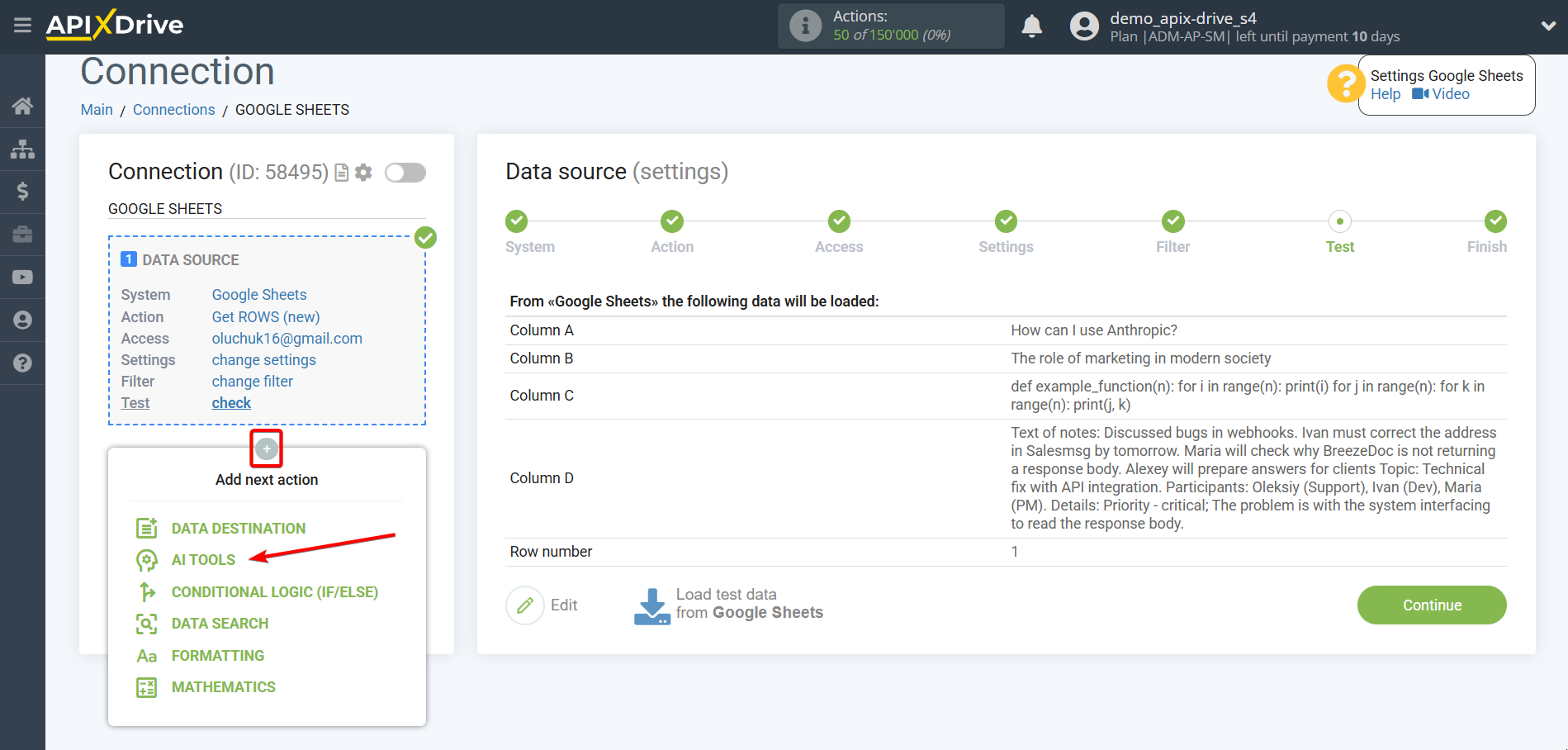

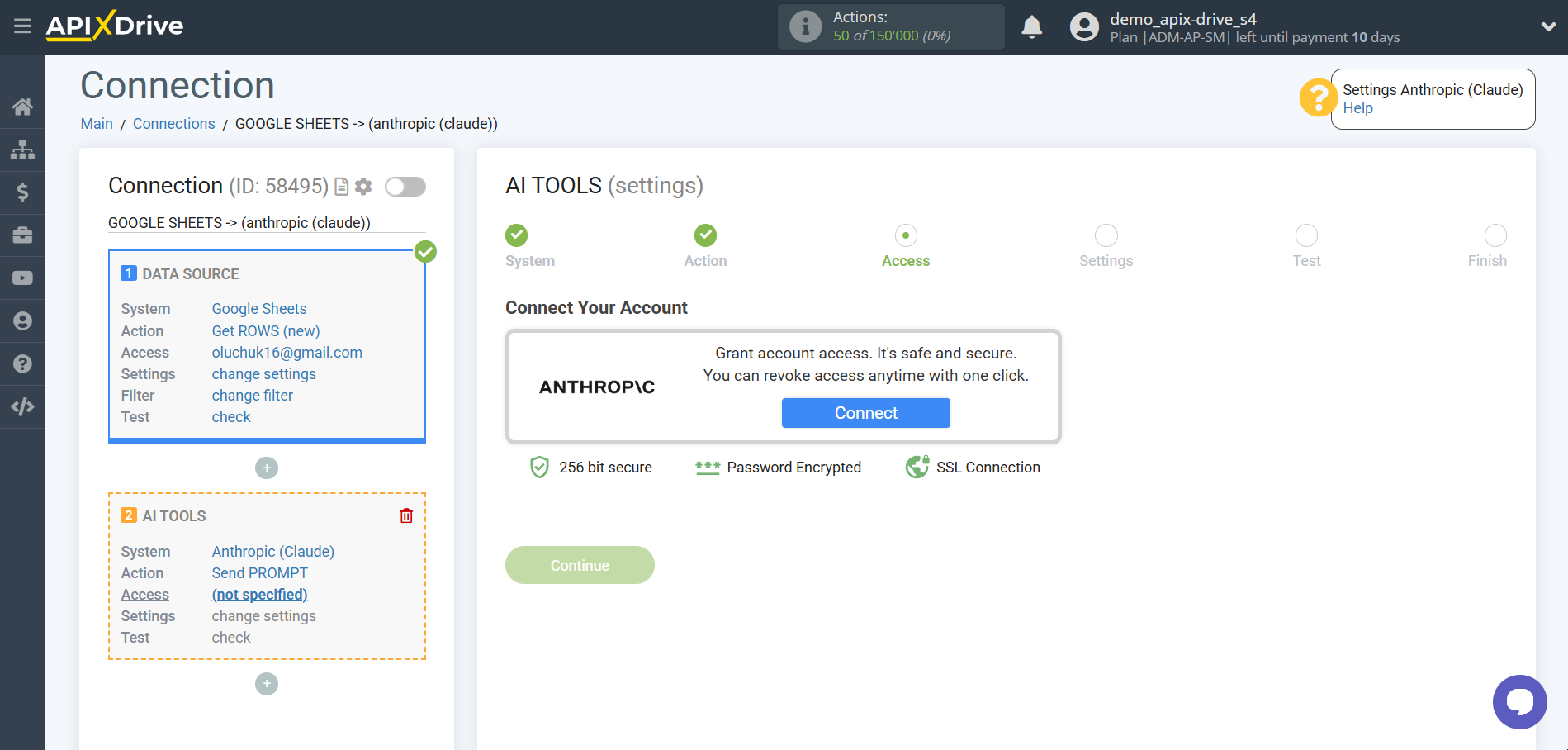

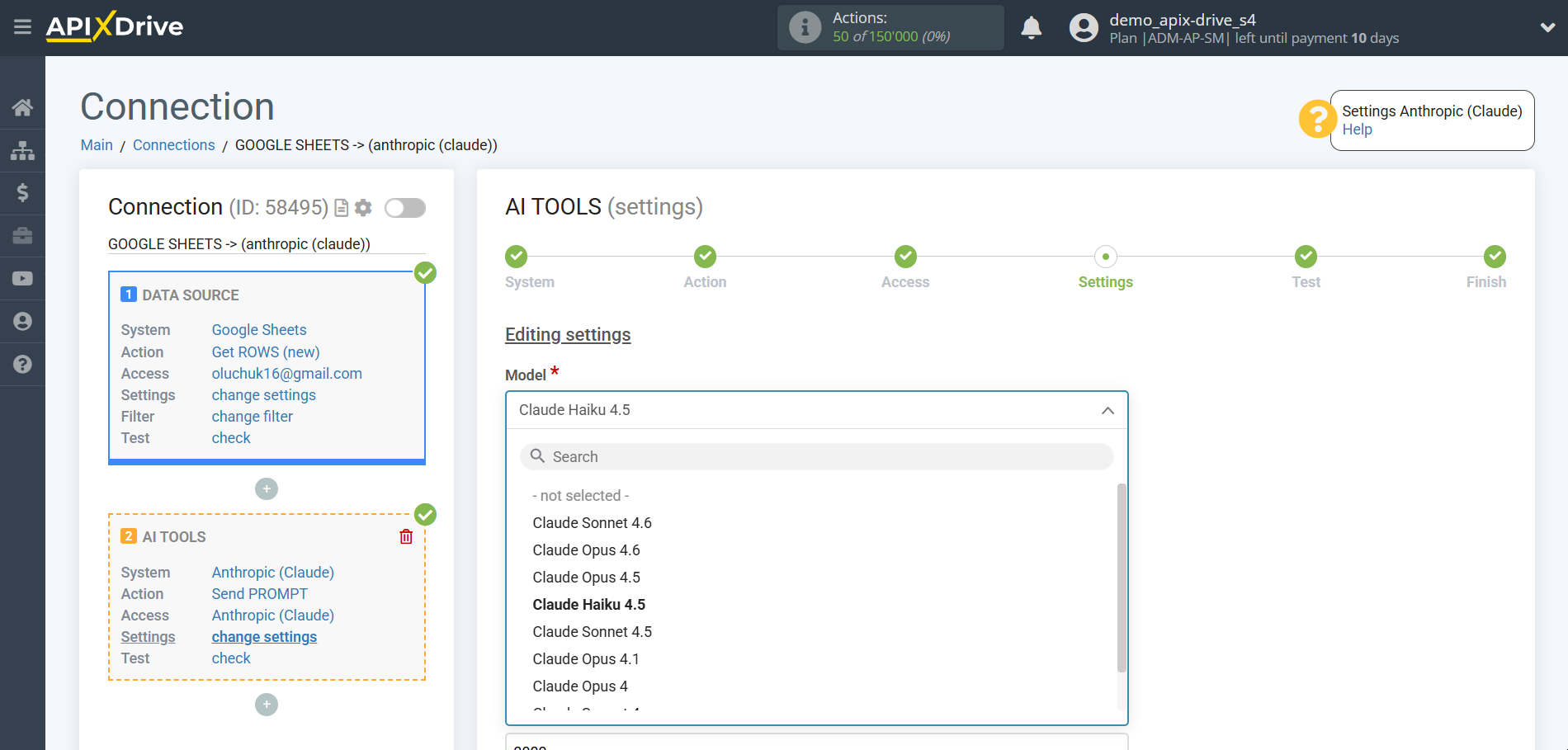

Now let's connect the additional AI TOOLS block. To do this, click the "+" button and select "Anthropic" from the list.

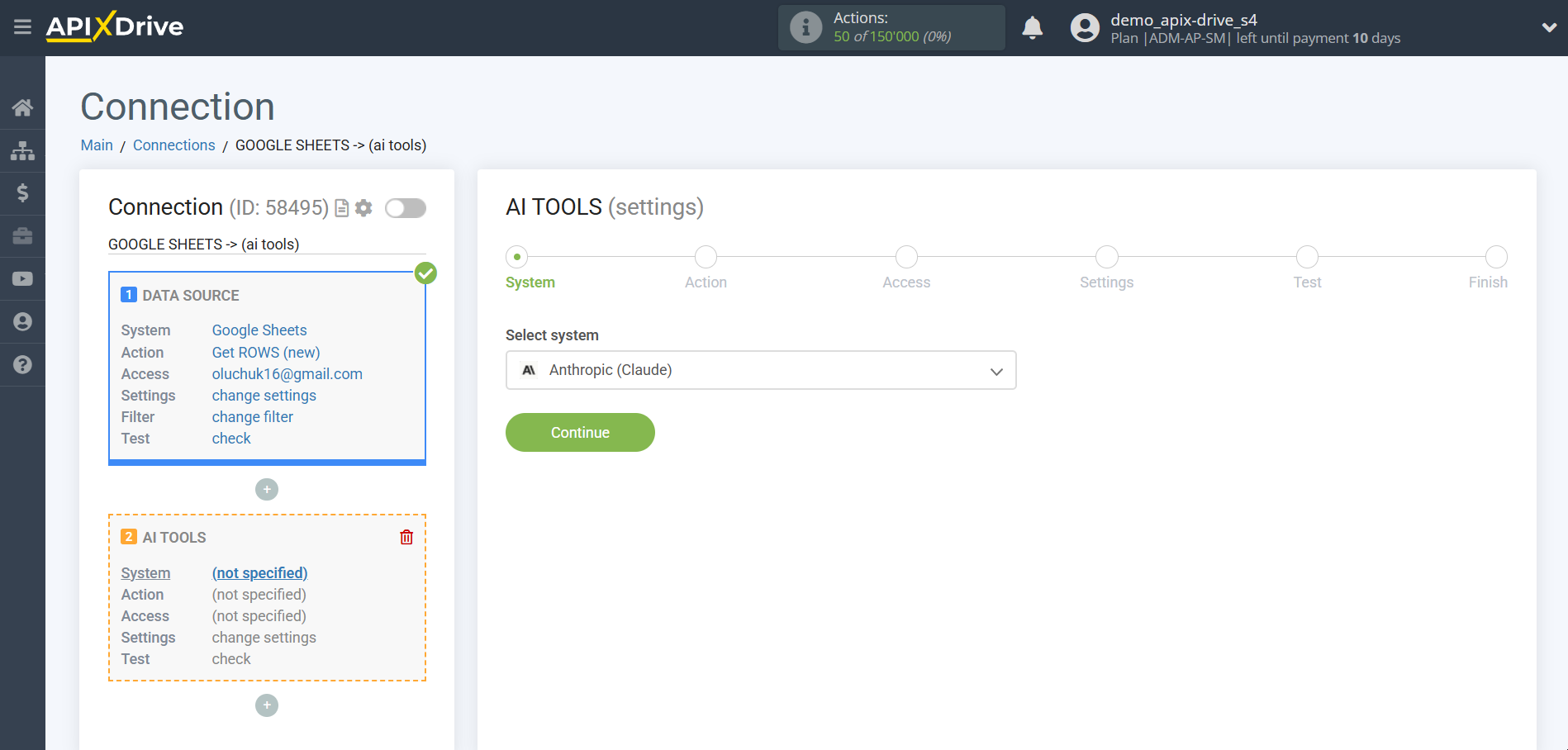

Setting up Anthropic

Select "Anthropic" as the system in which the search will be performed.

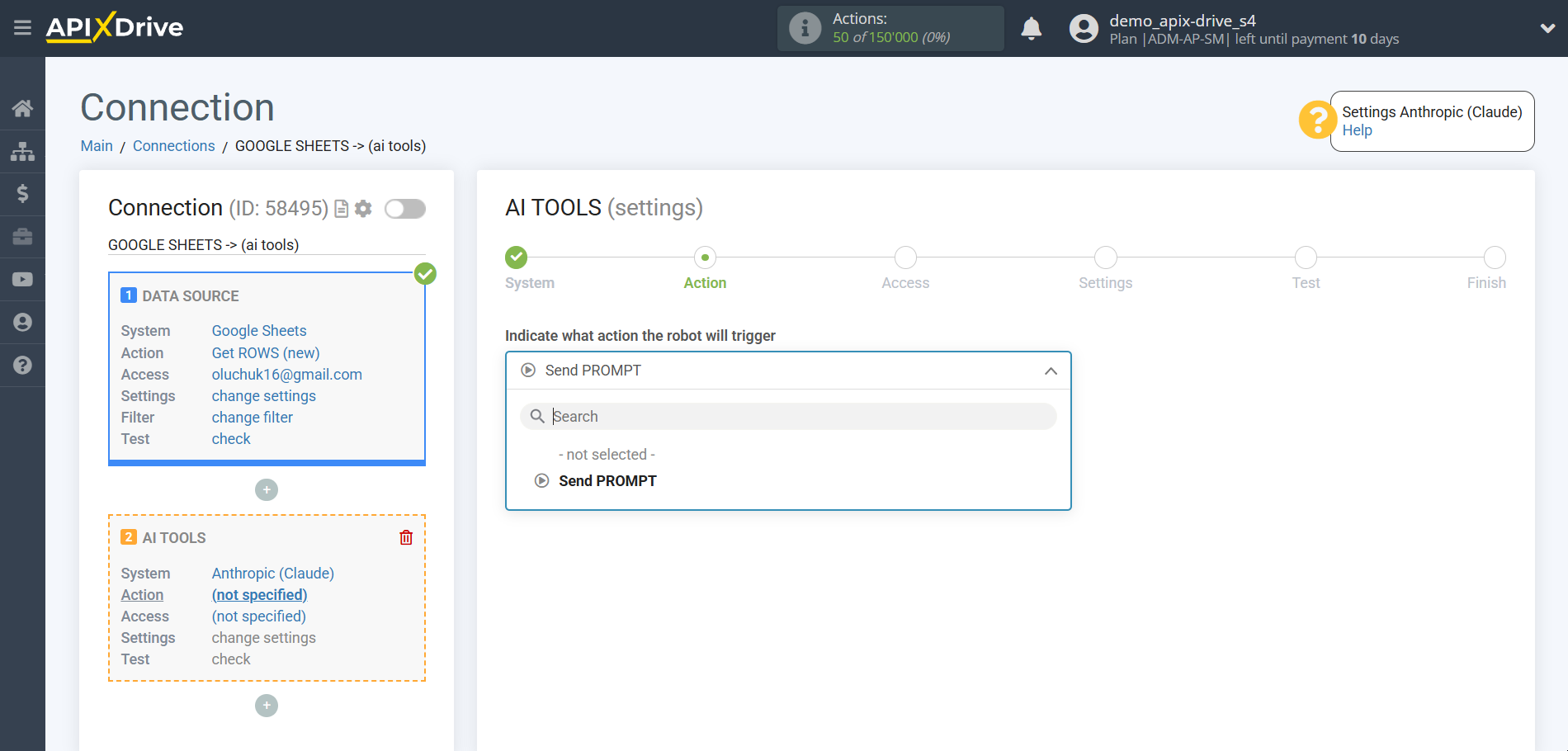

Next, you need to specify the action, for example, "Send PROMPT".

- Send PROMPT - allows you to send a request to Anthropic for generating, editing and translating data.

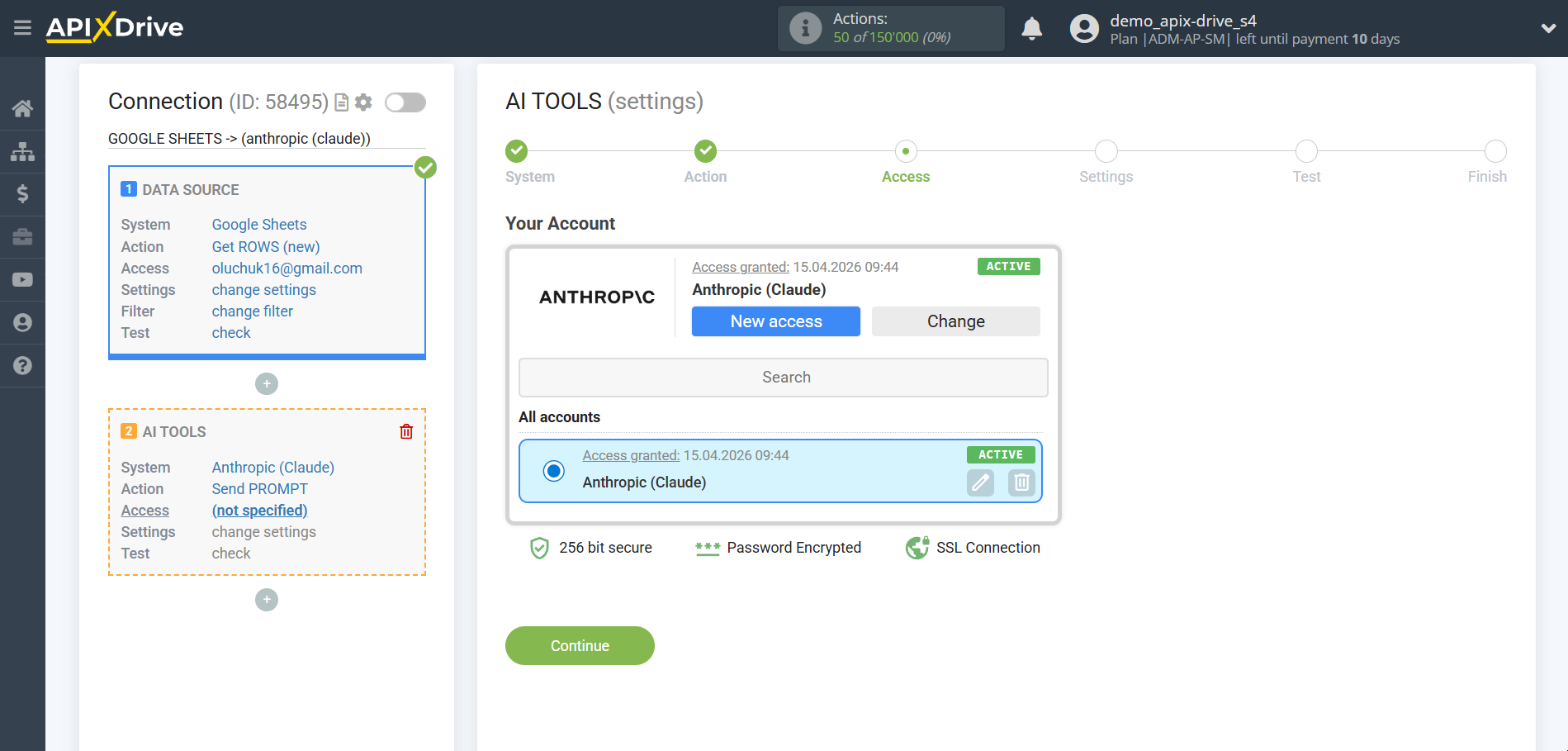

The next step is to select an Anthropic account.

If there are no logins connected to the ApiX-Drive system, click “Connect account”.

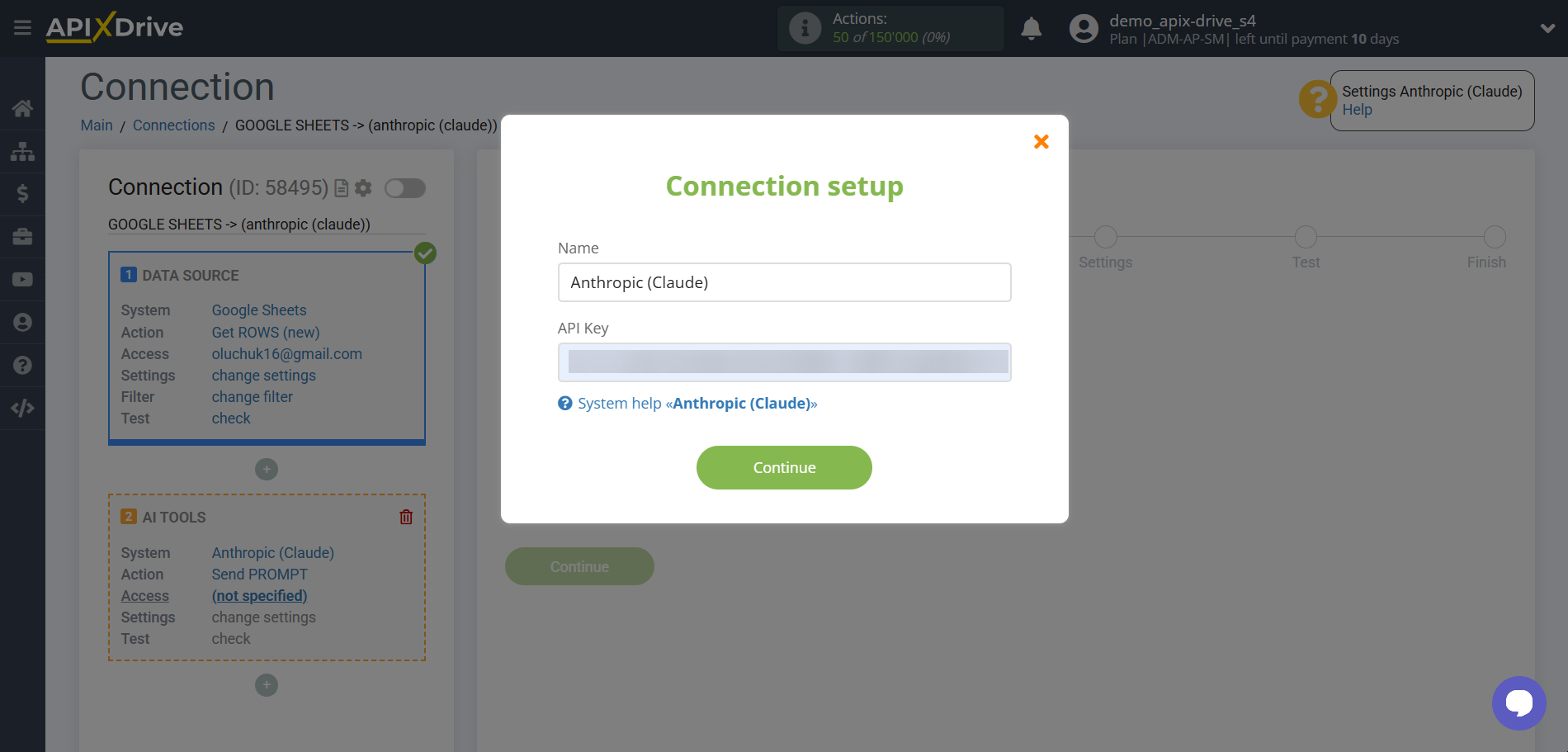

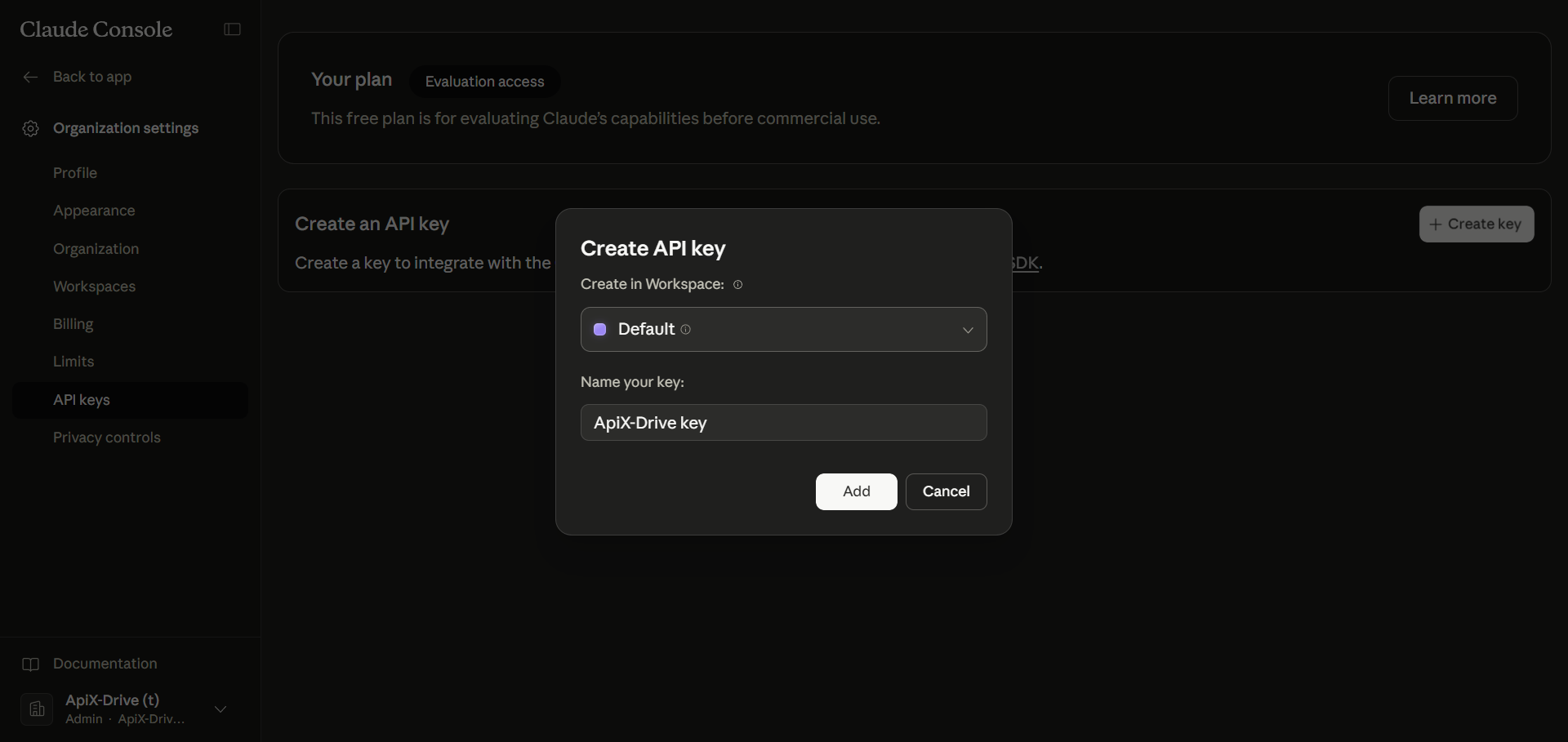

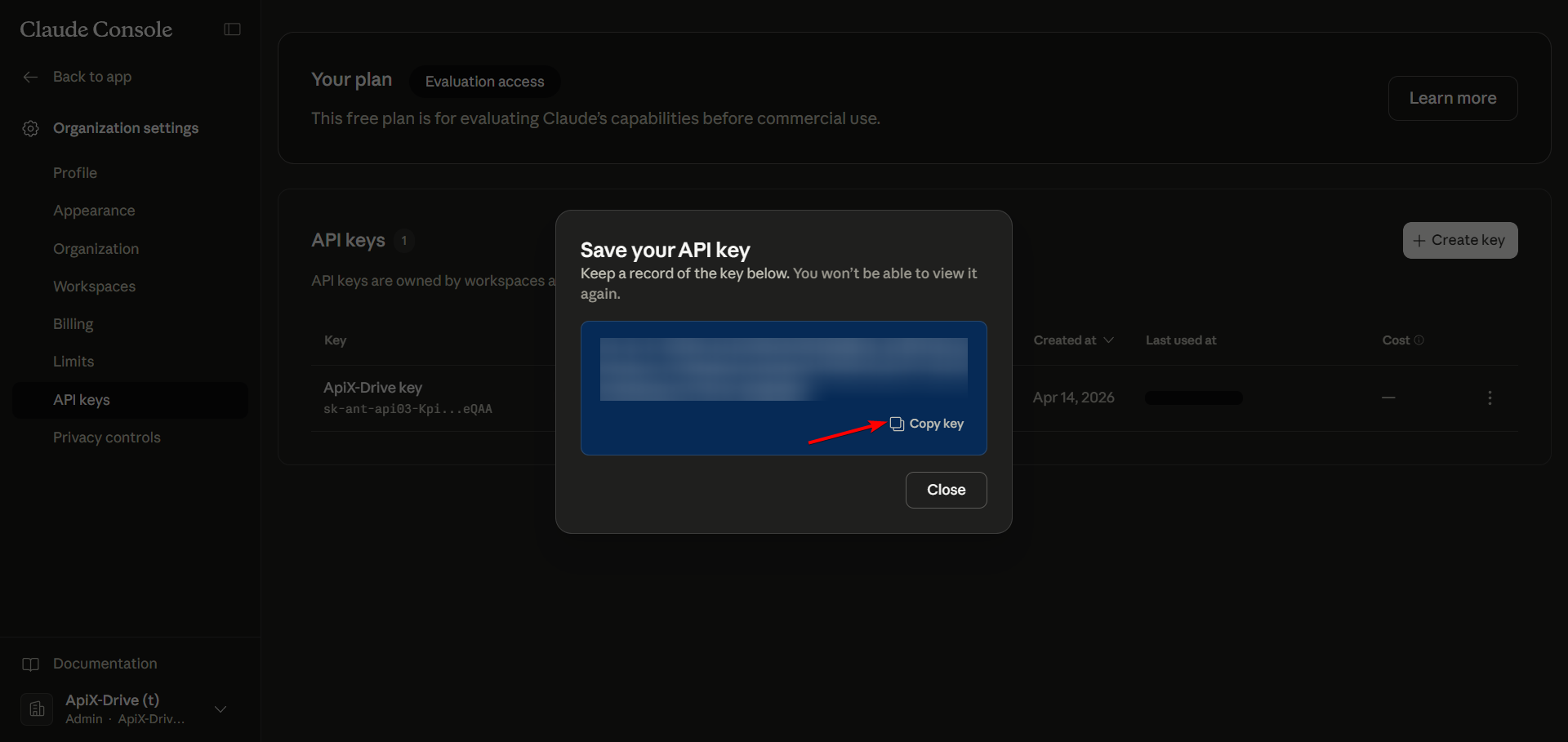

Enter the API key found in your Anthropic account settings.

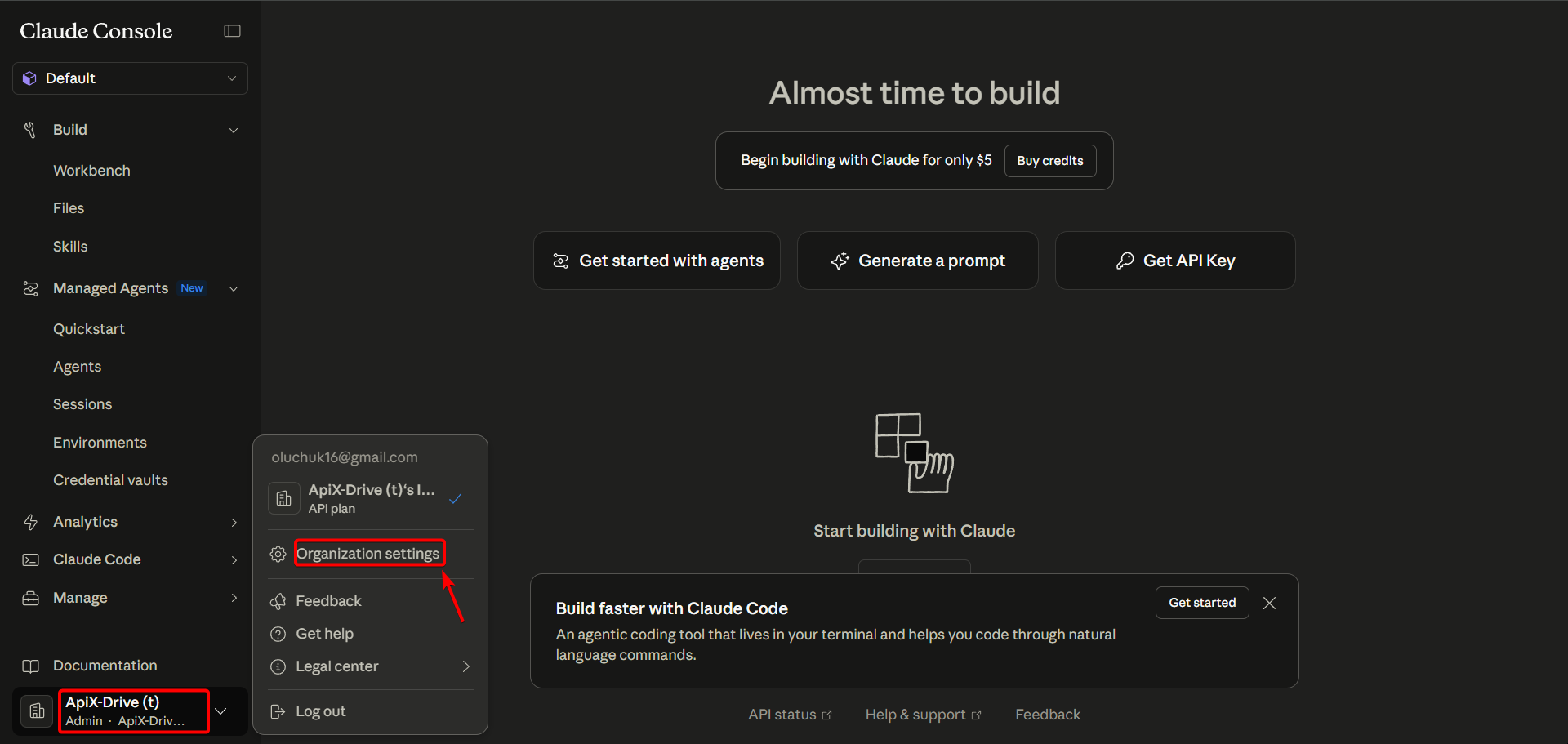

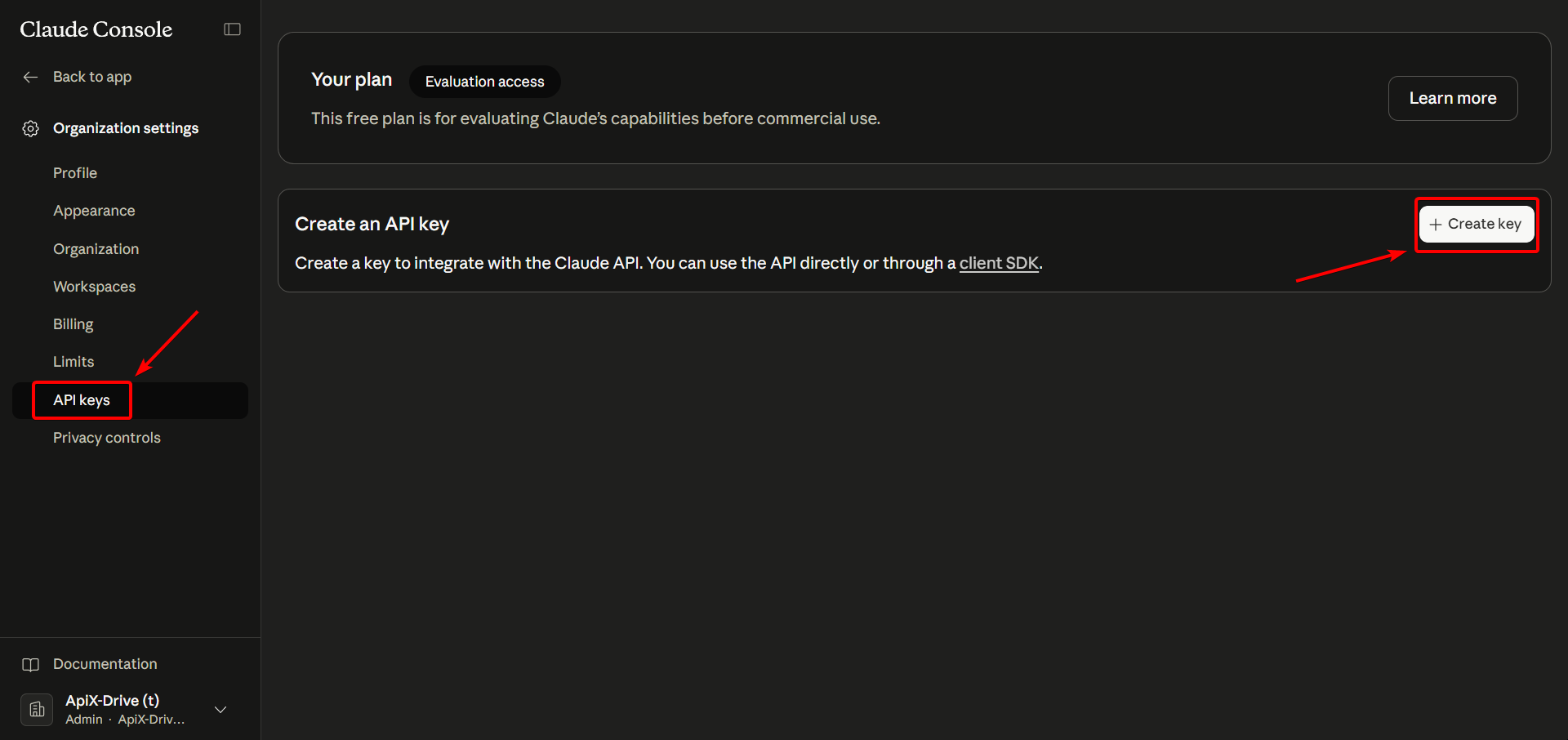

Go to your Anthropic account, click your account tab in the lower right corner, then open "Organization settings" and "API keys", click "Create API key" and enter a name for the key. Copy the API key and paste it into the corresponding field of the account connection window in ApiX-Drive.
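
For reference, the key you paste into ApiX-Drive is the same one you would send to Anthropic's Messages API yourself. The sketch below, which uses only Python's standard library, builds (but does not send) such a request: the key travels in the `x-api-key` header together with the required `anthropic-version` header. `build_messages_request` is a hypothetical helper, `YOUR_API_KEY` is a placeholder and the model ID is only an example; the source does not describe how ApiX-Drive itself calls the API.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"


def build_messages_request(api_key: str, model: str, prompt: str,
                           max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a Messages API request authenticated with api_key."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,               # the key created under "API keys"
            "anthropic-version": "2023-06-01",  # API version header required by Anthropic
            "content-type": "application/json",
        },
        method="POST",
    )


# Placeholder key and example model ID; check current IDs in Anthropic's models overview.
req = build_messages_request("YOUR_API_KEY", "claude-sonnet-4-5", "Say hello")
# Sending it requires network access and a valid key:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["content"][0]["text"])
```

If the key is wrong or revoked, the API rejects such a request, which is the usual reason an account ends up on the "inactive accounts" list.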

Click "Save" and select the connected Anthropic account from the drop-down list.

When the connected account appears in the "active accounts" list, select it for further work.

Attention! If your account is on the "inactive accounts" list, check your access to this login!

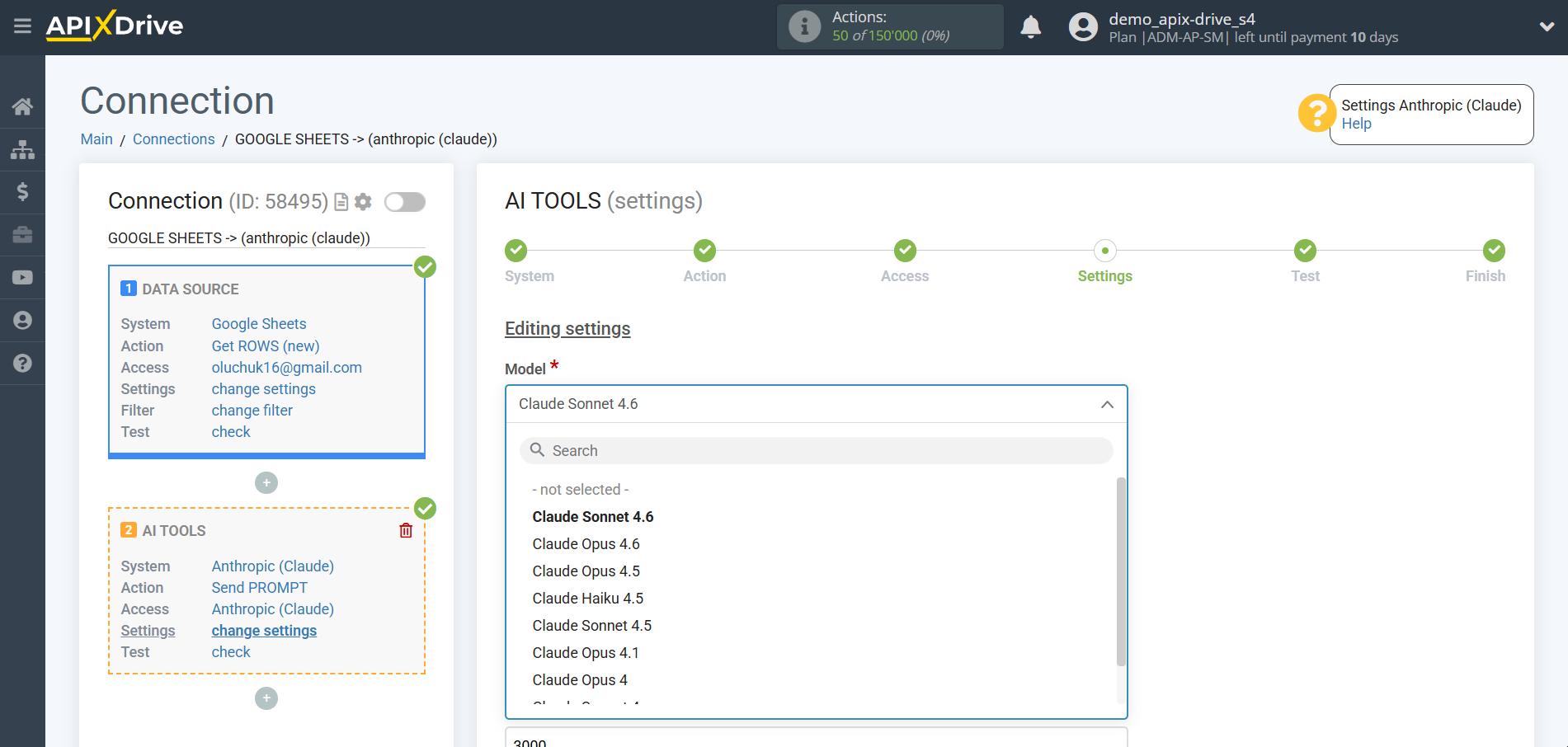

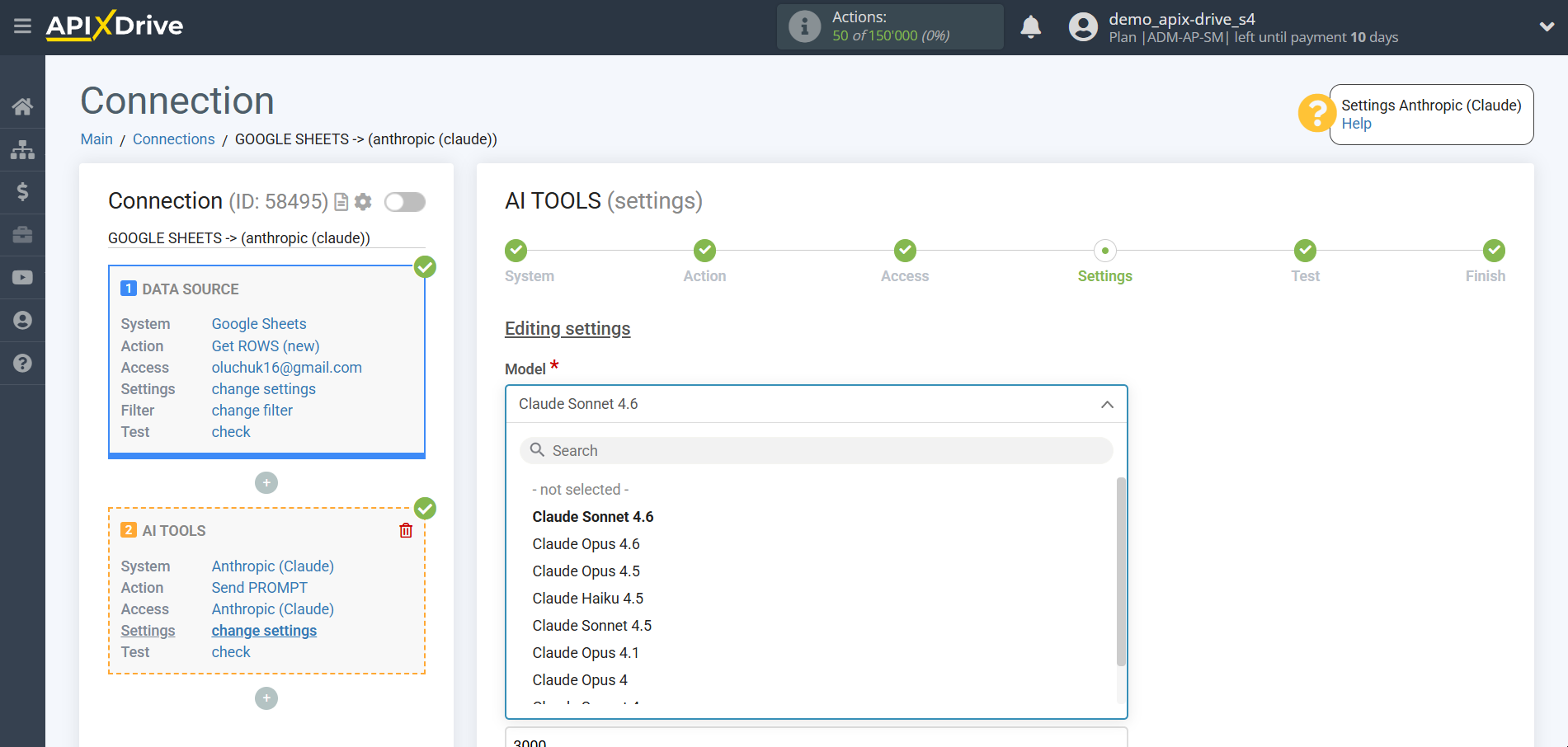

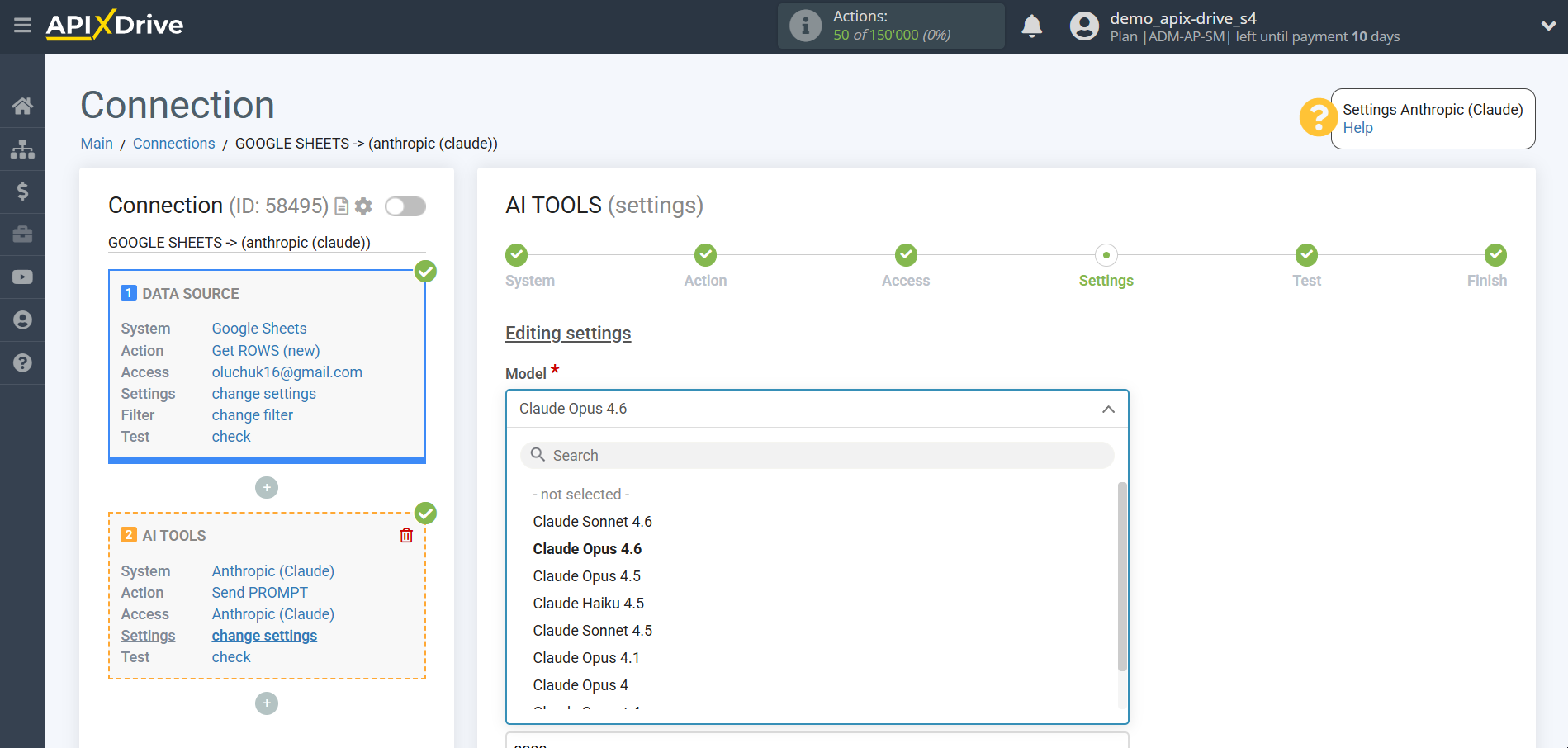

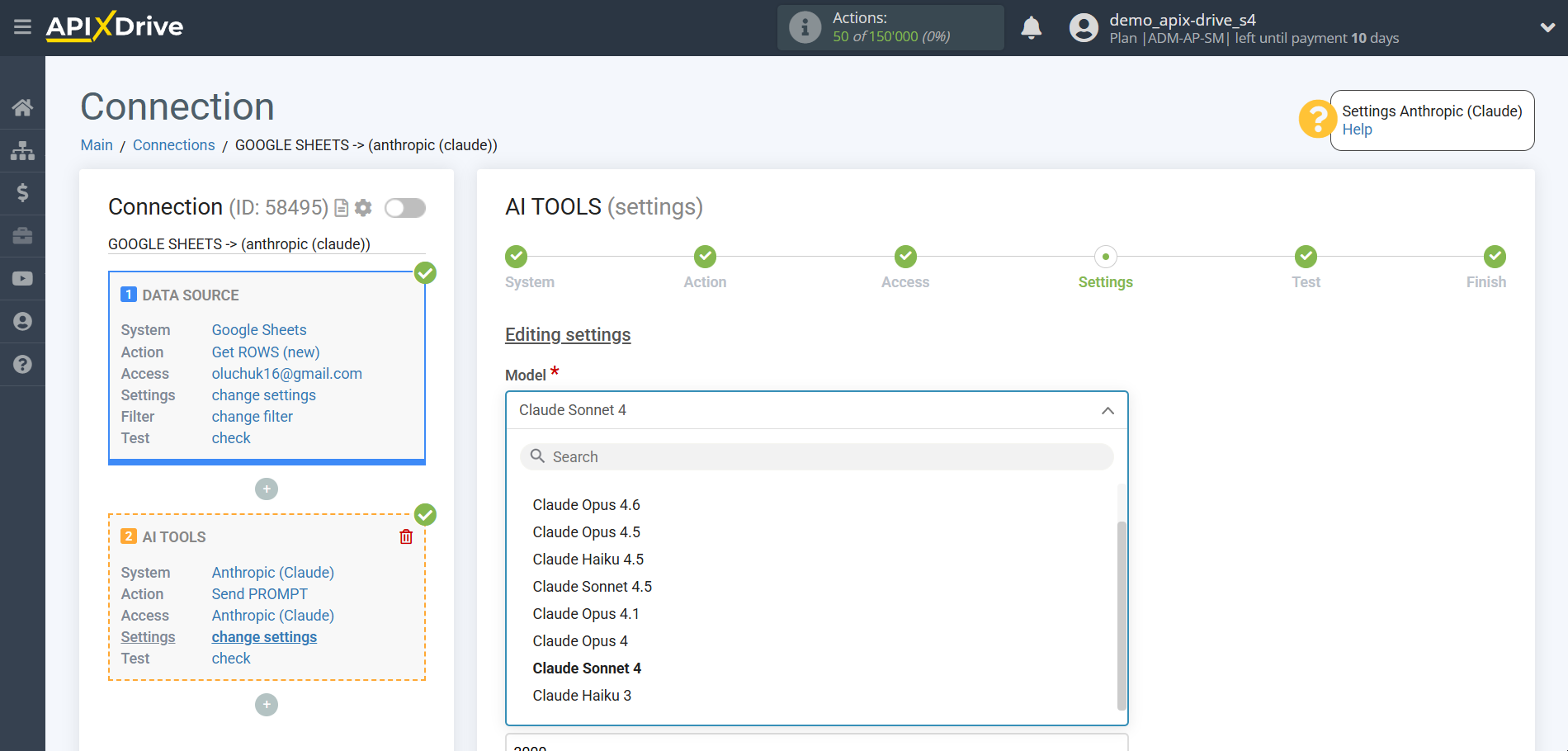

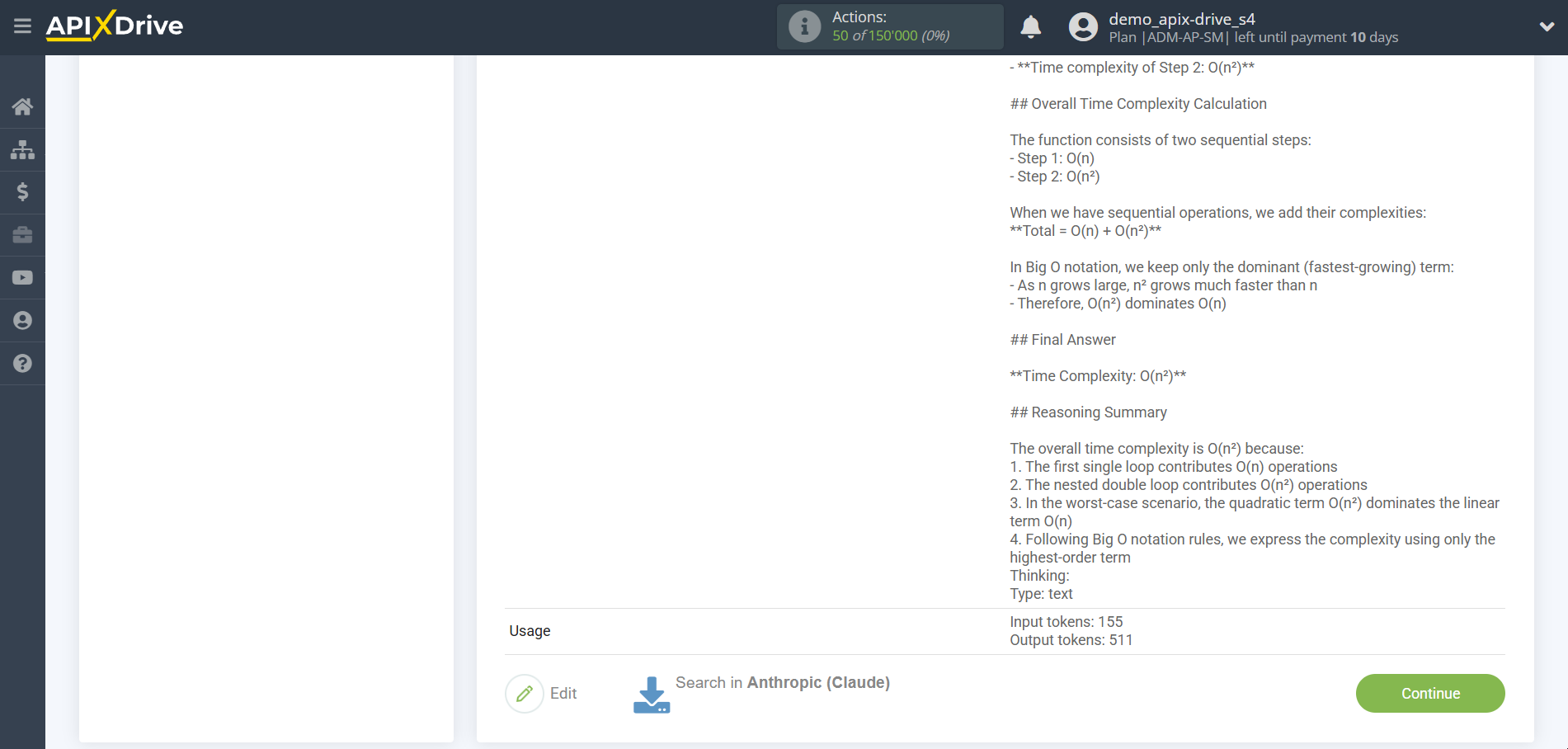

Now you need to select the Anthropic Model. The choice of model depends on your application.

Claude Sonnet 4.6 - is the most advanced balanced model in the Claude 4 line, combining flagship-level intelligence with high speed. It brings significant improvements in programming, planning and analysis of large volumes of data. The model supports deep reasoning, which helps it achieve high accuracy in complex business processes while keeping an optimal balance between quality and speed.

Claude Opus 4.6 - is the most powerful and intelligent model of the fourth generation, designed for the most complex tasks. It stands out for deep logical reasoning, scientific analysis and strategic planning. Opus 4.6 can process very large contexts and generate detailed, structured responses, which makes it well suited to demanding professional AI applications.

Claude Opus 4.5 - is the previous flagship model, focused on deep analysis and precise execution of complex instructions. It performs well at maintaining long contexts and working with structured data. The model is ideal for tasks that require maximum attention to detail, accurate logical conclusions and stable results.

Claude Haiku 4.5 - is the fastest and most efficient model in the Claude 4 family. It is optimized for very fast responses and for processing large streams of information in real time. Despite its compact size, Haiku 4.5 offers a high level of intelligence, which makes it a strong choice for automating routine tasks, quickly classifying data and producing high-quality content with minimal delay.

Claude Sonnet 4.5 - is a professional model specially tuned for developers and technical specialists. It offers increased accuracy in writing software code, technical documentation and logic modeling. The model provides fast iteration and stable work with large context windows, while maintaining high performance in complex technical scenarios.

Claude Opus 4.1 - is a specialized version of the flagship model with improved long-term memory and context stability. It is designed to support complex multi-step operations where it is important to maintain the consistency of reasoning over long interactions. The model demonstrates high reliability in professional analytical scenarios and programming.

Claude Opus 4 - is the fourth-generation base model with the highest level of intelligence. It laid the foundation for the entire Claude 4 line, offering a significant leap in understanding natural language and complex concepts. The model offers in-depth subject understanding, high creativity and high-quality content of any complexity.

Claude Sonnet 4 - is the fourth-generation foundation model, providing the perfect balance between speed and intelligence. It is designed as a universal solution for a wide range of tasks: from writing texts to basic analytics. The model offers high reliability, accurate following of instructions and predictability of results.

Claude Haiku 3 - is the fastest and most compact model of the earlier Claude 3 generation, providing almost instant responses. It is the most economical option in the list, ideal for simple tasks that require high speed, minimal latency and basic context understanding.

Pay attention! AI services typically use a payment model based on the number of API requests and the amount of tokens used. We recommend setting usage limits and regularly checking the request statistics in your account to avoid unexpected costs.
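
To put that advice into numbers: Anthropic bills input tokens and output tokens at separate per-million-token rates, and when Thinking Mode is on, the reasoning tokens are billed as output tokens too. The sketch below estimates a monthly bill for a connection that sends one request per row. `estimate_monthly_cost` is a hypothetical helper, and the prices and token counts in the usage line are illustrative placeholders, not Anthropic's actual rates; take real figures from Anthropic's pricing page and your usage statistics.

```python
def estimate_monthly_cost(rows_per_day: int, input_tokens: int, output_tokens: int,
                          input_price_per_mtok: float, output_price_per_mtok: float,
                          days: int = 30) -> float:
    """Estimate the cost of one request per row, in the currency of the prices."""
    # Each request costs its input and output tokens at their per-million rates.
    per_request = (input_tokens * input_price_per_mtok
                   + output_tokens * output_price_per_mtok) / 1_000_000
    return per_request * rows_per_day * days


# Illustrative figures: 200 rows/day, 500 input and 800 output tokens per row,
# 2.0 and 10.0 per million tokens.
cost = estimate_monthly_cost(200, 500, 800, 2.0, 10.0)
print(f"{cost:.2f}")  # 54.00
```

Because output tokens are usually priced higher than input tokens, long answers and high Thinking Levels tend to dominate the bill.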

Answering questions from Claude's knowledge and generating text

In this case, select "Claude Sonnet 4.6".

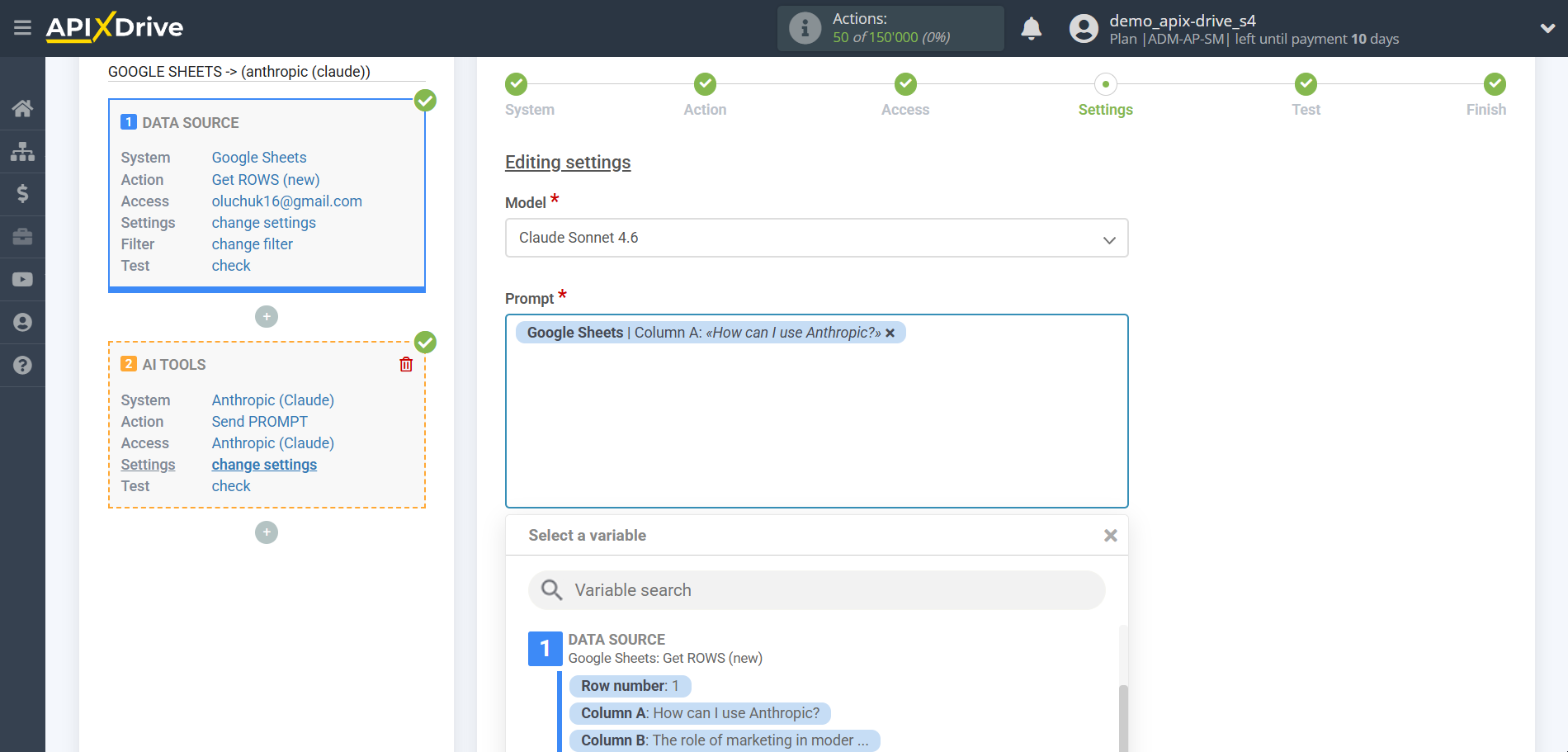

- Prompt - in this field, select the Data Source variable that contains the request text to be sent to Anthropic; in our case, this is column “A”.

- Presence penalty - encourages the model to use a wider variety of tokens in the generated text. The value is subtracted from the score (logit) of every token that has already appeared in the text at least once, no matter how many times it occurred. A higher Presence penalty makes the model more likely to use tokens that are not yet in the generated text, that is, to introduce new words and topics.
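
For reference, OpenAI's API reference defines its `presence_penalty` as a fixed amount subtracted once from the logit of every token that has already appeared in the text, regardless of how many times (a separate frequency penalty scales with the count). Anthropic's Messages API reference does not list a presence-penalty parameter, so how ApiX-Drive applies this field to Claude requests is not documented here. The sketch below only illustrates the general technique on a toy vocabulary; `apply_presence_penalty`, the tokens and the logit values are all made up.

```python
def apply_presence_penalty(logits: dict[str, float], generated: list[str],
                           penalty: float) -> dict[str, float]:
    """Subtract `penalty` once from every token that already occurs in `generated`."""
    seen = set(generated)  # only presence matters, not how often a token occurred
    return {tok: value - penalty if tok in seen else value
            for tok, value in logits.items()}


# Toy example: "sales" was already generated (twice), so it drops below "revenue".
logits = {"sales": 2.0, "revenue": 1.5, "profit": 1.0}
adjusted = apply_presence_penalty(logits, ["sales", "sales", "grew"], penalty=1.0)
print(adjusted)  # {'sales': 1.0, 'revenue': 1.5, 'profit': 1.0}
```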

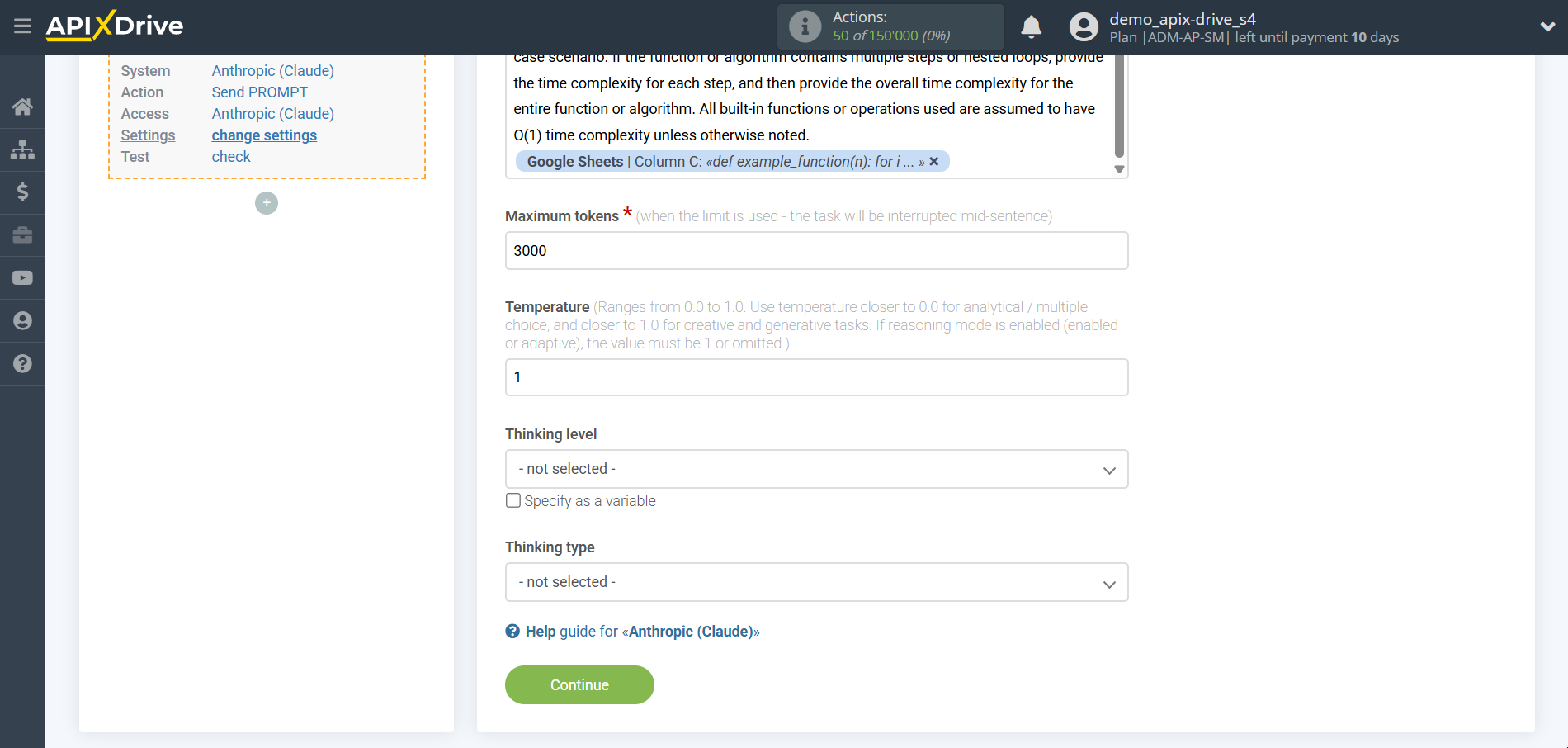

- Temperature - controls how random the output is. Anthropic's API accepts values from 0 to 1. Higher values, such as 0.8, make the output more varied, while lower values, such as 0.2, make it more focused and deterministic.
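
To see why lower values make the output more deterministic: in temperature sampling, the model's scores for candidate tokens are divided by the temperature before being turned into probabilities with a softmax. The sketch below applies this to three made-up scores; it illustrates the general sampling technique and is not Anthropic's internal implementation. Since a temperature of exactly 0 cannot be used as a divisor, the function accepts only positive values.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    top = max(scaled)                          # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]
focused = softmax_with_temperature(logits, 0.2)
varied = softmax_with_temperature(logits, 1.0)
print(round(focused[0], 3), round(varied[0], 3))  # 0.993 0.629
```

At 0.2 the top candidate takes almost all of the probability, so repeated runs give nearly the same answer; at 1.0 the other candidates keep a real chance of being picked.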

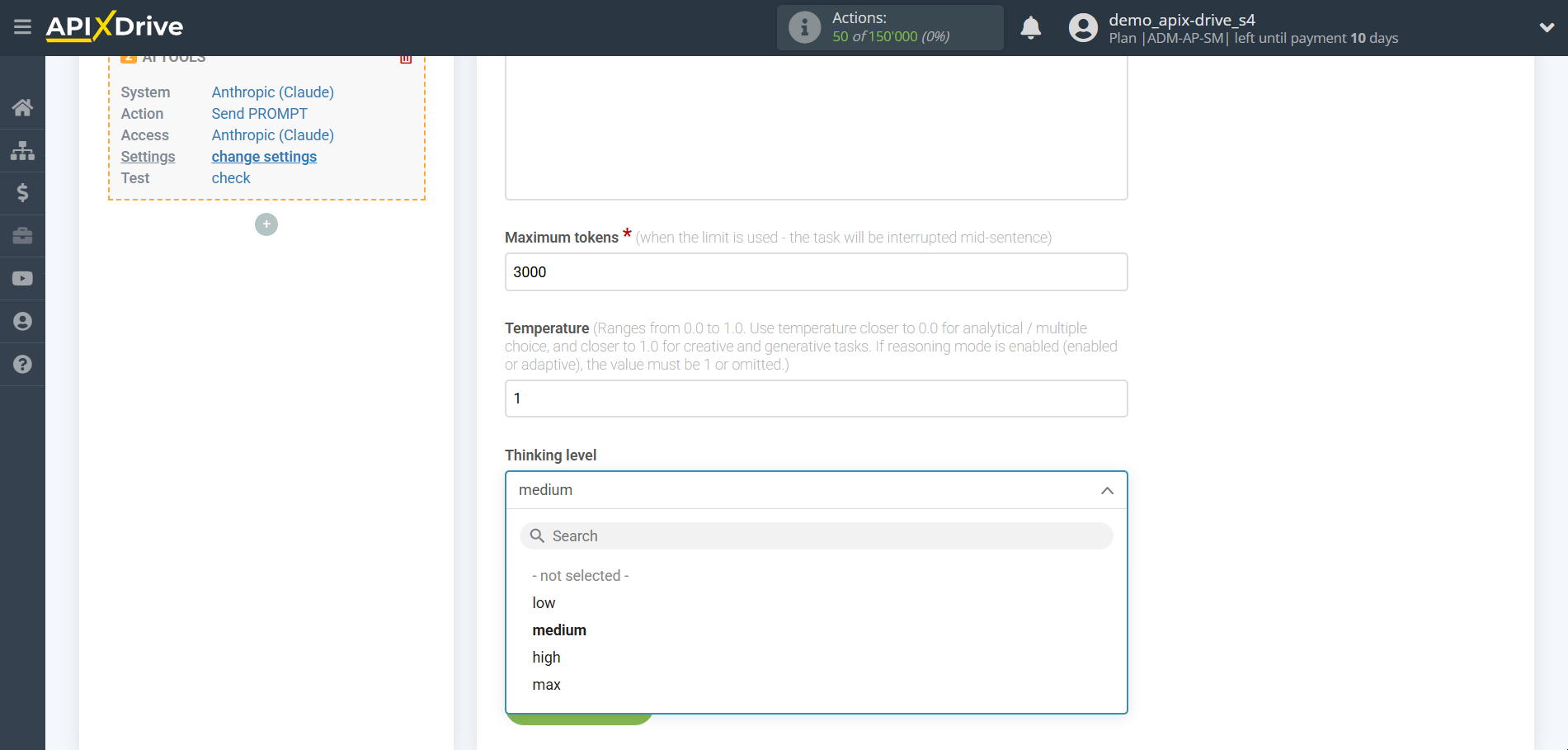

- Thinking Level

* Low — provides a basic level of logic checking. The model spends minimal time on “thinking”, which allows you to slightly improve the quality of the answer, while maintaining a high generation speed. Optimal for clarifying details in texts or simple code corrections.

* Medium — a balanced level that allows the model to work out the structure of the answer in more detail. The AI analyzes several options for solving the problem and chooses the most rational one. Suitable for most work tasks where the absence of logical errors is important.

* High — a mode of deep immersion in the context. The model conducts a thorough analysis of all conditions, reveals hidden connections and minimizes the risk of hallucinations. Recommended for complex programming, writing detailed technical tasks and analyzing voluminous documents.

* Max — the limit level of computational thinking. The model repeatedly checks its own conclusions, simulating the most complex scenarios. This is the best choice for scientific research, finding critical bugs in the code, or solving strategic problems where the cost of a mistake is very high.

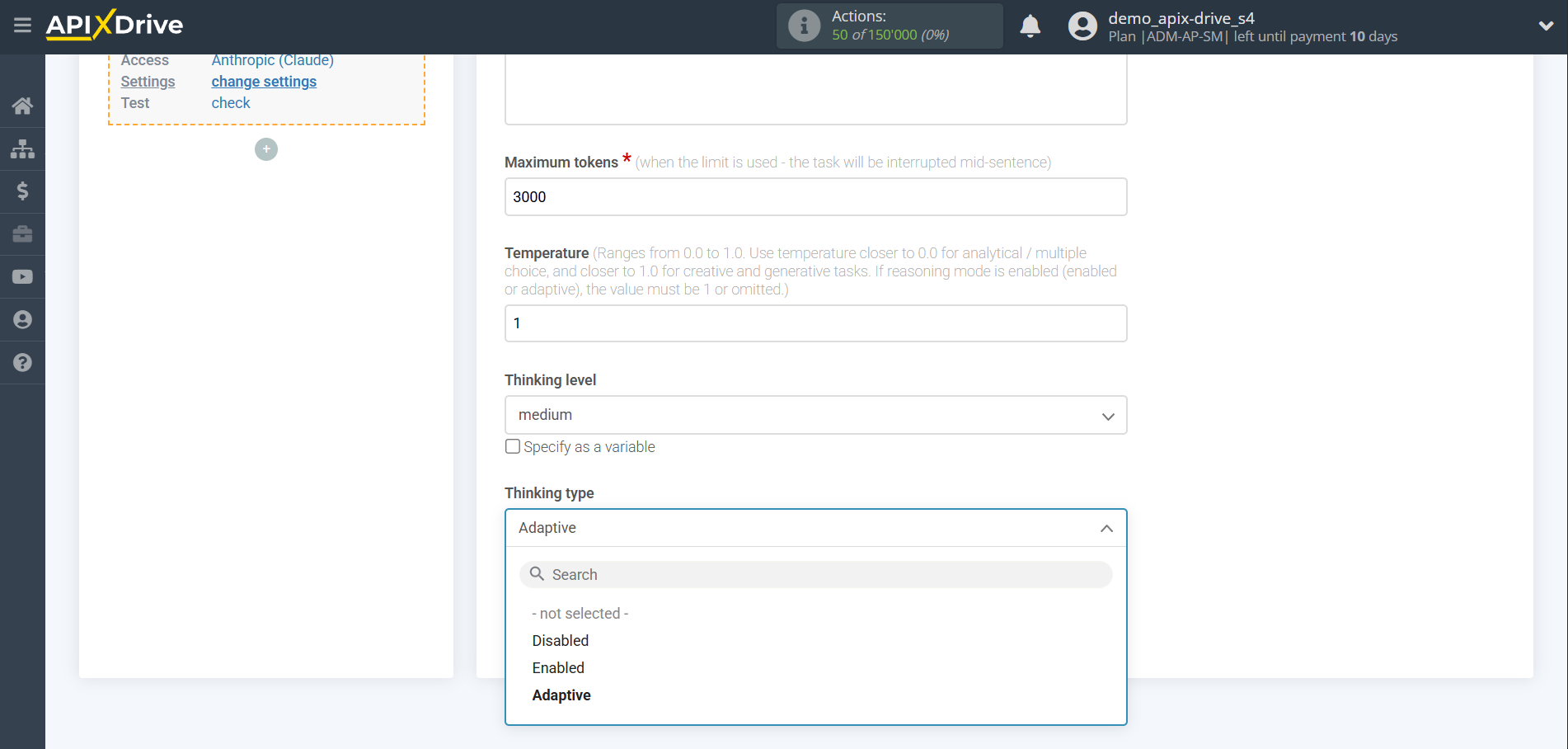

- Thinking Mode

* Off — the standard operating mode of the model, in which it generates an answer instantly, based on direct associations and learned patterns. Suitable for simple creative tasks, translation, or short answers where deep logical analysis is not required.

* On — a mode that activates the model's internal chain of thought. Before providing the final answer, the AI conducts a hidden process of reflection, checking the logic and building a solution step by step. This significantly increases accuracy in complex mathematical, technical, and strategic problems.

* Adaptive — an intelligent mode in which the model independently determines whether the request requires additional reflection. If the task is simple, the AI responds instantly; if the request is complex or contains contradictory conditions, the model automatically turns on the reasoning mode to achieve the best result.
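
At the API level, Anthropic's Messages API exposes extended thinking through a `thinking` object with `"type": "enabled"` and a `budget_tokens` limit (at least 1,024 and smaller than `max_tokens`), and Anthropic's documentation lists extended thinking as incompatible with temperature changes. The sketch below shows how the Off and On modes plus a Thinking Level could translate into a request body. The level-to-budget numbers in `THINKING_BUDGETS` are hypothetical, since the source does not say what ApiX-Drive sends for each level, and Adaptive mode is left out because its mapping is not described either; the model ID is an example.

```python
# Hypothetical token budgets per Thinking Level; ApiX-Drive's real values are not documented.
THINKING_BUDGETS = {"low": 1024, "medium": 4096, "high": 16000, "max": 32000}


def build_request_body(model: str, prompt: str, temperature: float,
                       thinking_mode: str = "off", thinking_level: str = "medium",
                       answer_tokens: int = 1024) -> dict:
    """Build a Messages API request body for Thinking Mode "off" or "on"."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if thinking_mode == "off":
        body["max_tokens"] = answer_tokens
        body["temperature"] = temperature
    elif thinking_mode == "on":
        budget = THINKING_BUDGETS[thinking_level]
        # max_tokens must exceed the thinking budget, so reserve room for the answer.
        body["max_tokens"] = budget + answer_tokens
        body["thinking"] = {"type": "enabled", "budget_tokens": budget}
        # temperature is left out: extended thinking does not support changing it.
    else:
        raise ValueError(f"mode not covered by this sketch: {thinking_mode}")
    return body


off = build_request_body("claude-sonnet-4-5", "Summarize Q3 sales", 0.2)
on = build_request_body("claude-sonnet-4-5", "Summarize Q3 sales", 0.2,
                        thinking_mode="on", thinking_level="high")
print(on["thinking"], on["max_tokens"])  # {'type': 'enabled', 'budget_tokens': 16000} 17024
```

A larger budget lets the model reason longer before answering, at the price of more output tokens and a slower response.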

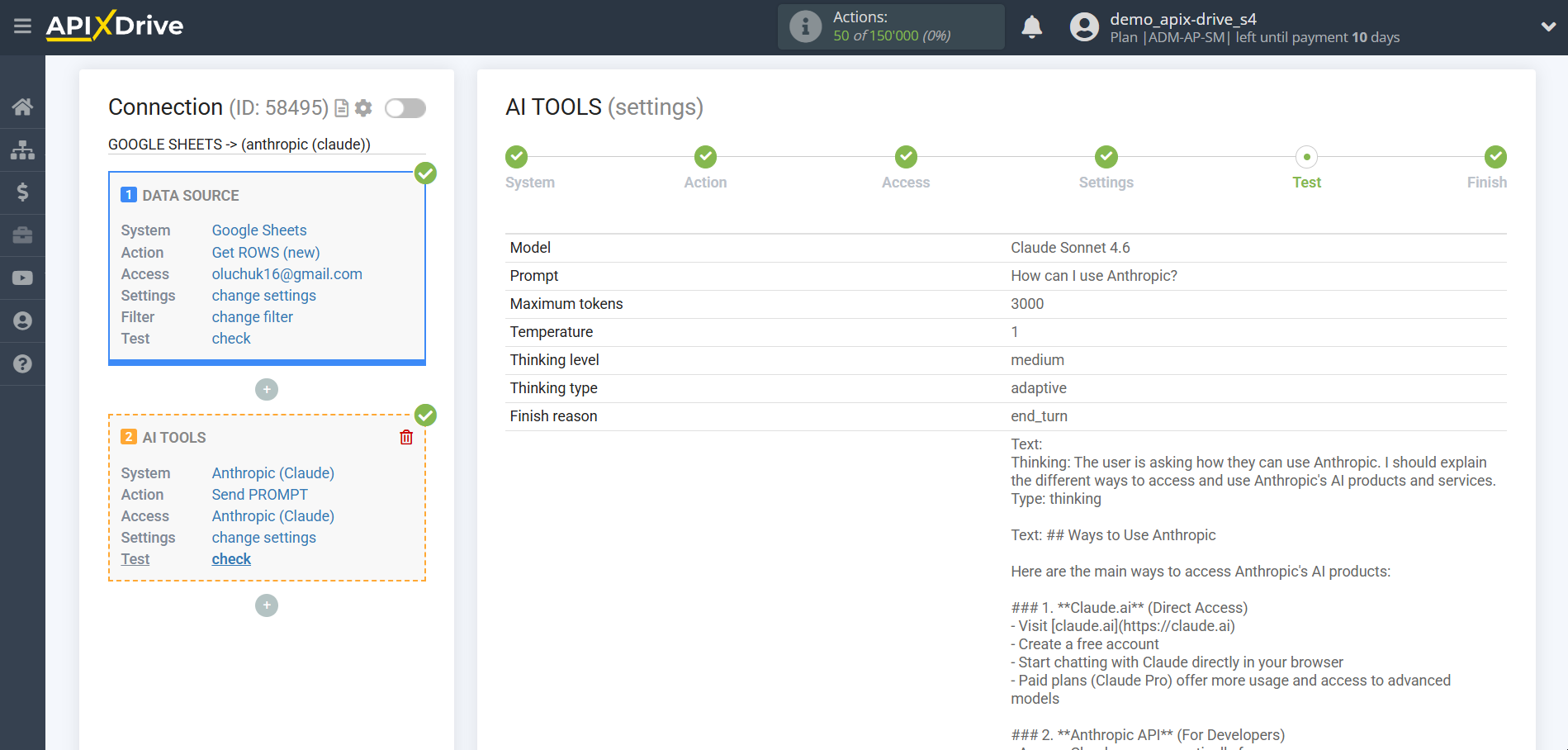

Now you can see the test data for your request. You can pass this data to your destination table.

If test data does not appear automatically, click "Search in Anthropic".

If you are not satisfied with something, click “Edit”, go back a step and change the search field settings.

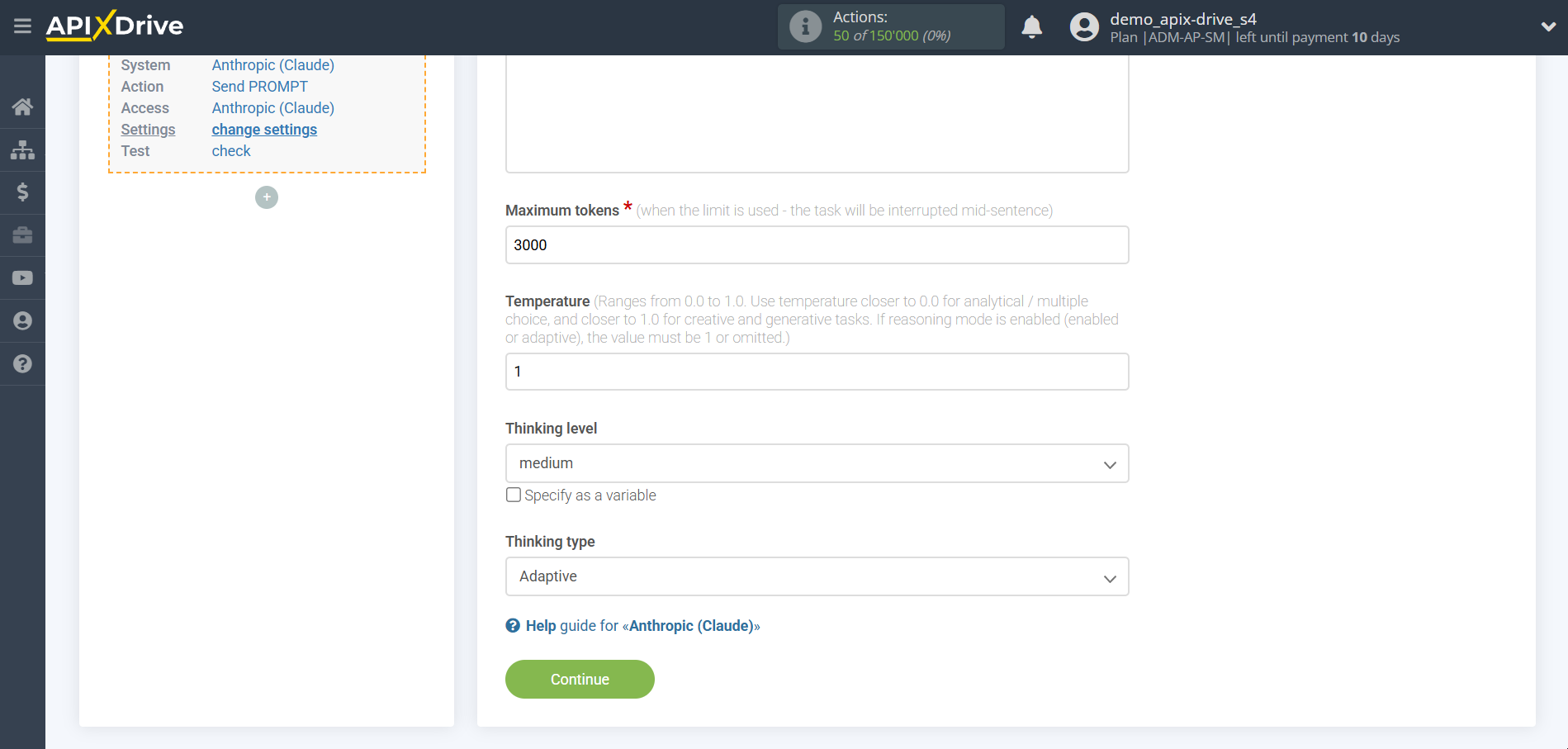

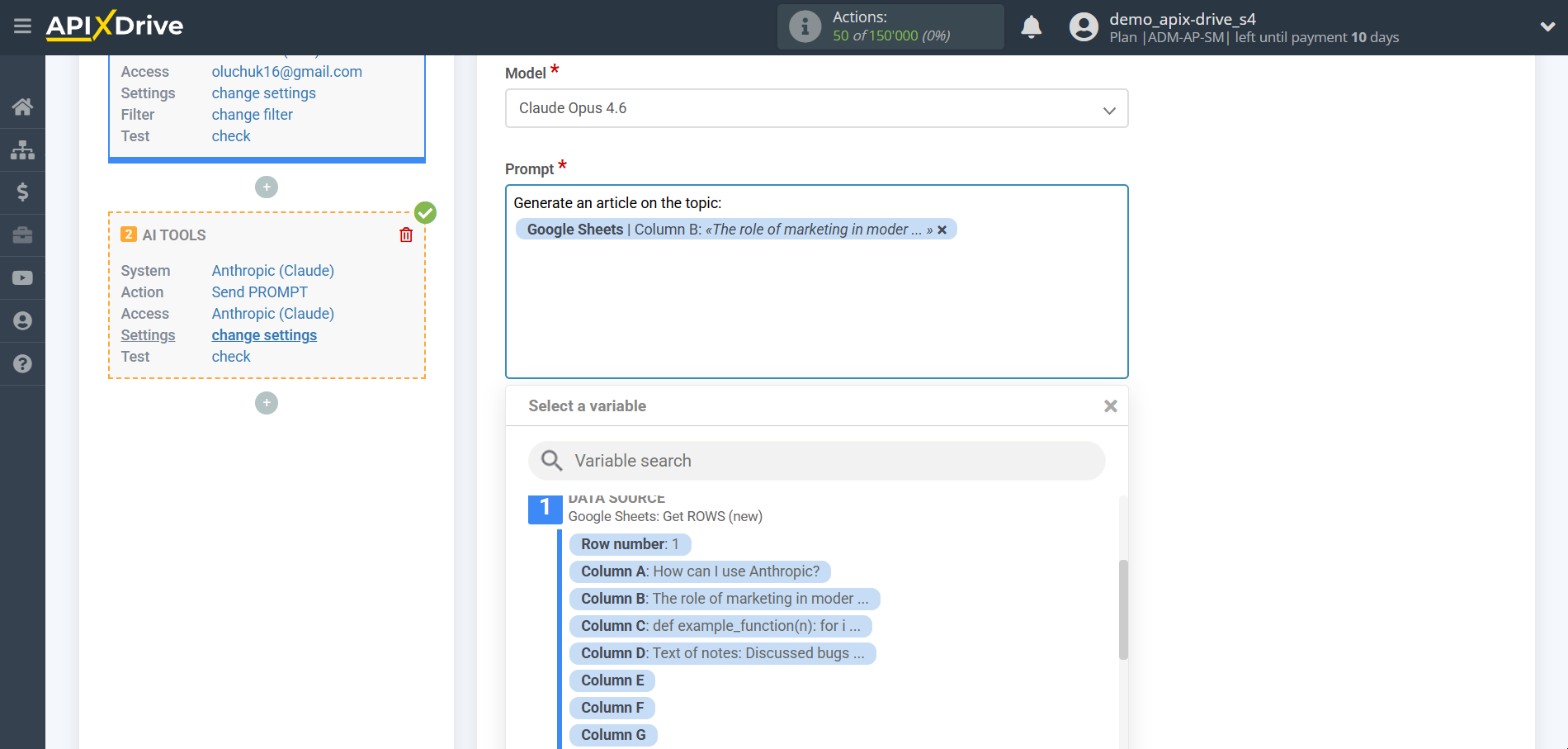

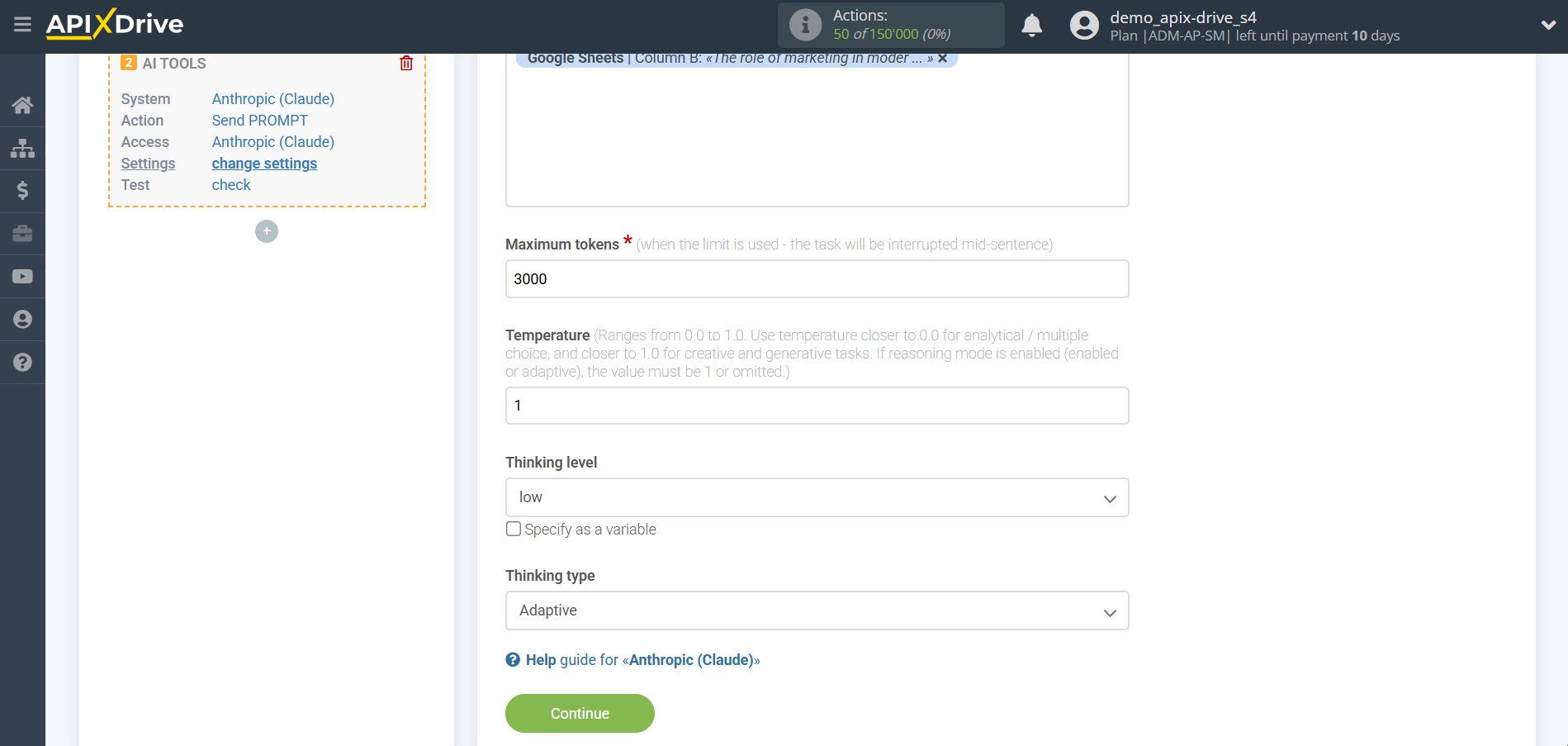

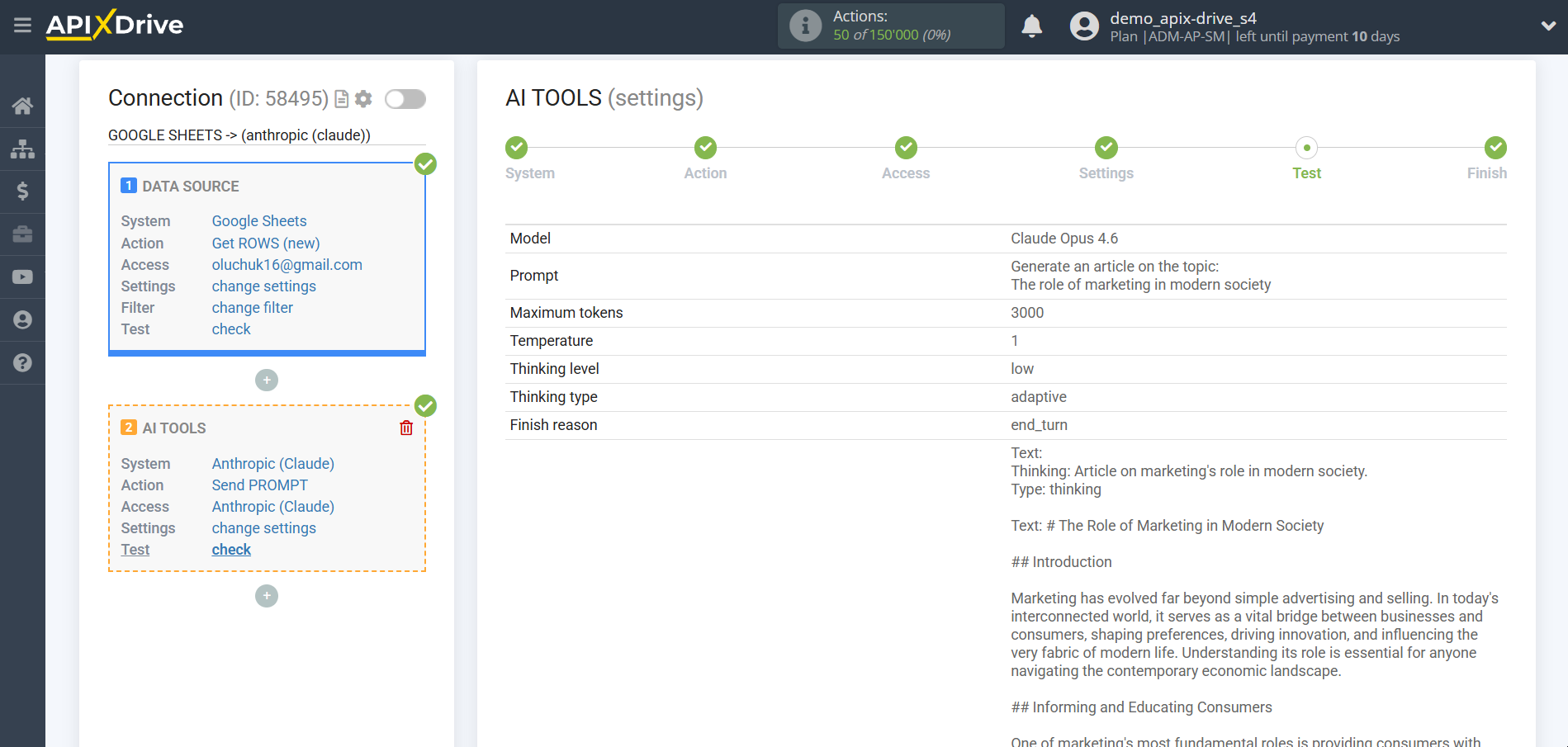

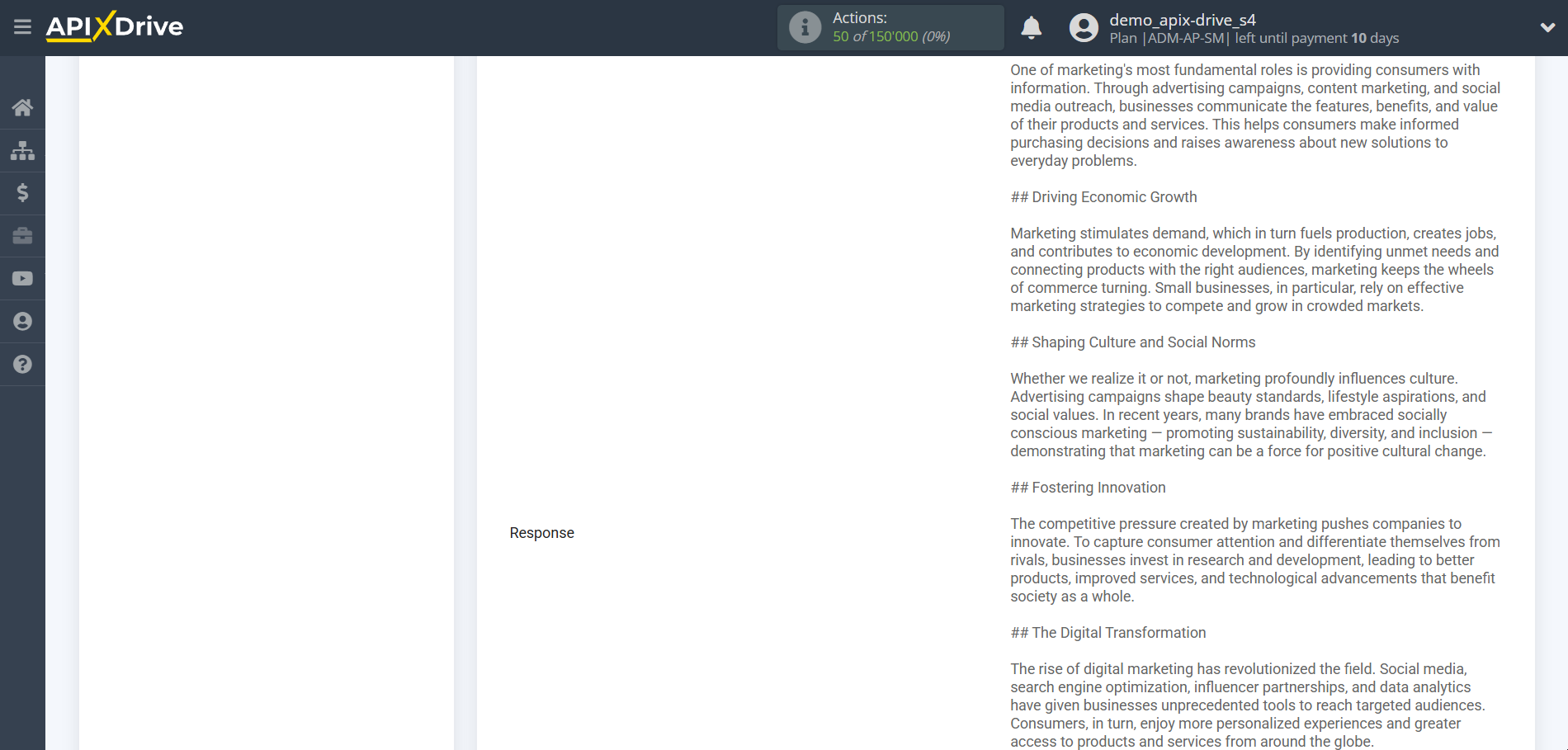

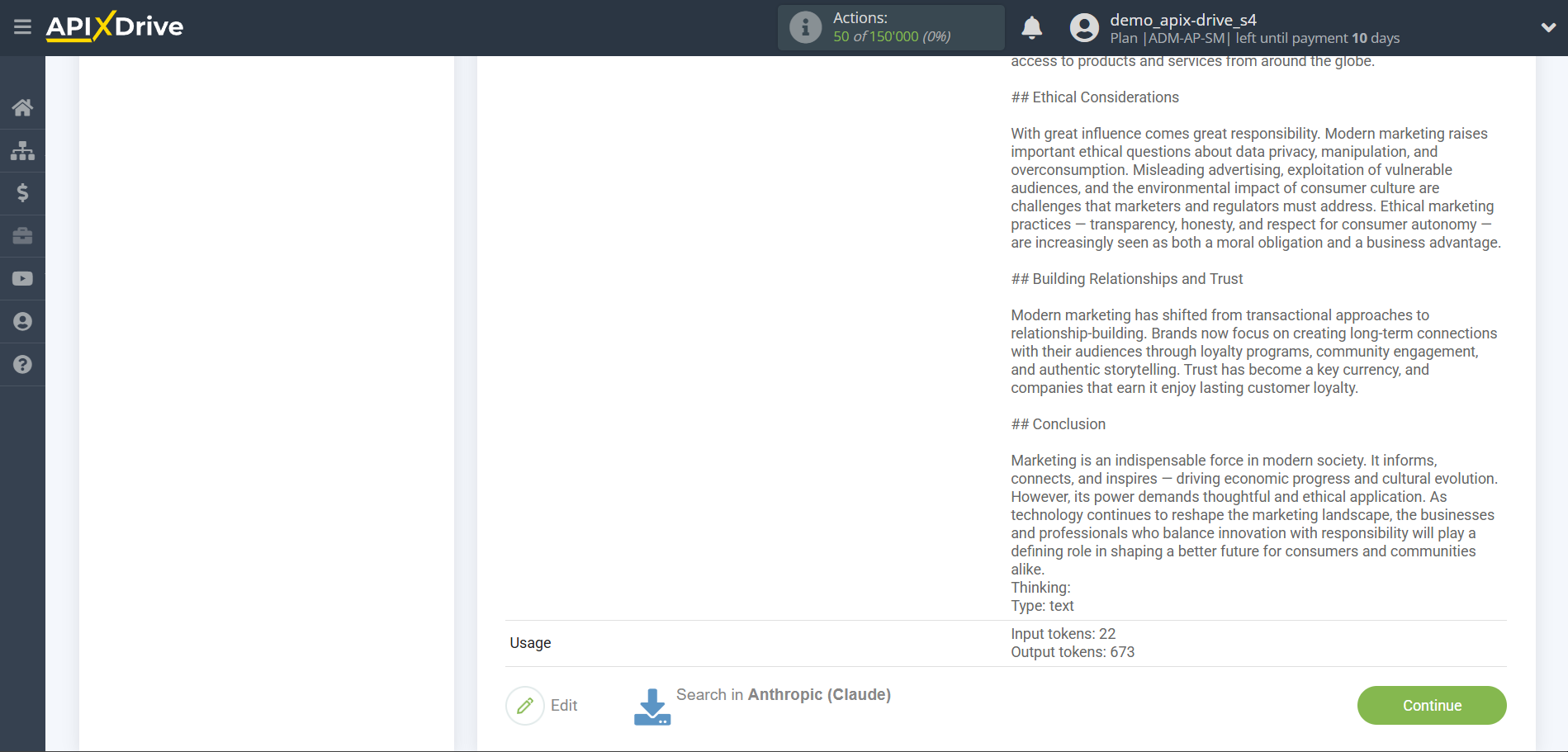

Generation and editing of text according to the request

In this case, select "Claude Opus 4.6".

- Prompt - in this field, select the Data Source variable that contains the request text to be sent to Anthropic; in our case, this is column “B”.

- Presence penalty - encourages the model to use a wider variety of tokens in the generated text. The value is subtracted from the score (logit) of every token that has already appeared in the text at least once, no matter how many times it occurred. A higher Presence penalty makes the model more likely to use tokens that are not yet in the generated text, that is, to introduce new words and topics.

- Temperature - controls how random the output is. Anthropic's API accepts values from 0 to 1. Higher values, such as 0.8, make the output more varied, while lower values, such as 0.2, make it more focused and deterministic.

- Thinking Level

* Low — provides a basic level of logic checking. The model spends minimal time on “thinking”, which allows you to slightly improve the quality of the answer, while maintaining a high generation speed. Optimal for clarifying details in texts or simple code corrections.

* Medium — a balanced level that allows the model to work out the structure of the answer in more detail. The AI analyzes several options for solving the problem and chooses the most rational one. Suitable for most work tasks where the absence of logical errors is important.

* High — a mode of deep immersion in the context. The model conducts a thorough analysis of all conditions, reveals hidden connections and minimizes the risk of hallucinations. Recommended for complex programming, writing detailed technical tasks and analyzing voluminous documents.

* Max — the limit level of computational thinking. The model repeatedly checks its own conclusions, simulating the most complex scenarios. This is the best choice for scientific research, finding critical bugs in the code, or solving strategic problems where the cost of a mistake is very high.

- Thinking Mode

* Off — the standard operating mode of the model, in which it generates an answer instantly, based on direct associations and learned patterns. Suitable for simple creative tasks, translation, or short answers where deep logical analysis is not required.

* On — a mode that activates the model's internal chain of thought. Before providing the final answer, the AI conducts a hidden process of reflection, checking the logic and building a solution step by step. This significantly increases accuracy in complex mathematical, technical, and strategic problems.

* Adaptive — an intelligent mode in which the model independently determines whether the request requires additional reflection. If the task is simple, the AI responds instantly; if the request is complex or contains contradictory conditions, the model automatically turns on the reasoning mode to achieve the best result.

Now you can see the test data for your request. You can pass this data to your destination table.

If test data does not appear automatically, click "Search in Anthropic".

If you are not satisfied with something, click “Edit”, go back a step and change the search field settings.

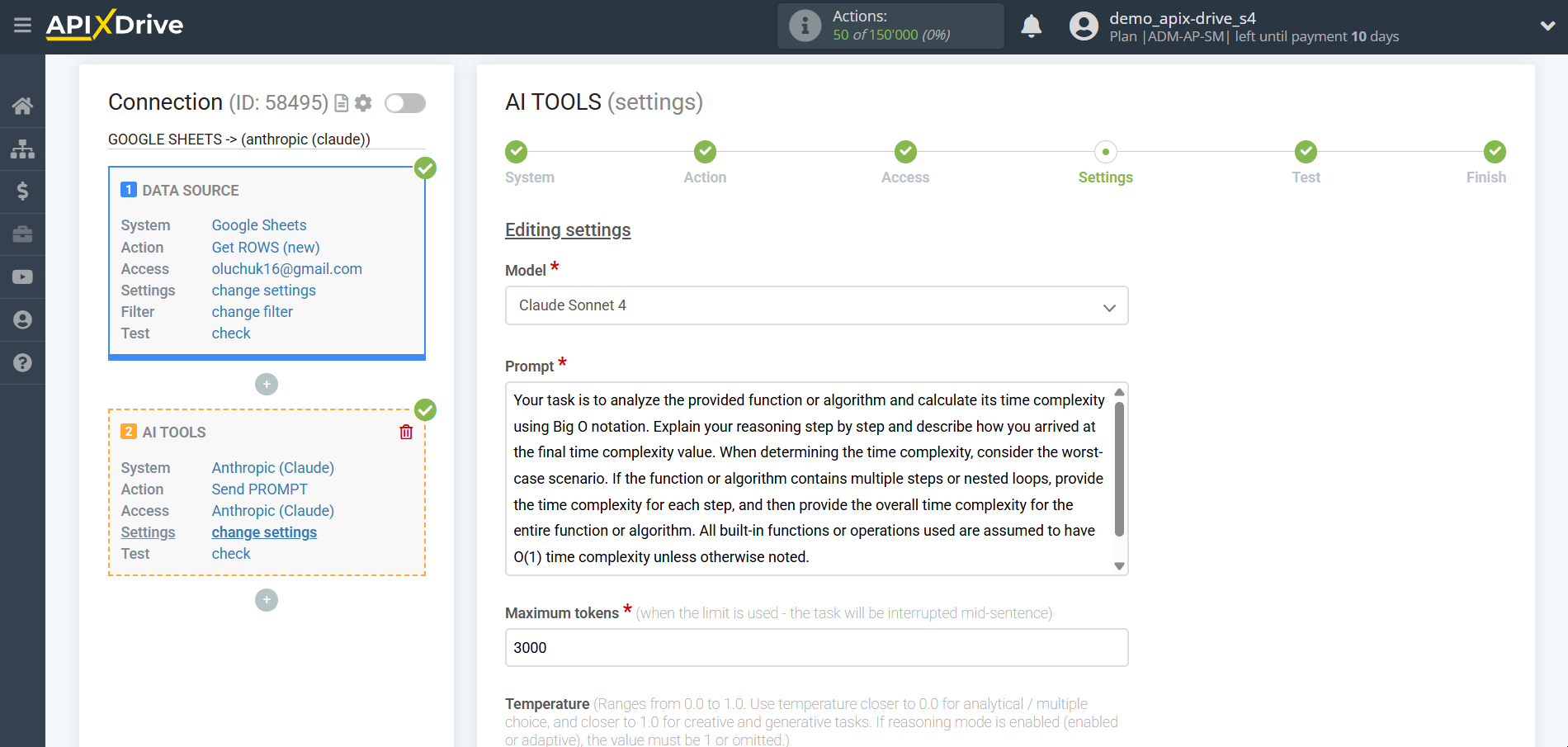

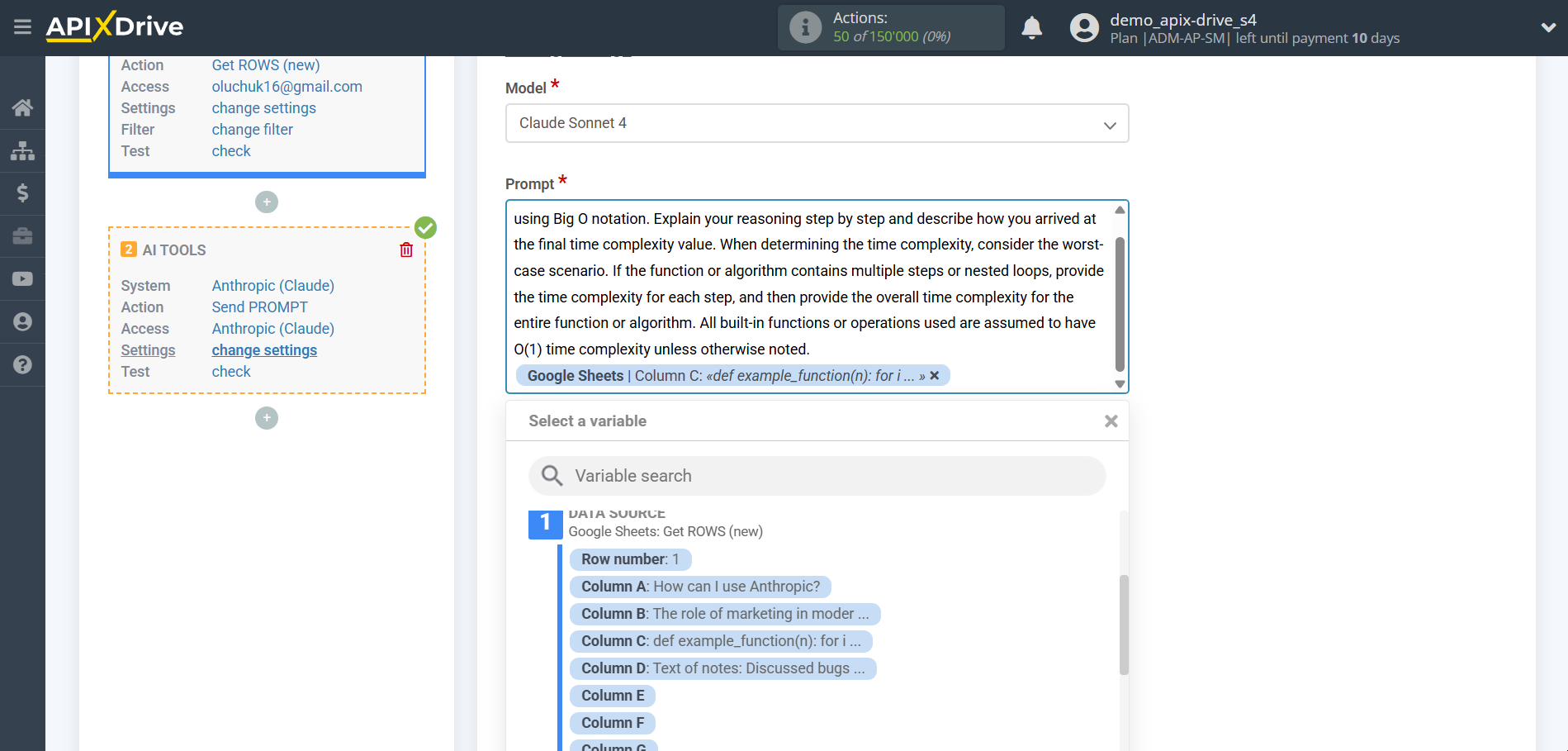

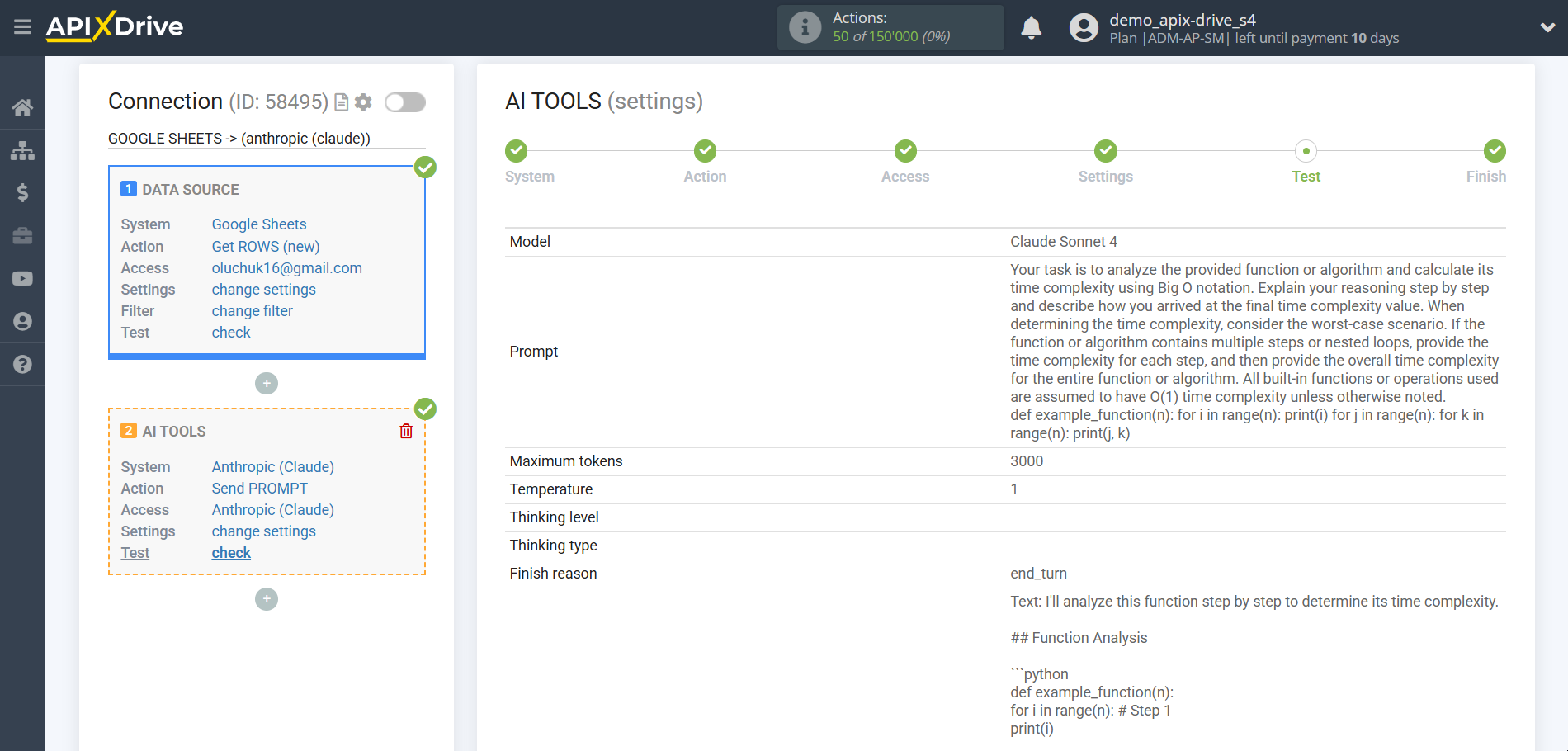

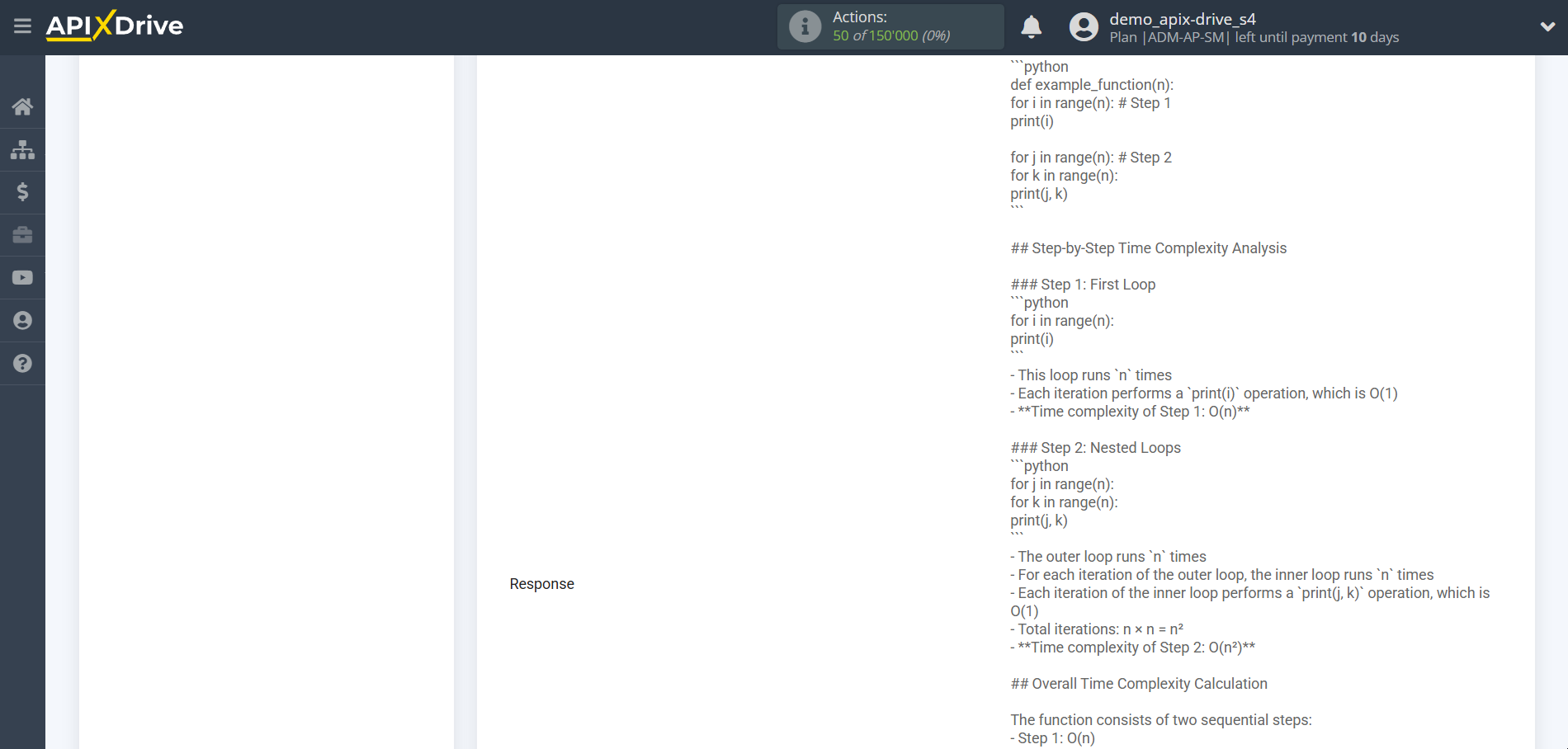

Mathematical calculations and modelling

In this case, select "Claude Sonnet 4".

- Prompt - in this field, select the Data Source variable that contains the request text to be sent to Anthropic; in our case, this is column “C”.

- Presence penalty - encourages the model to use a wider variety of tokens in the generated text. The value is subtracted from the score (logit) of every token that has already appeared in the text at least once, no matter how many times it occurred. A higher Presence penalty makes the model more likely to use tokens that are not yet in the generated text, that is, to introduce new words and topics.

- Temperature - controls how random the output is. Anthropic's API accepts values from 0 to 1. Higher values, such as 0.8, make the output more varied, while lower values, such as 0.2, make it more focused and deterministic.

Now you can see the test data for your request. You can pass this data to your destination table.

If test data does not appear automatically, click "Search in Anthropic".

If you are not satisfied with something, click “Edit”, go back a step and change the search field settings.

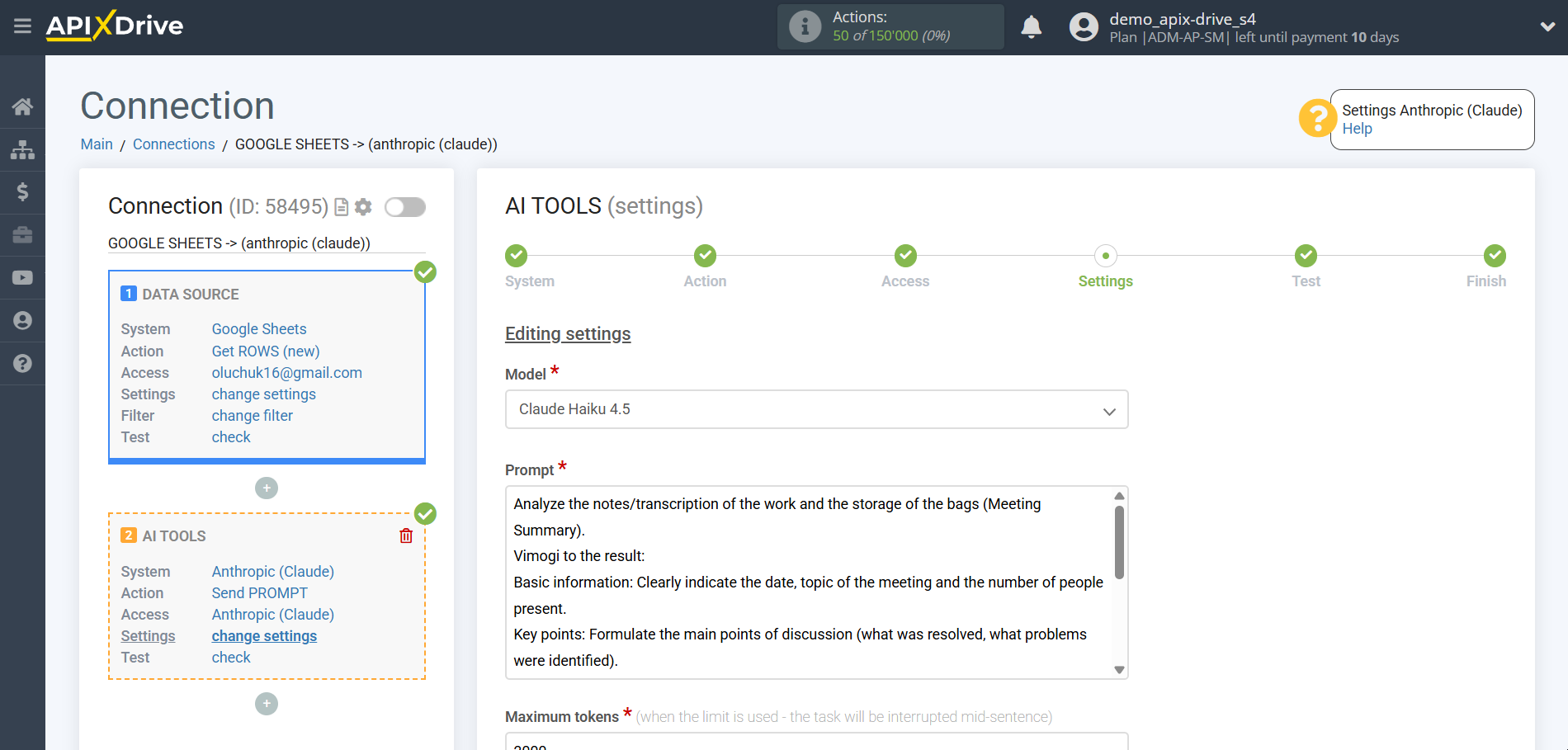

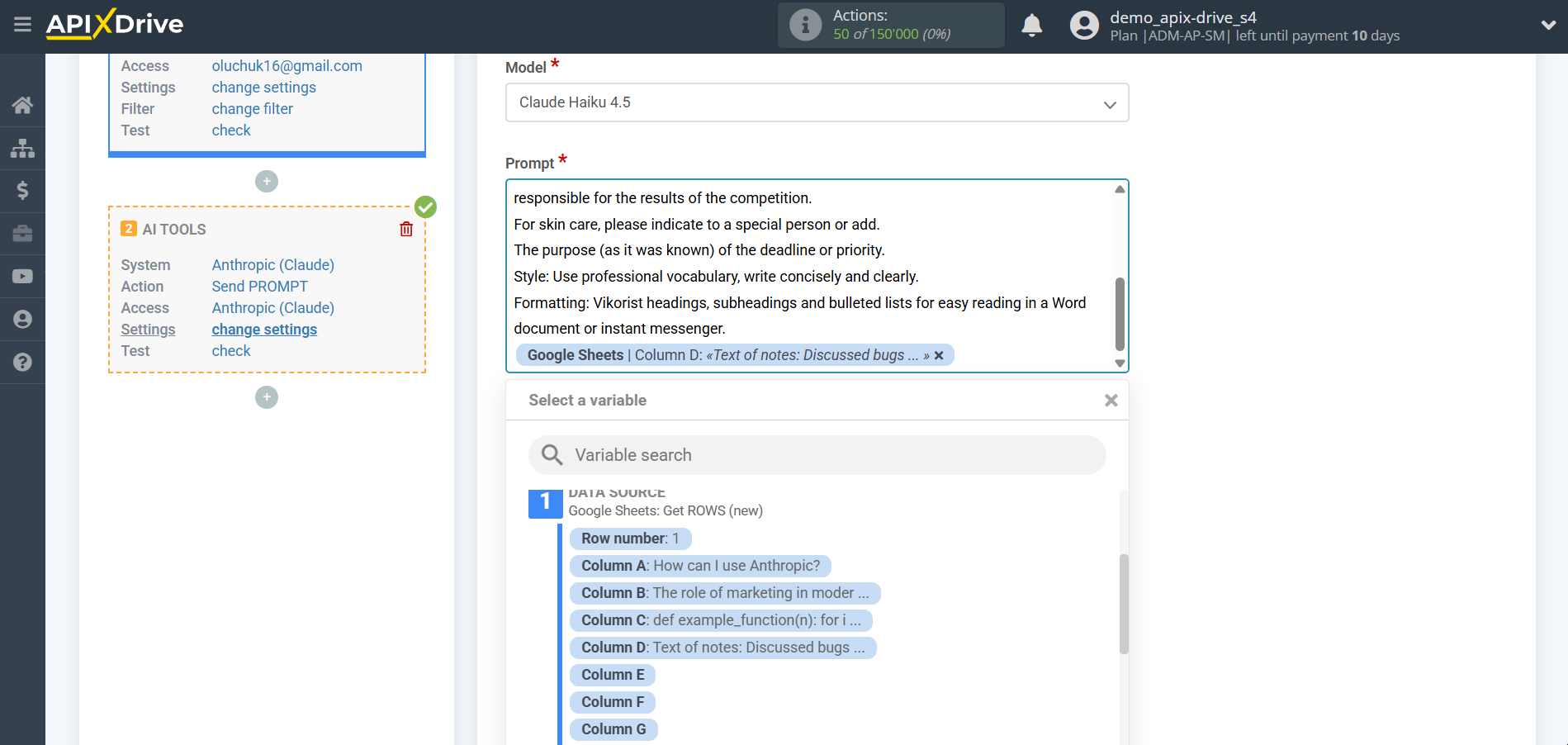

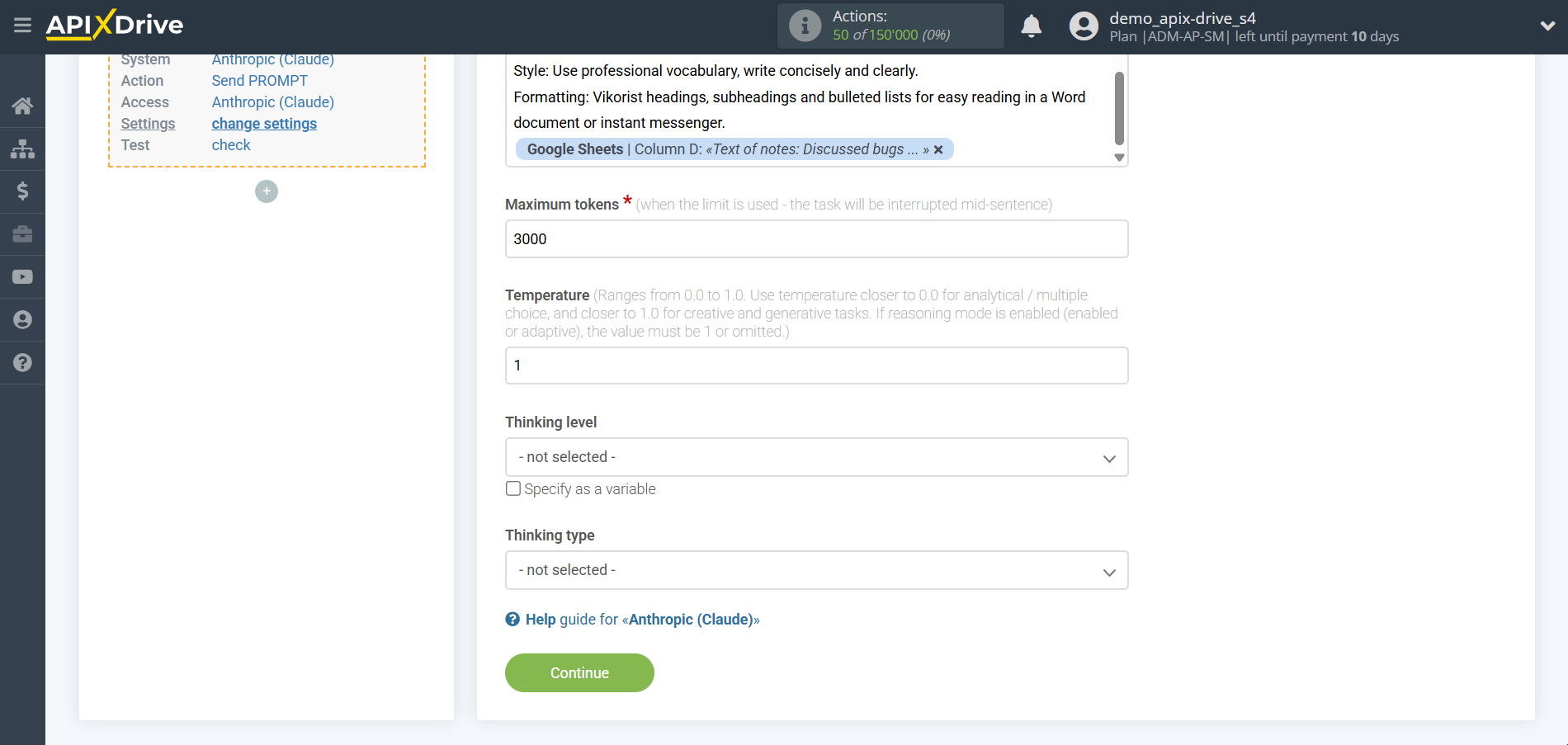

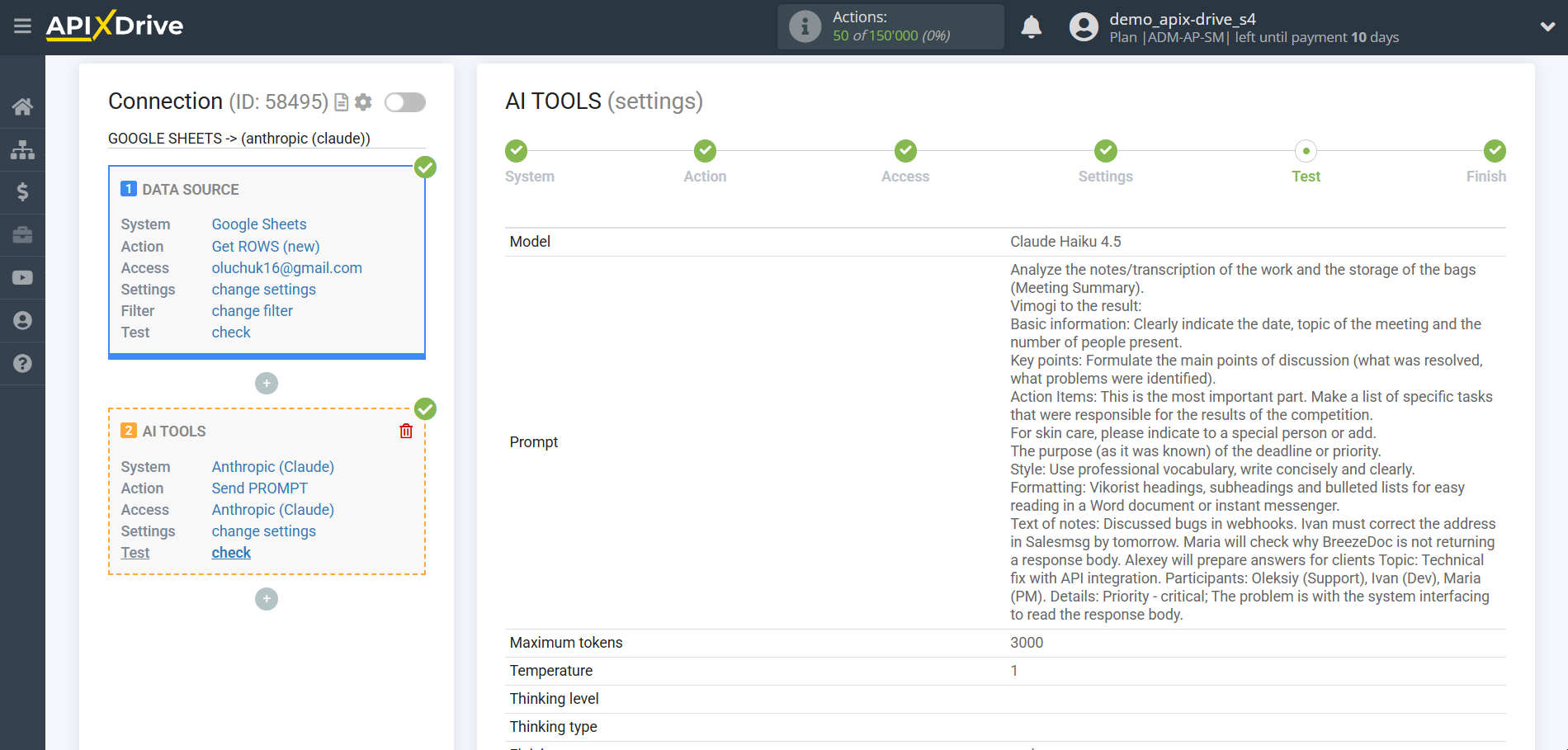

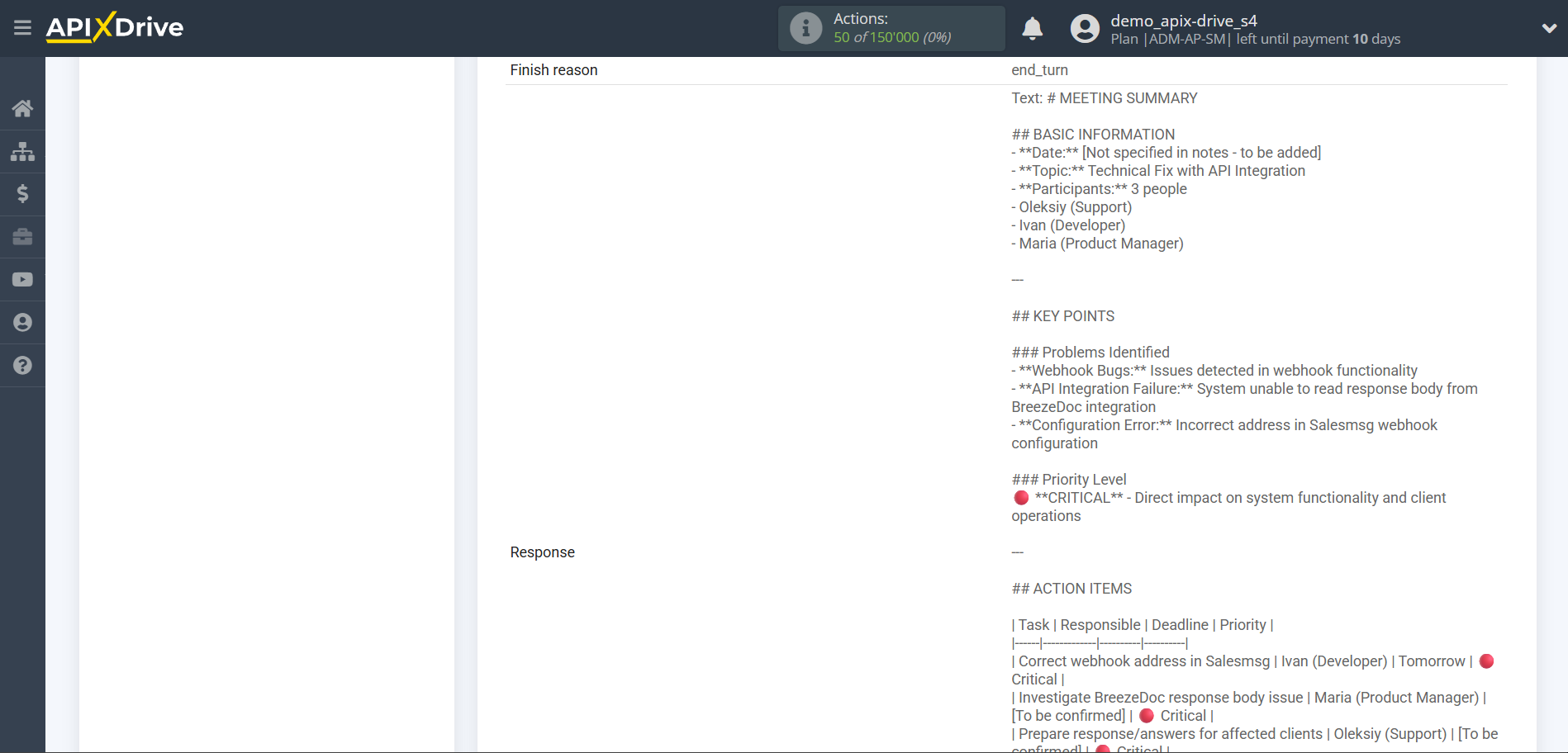

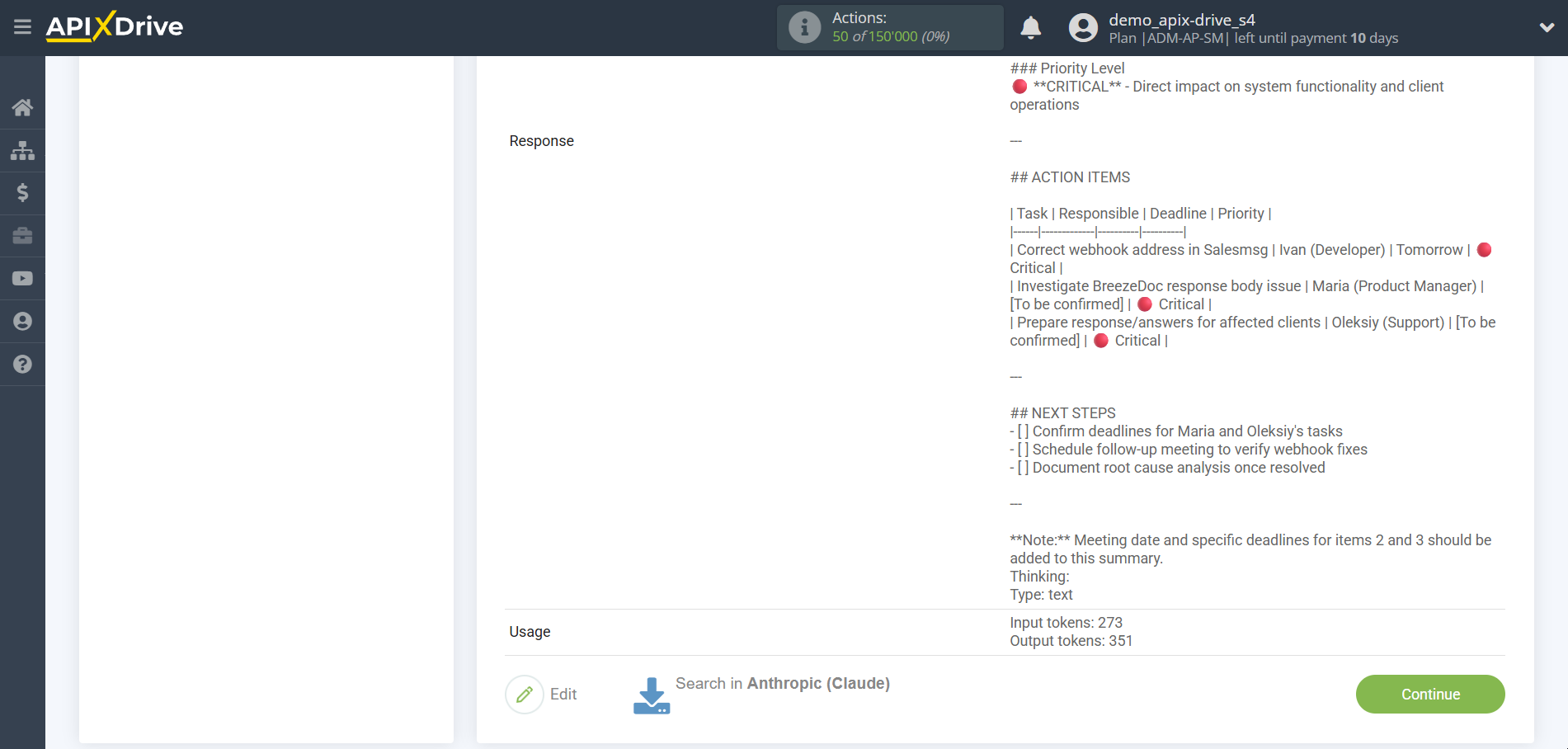

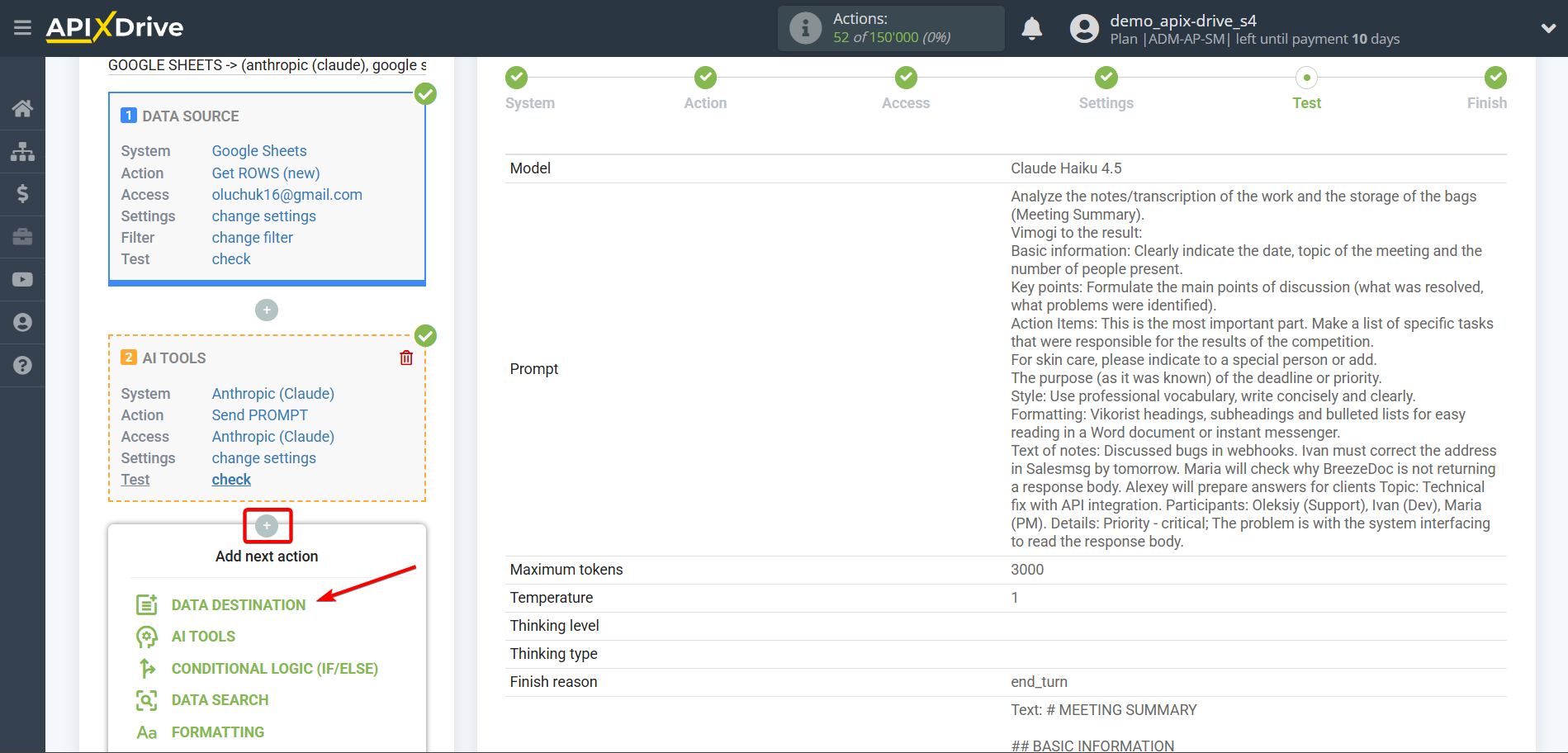

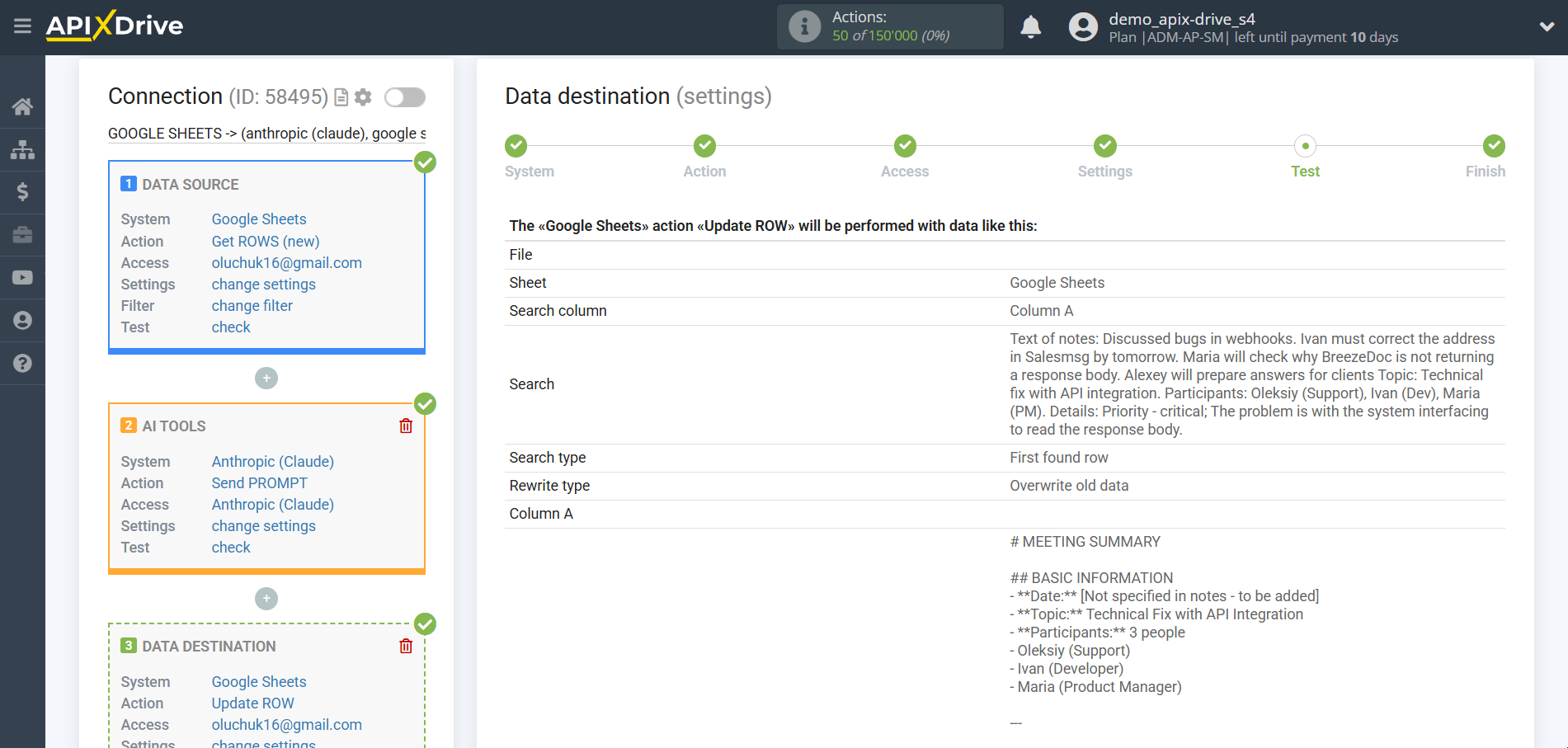

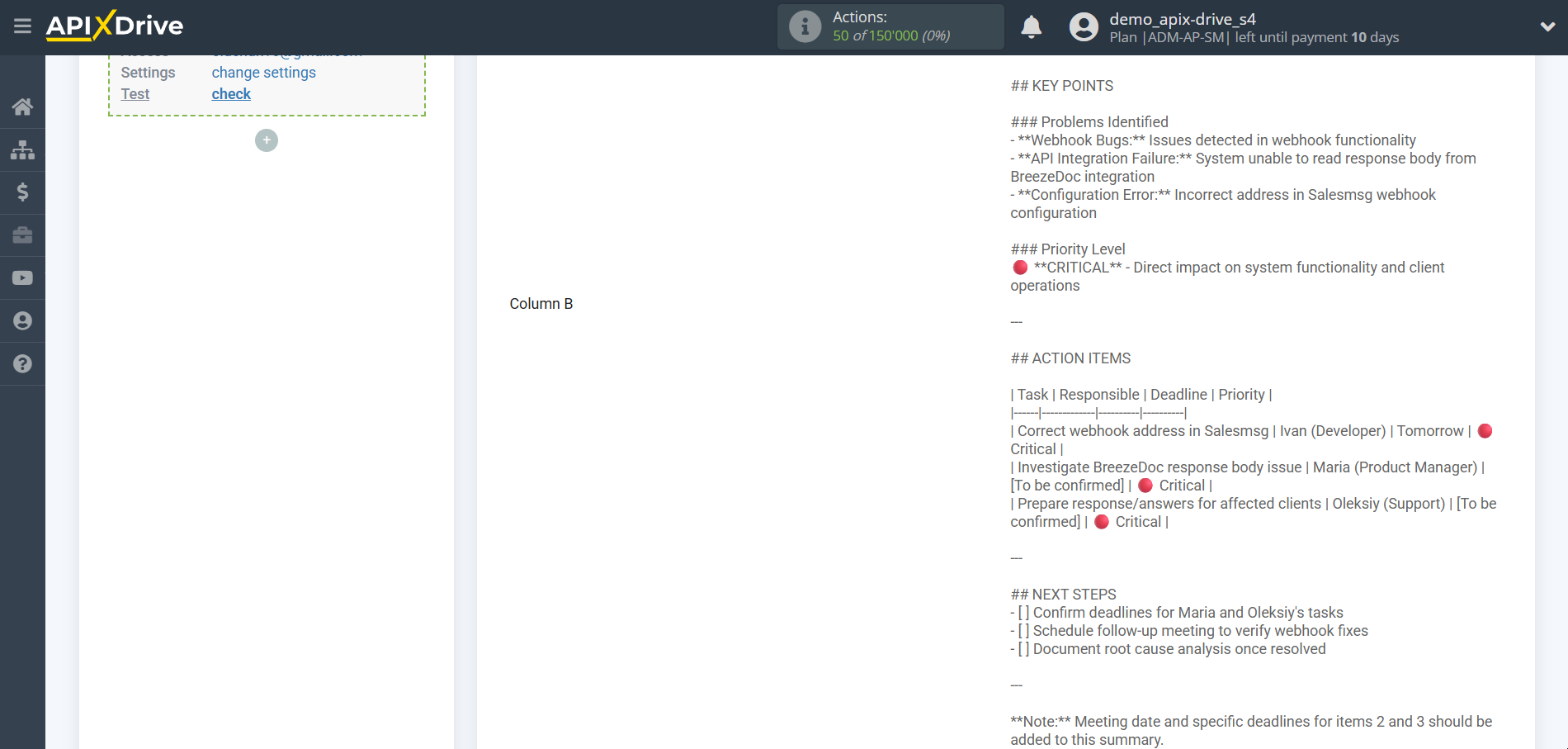

Data analysis and structuring

In this case, select "Claude Haiku 4.5".

- Prompt - in this field, select the Data Source variable that contains the request text to be sent to Anthropic; in our case, this is column “D”.

- Presence penalty - encourages the model to use a wider variety of tokens in the generated text. The value is subtracted from the score (logit) of every token that has already appeared in the text at least once, no matter how many times it occurred. A higher Presence penalty makes the model more likely to use tokens that are not yet in the generated text, that is, to introduce new words and topics.

- Temperature - controls how random the output is. Anthropic's API accepts values from 0 to 1. Higher values, such as 0.8, make the output more varied, while lower values, such as 0.2, make it more focused and deterministic.

Now you can see the test data for your request. You can pass this data to your destination table.

If test data does not appear automatically, click "Search in Anthropic".

If you are not satisfied with something, click “Edit”, go back a step and change the search field settings.

This completes the Anthropic block setup!

Now we can start setting up Google Sheets as the Data Destination system.

To do this, click "Add Data Destination".

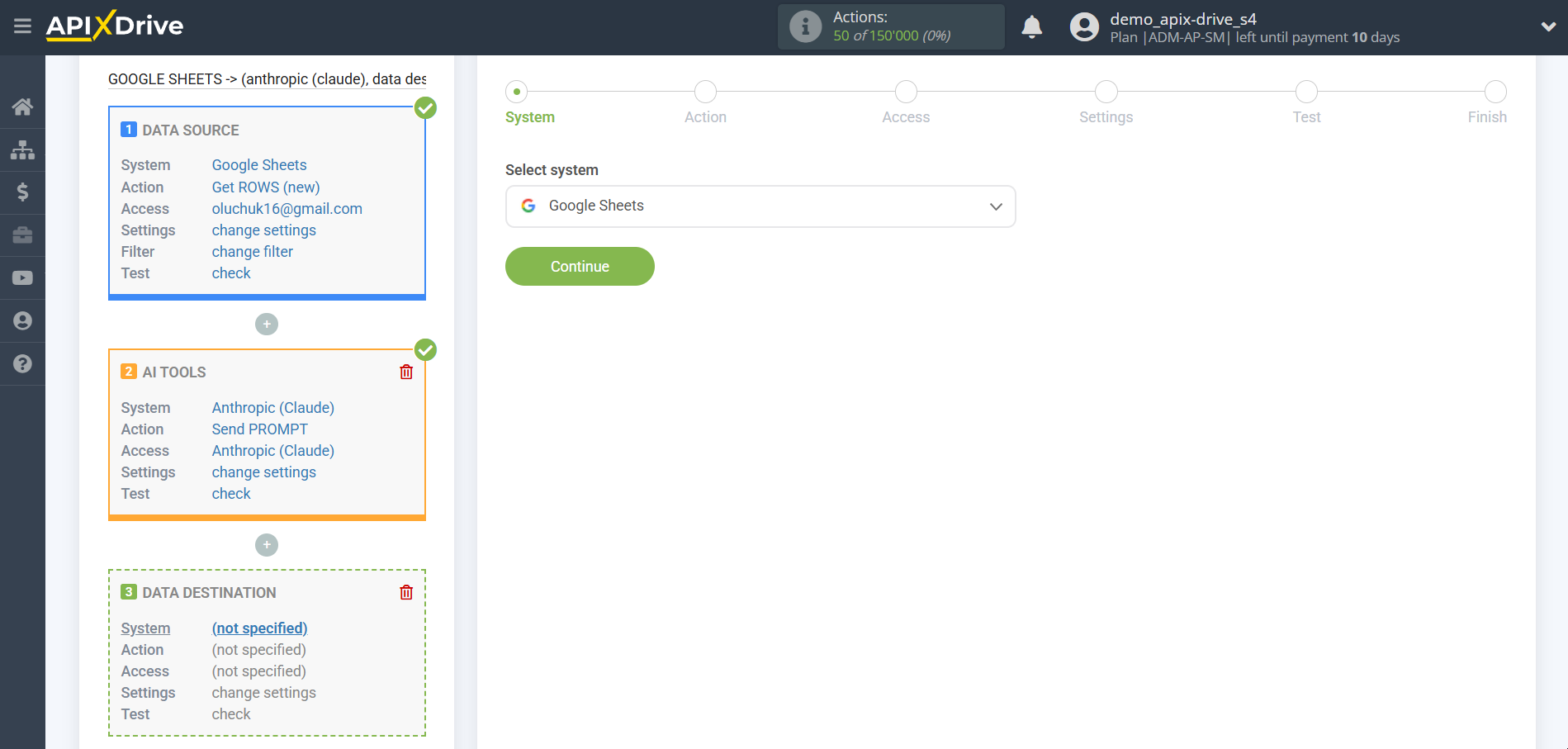

Setting up Data DESTINATION: Google Sheets

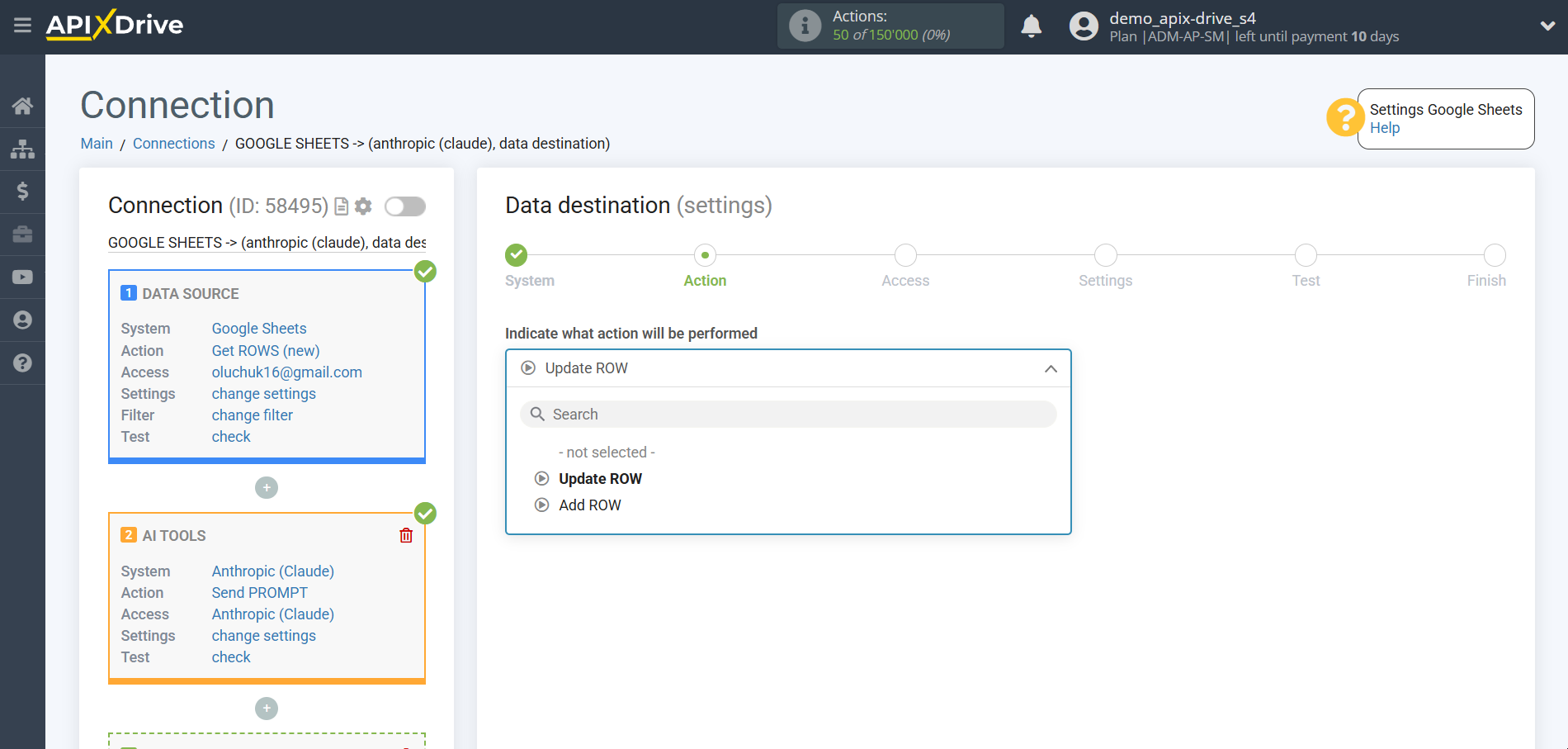

Select the Data Destination system. In this case, you must specify Google Sheets.

Next, you need to specify the action "Update ROW".

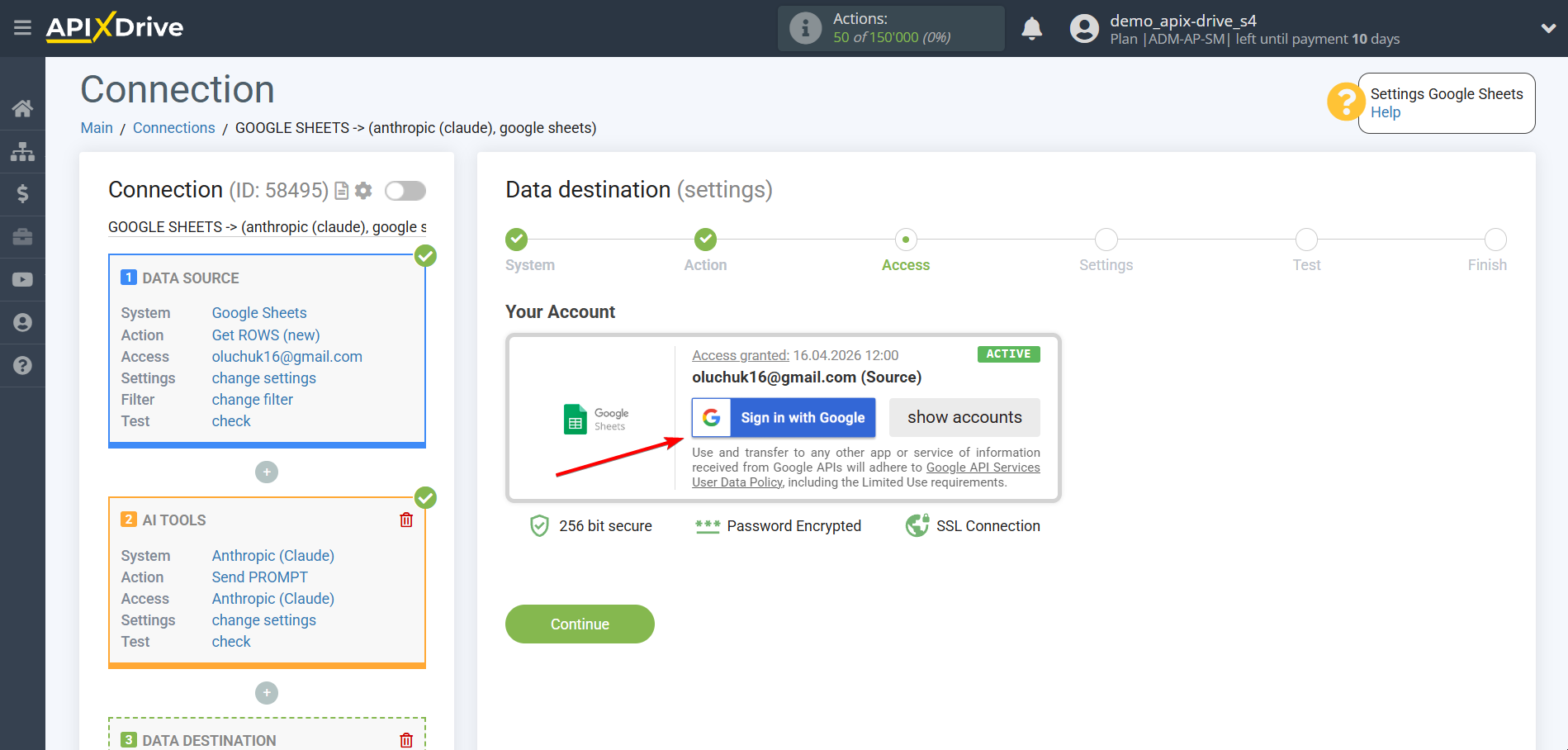

The next step is to select the Google Sheets account to which the result of the Anthropic request will be transferred. If it is the same account you used for the Data Source, select it.

If you need to connect another login to the system, click “Connect account” and repeat the same steps described when connecting Google Sheets as a Data Source.

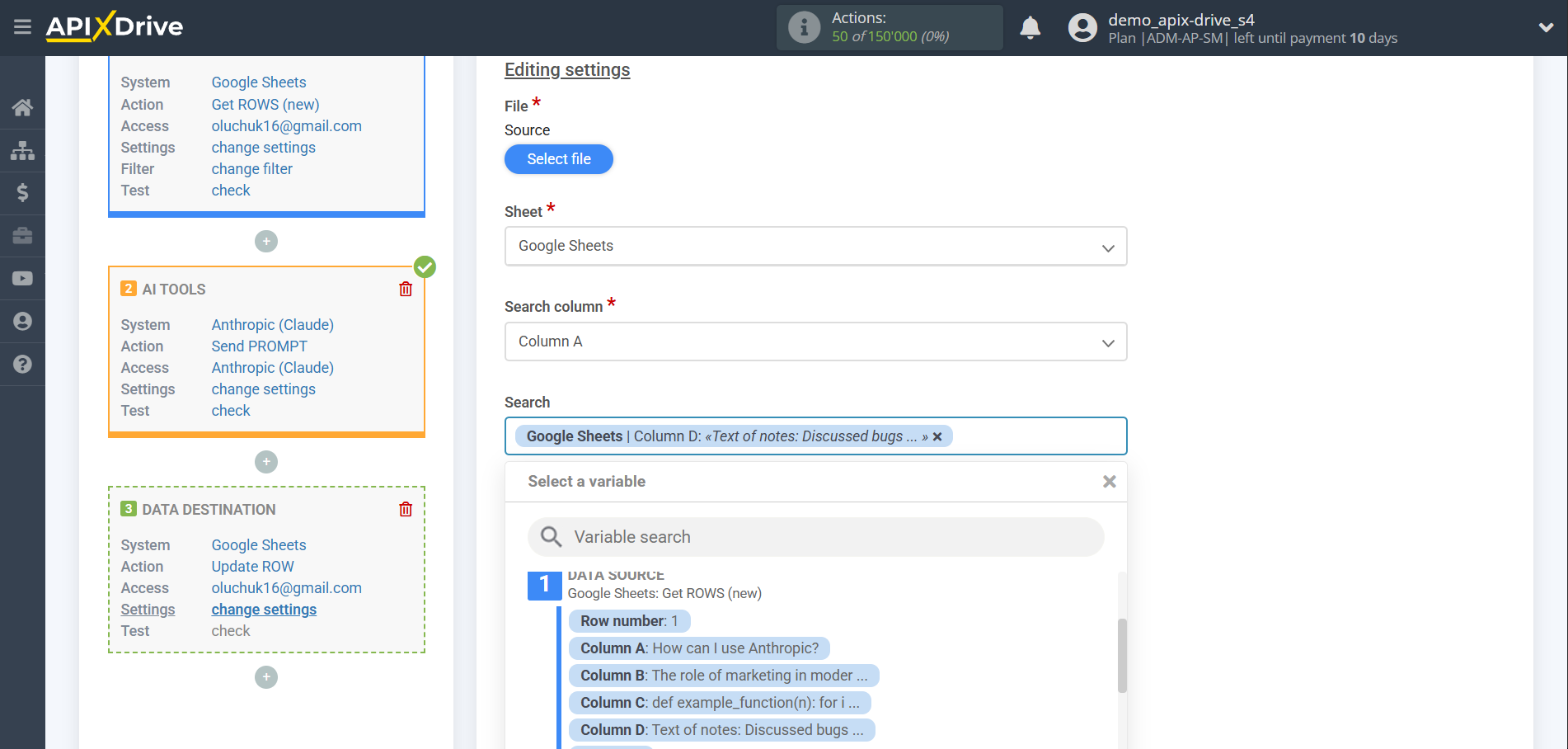

Now you need to select the File (Table) and Sheet in which the Anthropic results will be written.

In the "Search column" field, select the column in which the system will look for the search value, that is, where to look for data in the table.

Next, in the "Search" field, select a variable from the drop-down list or enter a value manually; the system looks for this value in the search column to find the row to update. In our case, we select column "A", which contains the request text. A row is updated only if its value in the search column matches the search value.

Also, you need to specify the Search Type, in case several rows with the same query are found:

"Take the first found row" - searching and updating data will occur in the first found row that satisfies the search conditions.

"Take the last found row" - searching and updating data will occur in the last found row that satisfies the search conditions.

"Take all found rows" - search and update of data will be performed on all found rows that satisfy the search conditions.
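
The search logic described above can be sketched in a few lines of Python. The sheet is modelled as a list of dictionaries keyed by column letter, and `find_rows` is a hypothetical helper, not ApiX-Drive code; it assumes an exact, case-sensitive match (ApiX-Drive may normalize values differently) and returns the indexes of the rows selected by each Search Type.

```python
def find_rows(rows: list[dict[str, str]], search_column: str, search_value: str,
              search_type: str = "first") -> list[int]:
    """Return indexes of matching rows for Search Type "first", "last" or "all"."""
    # Exact match on the search column is an assumption of this sketch.
    matches = [i for i, row in enumerate(rows)
               if row.get(search_column) == search_value]
    if not matches:
        return []                      # nothing matches, so no row is updated
    if search_type == "first":
        return matches[:1]
    if search_type == "last":
        return matches[-1:]
    if search_type == "all":
        return matches
    raise ValueError(f"unknown search type: {search_type}")


sheet = [
    {"A": "Write a slogan", "B": ""},
    {"A": "Translate to French", "B": ""},
    {"A": "Write a slogan", "B": ""},
]
print(find_rows(sheet, "A", "Write a slogan", "first"))  # [0]
print(find_rows(sheet, "A", "Write a slogan", "last"))   # [2]
print(find_rows(sheet, "A", "Write a slogan", "all"))    # [0, 2]
```

If your sheet can contain duplicate requests, "Take all found rows" writes the same answer into every copy, while the other two options leave the remaining duplicates untouched.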

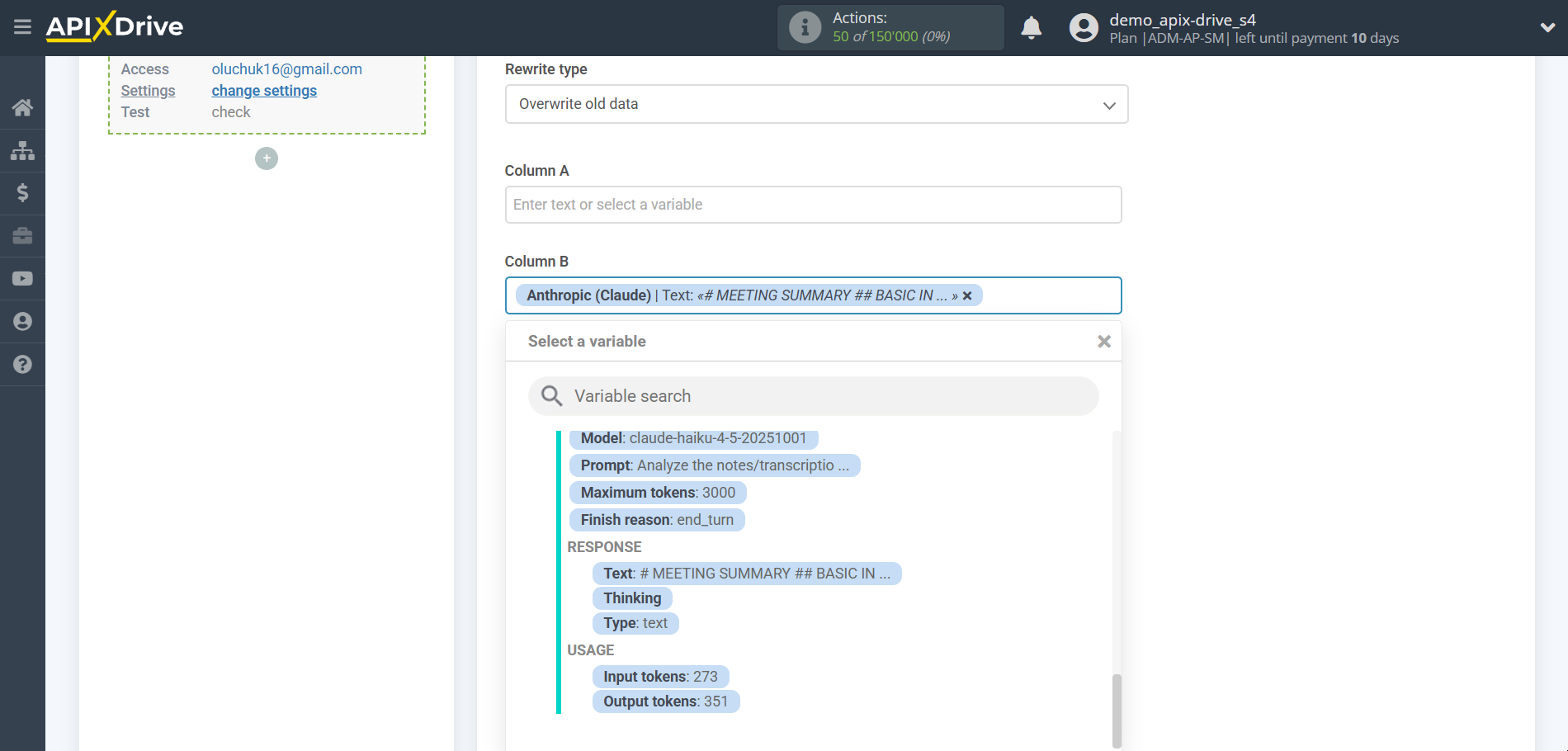

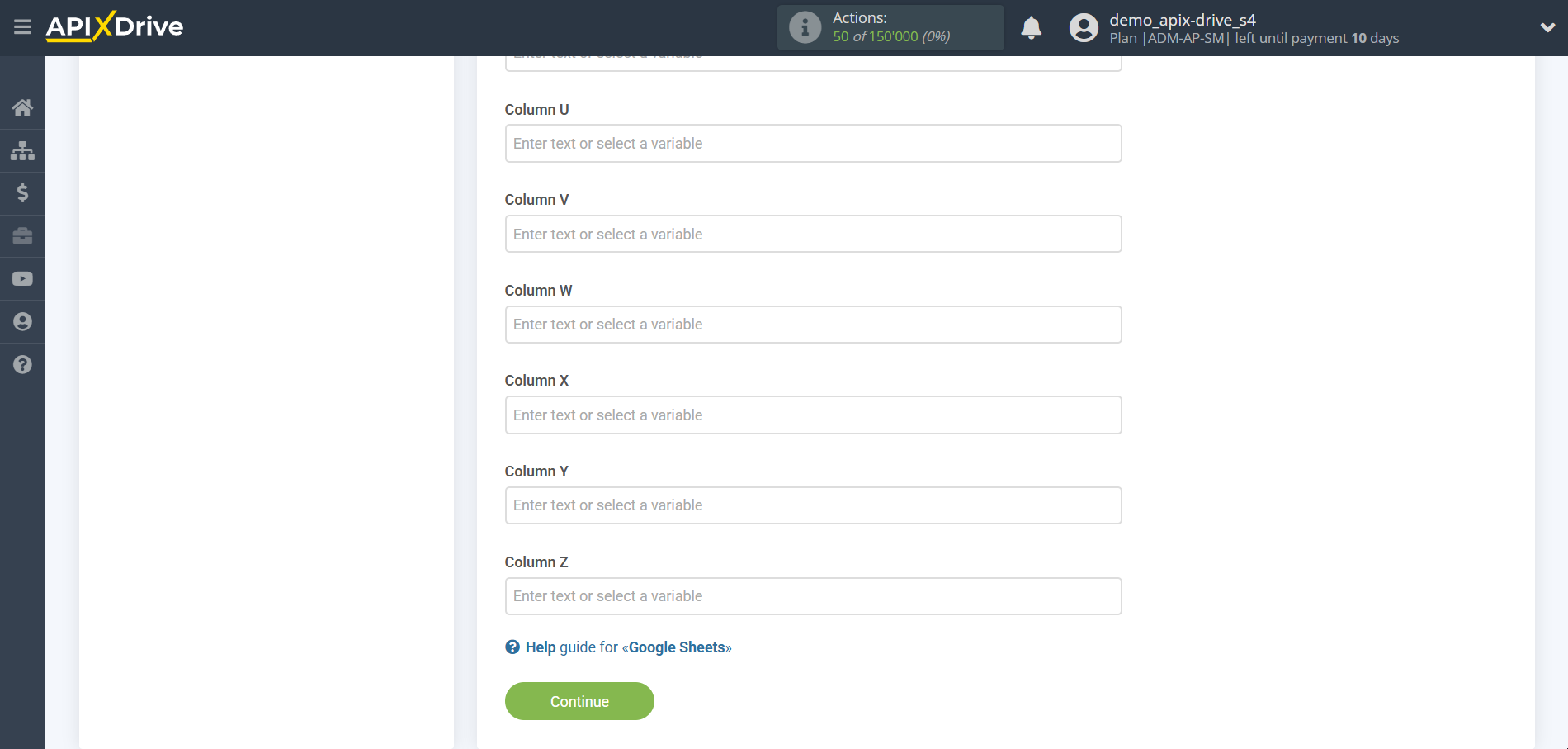

Now assign the variable with the query result, taken from the Anthropic block, to an empty column. This column will then be filled with the responses to your requests.

After setting, click "Continue".

Thus, the Anthropic block takes the Data Source field in which you wrote the request text, sends the request to the Anthropic server and passes the result to the Data Destination field, for example, column “B”.
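
The data flow of the whole connection can be sketched as follows. The Anthropic call is replaced by a stand-in function `fake_anthropic` so the example runs offline; in a real setup that step is the request to Anthropic. `run_connection` is a hypothetical name, the column letters follow this guide's example (request text in A, result in B), and the destination uses "Take the first found row".

```python
from typing import Callable


def run_connection(new_rows: list[dict[str, str]], sheet: list[dict[str, str]],
                   ask: Callable[[str], str], prompt_column: str = "A",
                   result_column: str = "B") -> int:
    """Send each new row's prompt to the model and write the answer into the sheet.

    Returns the number of sheet rows that were updated.
    """
    updated = 0
    for source_row in new_rows:                      # Data Source: "Get ROWS (new)"
        prompt = source_row.get(prompt_column, "")
        if not prompt:
            continue                                 # nothing to ask
        answer = ask(prompt)                         # AI TOOLS: Anthropic block
        for target in sheet:                         # Data Destination: "Update ROW"
            if target.get(prompt_column) == prompt:  # Search column / Search value
                target[result_column] = answer
                updated += 1
                break                                # "Take the first found row"
    return updated


def fake_anthropic(prompt: str) -> str:
    """Offline stand-in for the Anthropic request used in this sketch."""
    return f"[answer to: {prompt}]"


sheet = [{"A": "Write a slogan", "B": ""}, {"A": "Name a color", "B": ""}]
count = run_connection([{"A": "Name a color"}], sheet, fake_anthropic)
print(count, sheet[1]["B"])  # 1 [answer to: Name a color]
```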

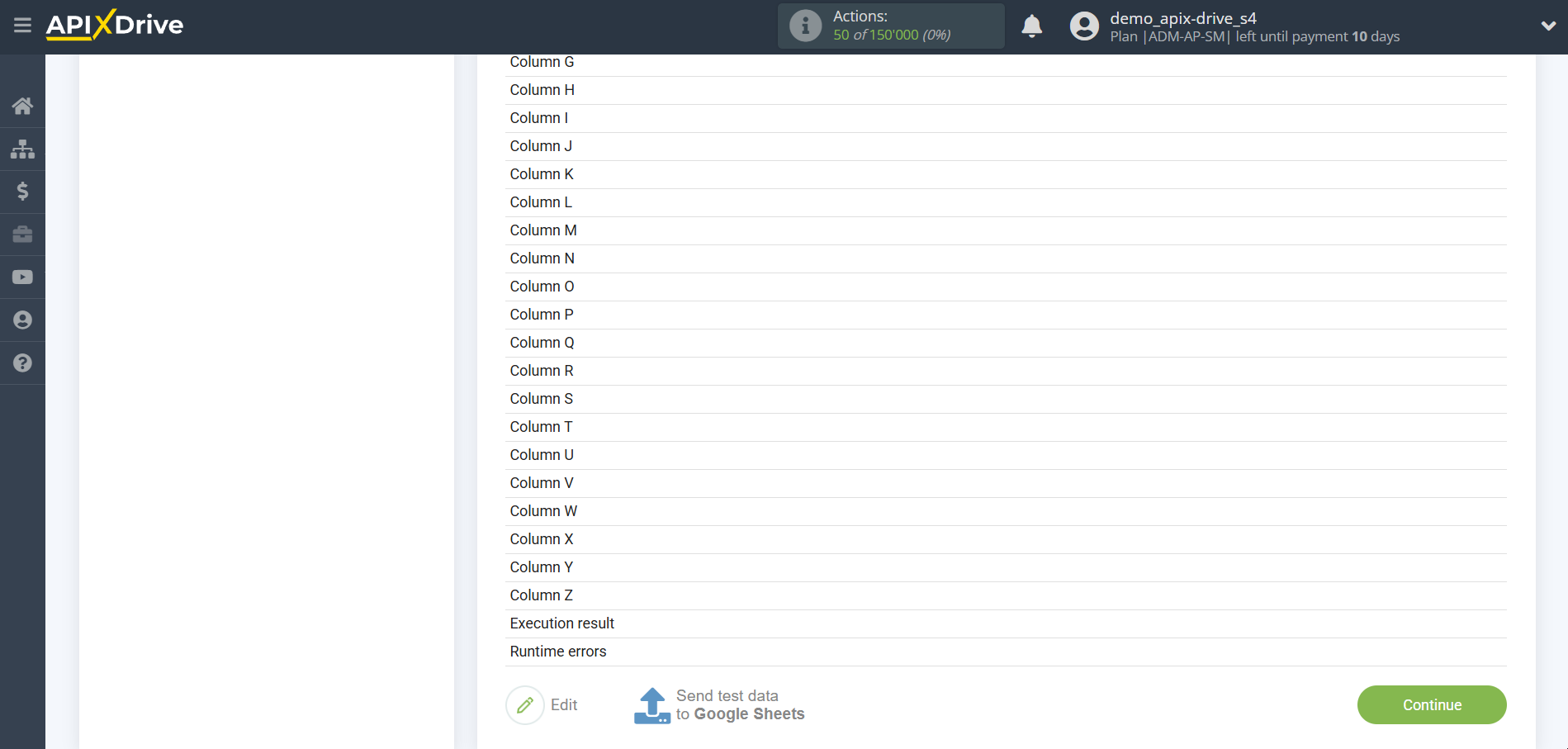

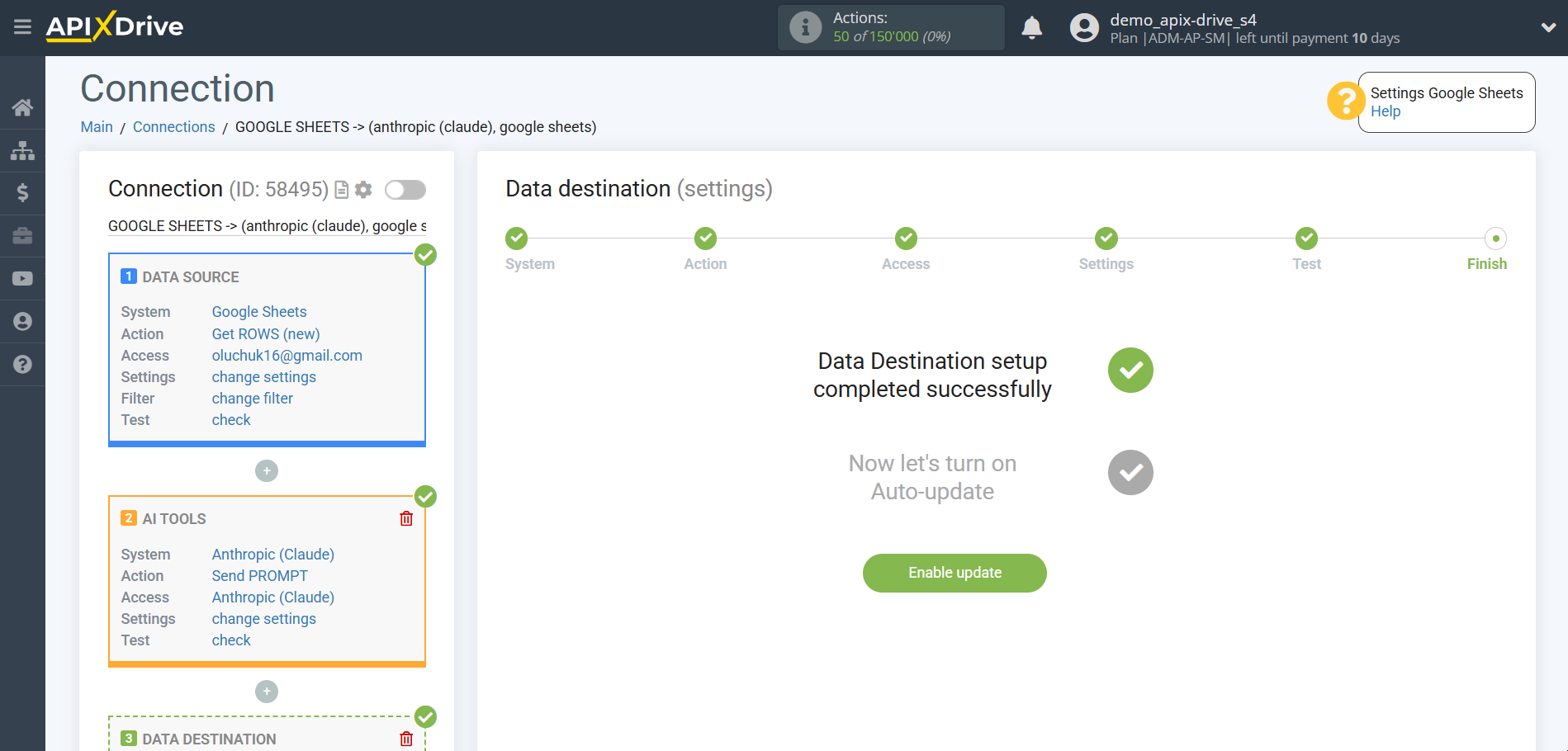

This completes the Data Destination system setup!

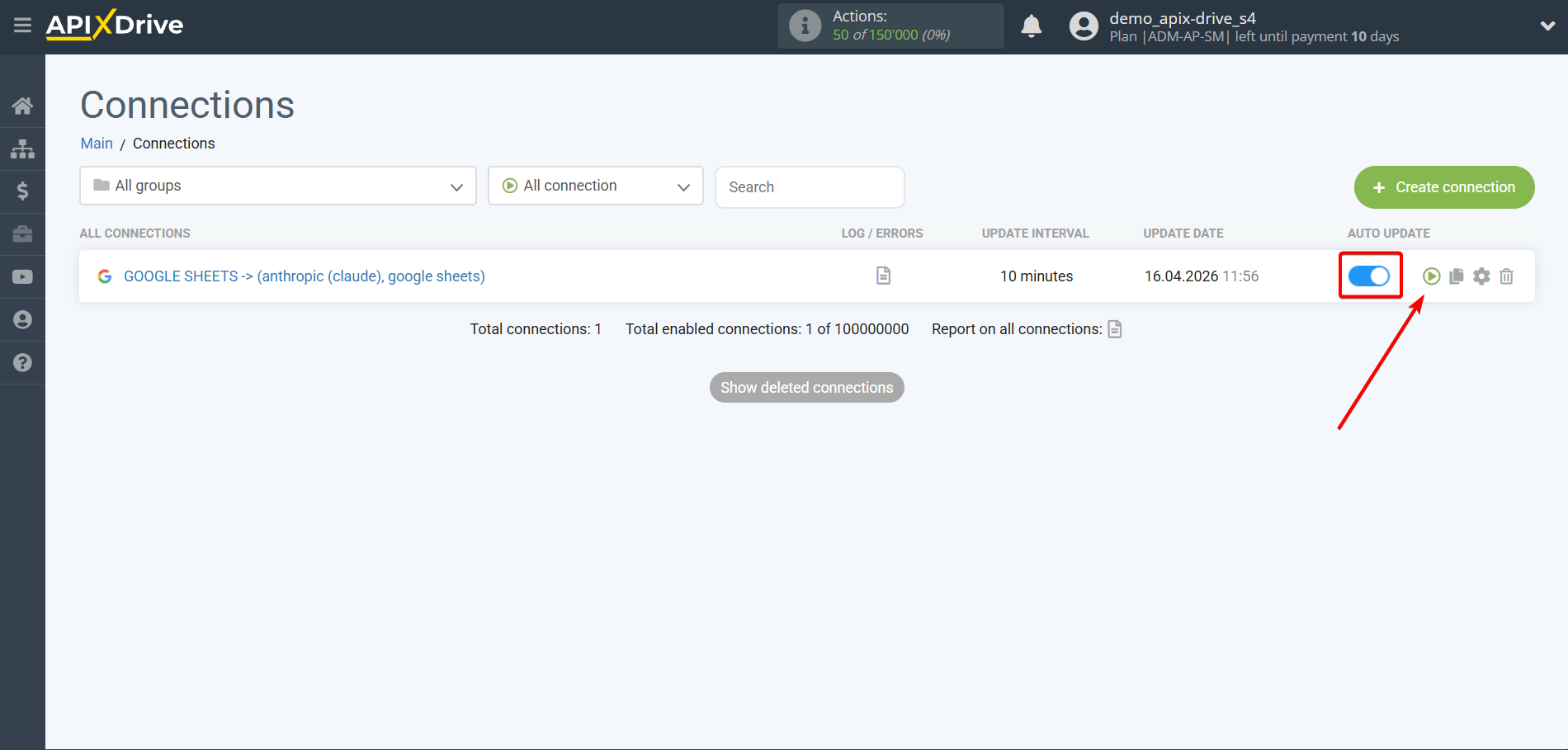

Now you can start choosing the update interval and enabling auto-update.

To do this, click "Enable update".

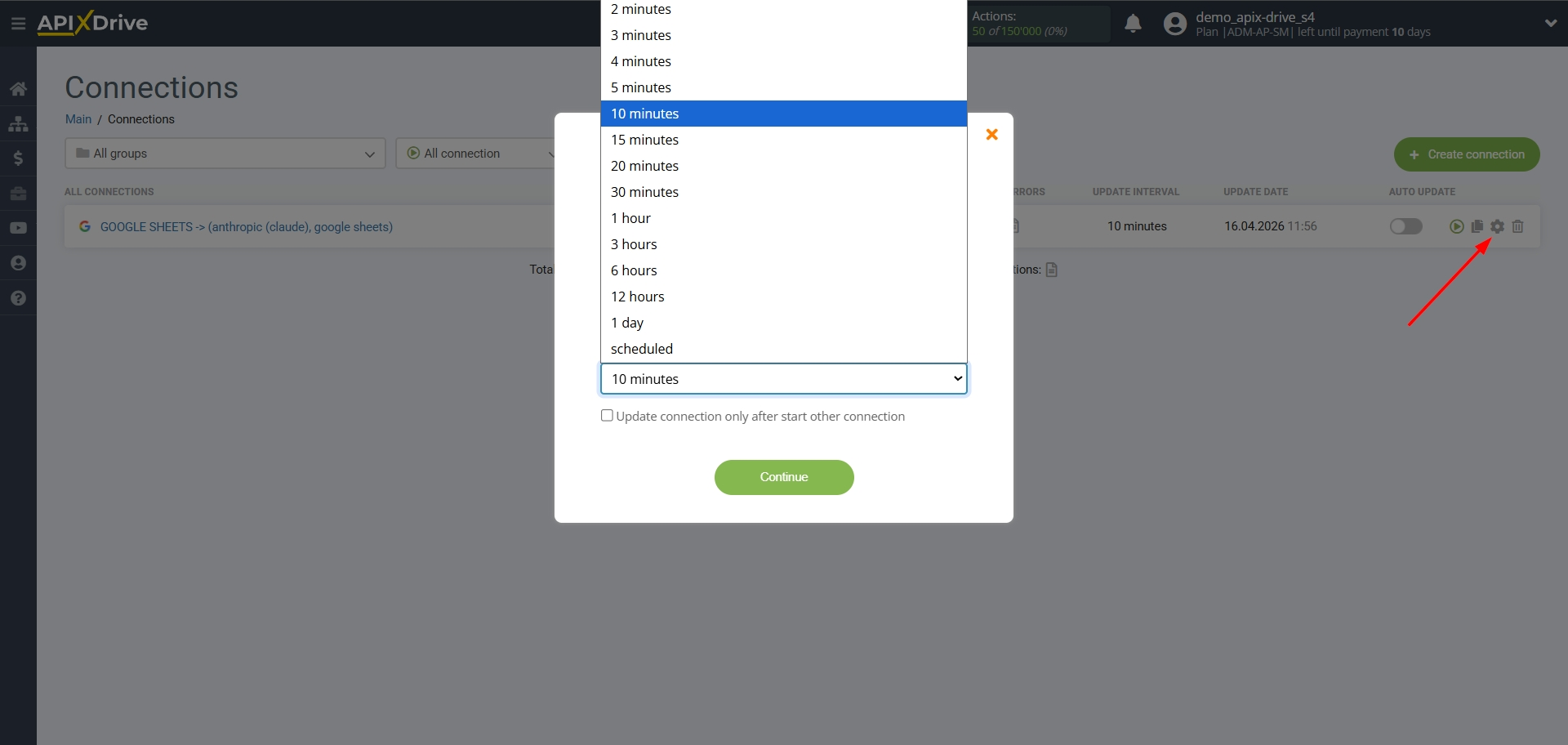

On the main screen, click the gear icon to select the required update interval or set up a scheduled launch. To run the connection at specific times, select scheduled start and specify the time at which the connection update should fire; you can add several times if you need the connection to fire more than once.

Attention! In order for the scheduled run to work at the specified time, the interval between the current time and the specified time must be more than 5 minutes. For example, you select the time 12:10 and the current time is 12:08 - in this case, the automatic update of the connection will occur at 12:10 the next day. If you select the time 12:20 and the current time is 12:13 - the auto-update of the connection will work today and then every day at 12:20.
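
The 5-minute rule above can be expressed as a small function that, given the current time and the scheduled time, returns the moment of the first automatic run. `first_run` is a hypothetical helper sketching the behaviour described in this guide, not ApiX-Drive's code; treating a gap of exactly 5 minutes as too short follows the wording "more than 5 minutes" and is an assumption, and time zones are ignored.

```python
from datetime import datetime, timedelta


def first_run(now: datetime, hour: int, minute: int,
              min_gap: timedelta = timedelta(minutes=5)) -> datetime:
    """Return today's run time if it is more than min_gap away, else tomorrow's."""
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if candidate - now > min_gap:
        return candidate
    # Too close, or already in the past: the first run happens the next day.
    return candidate + timedelta(days=1)


# The two examples from the text above (the date is arbitrary).
print(first_run(datetime(2024, 5, 1, 12, 8), 12, 10))   # 2024-05-02 12:10:00
print(first_run(datetime(2024, 5, 1, 12, 13), 12, 20))  # 2024-05-01 12:20:00
```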

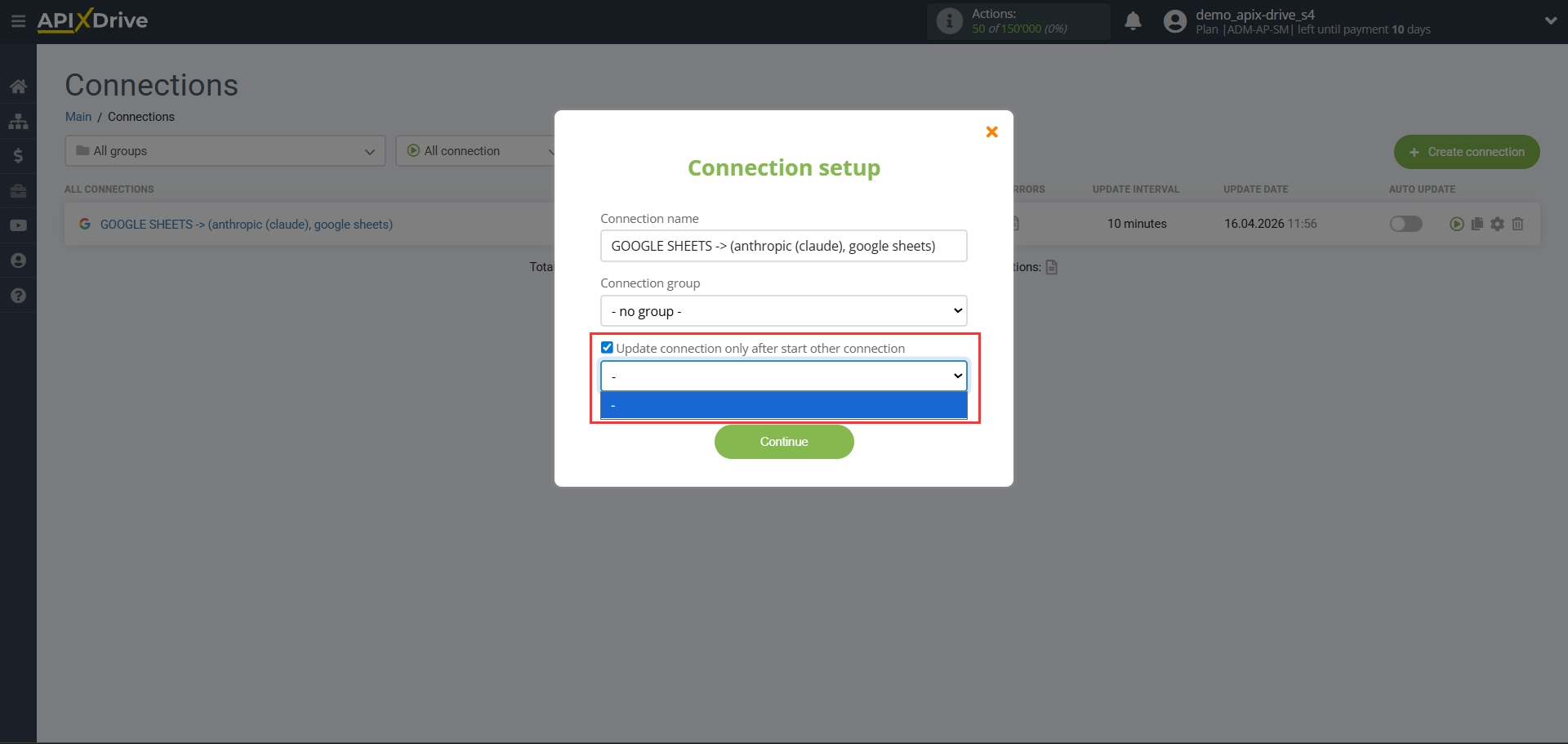

To make the current connection transmit data only after another connection, check the box "Update connection only after start other connection" and specify the connection after which the current connection will be started.

This completes the setup of Anthropic! Everything is quite simple!

Now don't worry, ApiX-Drive will do everything on its own!